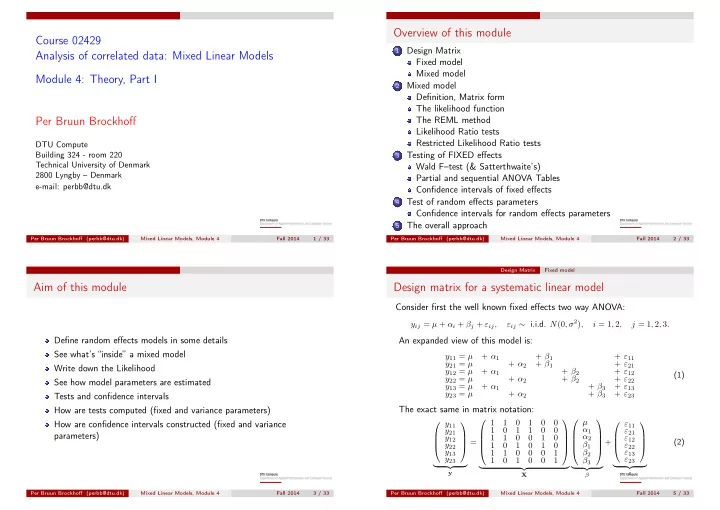

Course 02429
Analysis of correlated data: Mixed Linear Models
Module 4: Theory, Part I

Per Bruun Brockhoff
DTU Compute, Building 324, room 220
Technical University of Denmark
2800 Lyngby, Denmark
e-mail: perbb@dtu.dk

Per Bruun Brockhoff (perbb@dtu.dk) Mixed Linear Models, Module 4 Fall 2014 1 / 33

Overview of this module

1. Design Matrix
   - Fixed model
   - Mixed model
2. Mixed model
   - Definition, Matrix form
   - The likelihood function
   - The REML method
   - Likelihood Ratio tests
   - Restricted Likelihood Ratio tests
3. Testing of FIXED effects
   - Wald F-test (& Satterthwaite's)
   - Partial and sequential ANOVA Tables
   - Confidence intervals of fixed effects
4. Test of random effects parameters
   - Confidence intervals for random effects parameters
5. The overall approach


Aim of this module

- Define random effects models in some detail
- See what's "inside" a mixed model
- Write down the likelihood
- See how model parameters are estimated
- Tests and confidence intervals
  - How are tests computed (fixed and variance parameters)?
  - How are confidence intervals constructed (fixed and variance parameters)?


Design matrix for a systematic linear model

Consider first the well-known fixed effects two-way ANOVA model:

$$
y_{ij} = \mu + \alpha_i + \beta_j + \varepsilon_{ij}, \quad \varepsilon_{ij} \sim \text{i.i.d. } N(0, \sigma^2), \quad i = 1, 2, \; j = 1, 2, 3.
$$

An expanded view of this model is:

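$$
\begin{aligned}
y_{11} &= \mu + \alpha_1 + \beta_1 + \varepsilon_{11} \\
y_{21} &= \mu + \alpha_2 + \beta_1 + \varepsilon_{21} \\
y_{12} &= \mu + \alpha_1 + \beta_2 + \varepsilon_{12} \\
y_{22} &= \mu + \alpha_2 + \beta_2 + \varepsilon_{22} \\
y_{13} &= \mu + \alpha_1 + \beta_3 + \varepsilon_{13} \\
y_{23} &= \mu + \alpha_2 + \beta_3 + \varepsilon_{23}
\end{aligned}
\tag{1}
$$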

The same model in matrix notation:

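$$
\underbrace{\begin{pmatrix} y_{11} \\ y_{21} \\ y_{12} \\ y_{22} \\ y_{13} \\ y_{23} \end{pmatrix}}_{y}
=
\underbrace{\begin{pmatrix}
1 & 1 & 0 & 1 & 0 & 0 \\
1 & 0 & 1 & 1 & 0 & 0 \\
1 & 1 & 0 & 0 & 1 & 0 \\
1 & 0 & 1 & 0 & 1 & 0 \\
1 & 1 & 0 & 0 & 0 & 1 \\
1 & 0 & 1 & 0 & 0 & 1
\end{pmatrix}}_{X}
\underbrace{\begin{pmatrix} \mu \\ \alpha_1 \\ \alpha_2 \\ \beta_1 \\ \beta_2 \\ \beta_3 \end{pmatrix}}_{\beta}
+
\underbrace{\begin{pmatrix} \varepsilon_{11} \\ \varepsilon_{21} \\ \varepsilon_{12} \\ \varepsilon_{22} \\ \varepsilon_{13} \\ \varepsilon_{23} \end{pmatrix}}_{\varepsilon}
\tag{2}
$$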
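As a minimal sketch, the design matrix X in (2) can be built in Python with NumPy. The rows follow the observation order of (1) and the columns follow the parameter order µ, α1, α2, β1, β2, β3. The helper name `design_matrix` and the parameter values in `beta` are made up for illustration only; they are not part of the course material.

```python
import numpy as np

# Observation order as in (1): y11, y21, y12, y22, y13, y23, stored as (i, j)
cells = [(1, 1), (2, 1), (1, 2), (2, 2), (1, 3), (2, 3)]


def design_matrix(cells, n_a=2, n_b=3):
    """Build the 0/1 design matrix with columns mu, alpha_1..n_a, beta_1..n_b.

    Hypothetical helper written for this illustration.
    """
    X = np.zeros((len(cells), 1 + n_a + n_b), dtype=int)
    for row, (i, j) in enumerate(cells):
        X[row, 0] = 1        # every observation contains mu
        X[row, i] = 1        # alpha_i sits in column i (columns 1..n_a)
        X[row, n_a + j] = 1  # beta_j sits in column n_a + j
    return X


X = design_matrix(cells)

# Made-up parameter values (mu, alpha_1, alpha_2, beta_1, beta_2, beta_3)
beta = np.array([10.0, 1.0, -1.0, 0.5, 0.0, -0.5])

# Systematic part of the model: row k of X @ beta equals mu + alpha_i + beta_j
mean = X @ beta

# X has 6 columns but lower rank: the alpha columns sum to the mu column,
# and so do the beta columns
rank = np.linalg.matrix_rank(X)

print(X)
print(mean)
print(rank)
```

Each row of `X` holds exactly three ones, one each for µ, αi and βj, so `X @ beta` reproduces the right-hand sides of (1) without the error terms. The rank check illustrates that this parametrization is over-specified, which is why software usually imposes constraints such as dropping one level per factor.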
