(c) 2003 Thomas G. Dietterich 1

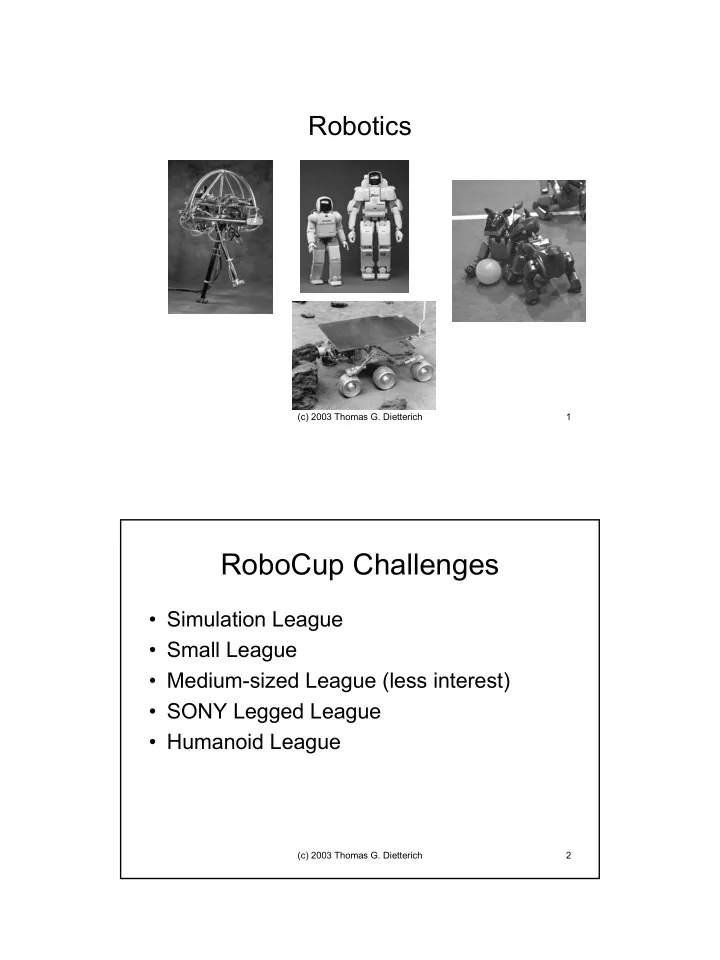

Robotics

RoboCup Challenges

- Simulation League

- Small League

- Medium-sized League (less interest)

- SONY Legged League

- Humanoid League


Figure labels: Bright Light; Dim Light

$\ldots 1) \cdot \sum_{B_t} P(C_t \mid B_t) \cdot \sum_{B_{t-1}} P(B_t \mid B_{t-1}, A_t)$

Diagram variables: $W_{t-1}$, $W_t$, $I_t$, $C_t$, $B_{t-1}$, $B_t$, $A_t$

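Figure labels: state $X$; actions $a_1$, $a_2$; successor states $X_1$, $X_2$, $X_3$; transition probabilities 0.7, 0.3, 0.6, 0.4; policy $\pi$; values 1.5 and 4.0

$V^{\pi}(X) = 0.3 \cdot \gamma(-2) + 0.7 \cdot \gamma(1.5) = 0.405 \quad (\gamma = 0.9)$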
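
The arithmetic in the value computation above can be checked with a short sketch. It assumes, as the slide's formula does, that there is no immediate reward term and that the policy's action leads to successor values -2 and 1.5 with probabilities 0.3 and 0.7. The function name `backup` is a hypothetical helper, not something from the lecture.

```python
# One-step expected backup under a fixed policy, using the slide's numbers.
# Assumption: no immediate reward term, matching the formula on the slide.

def backup(successors, gamma):
    """Return sum over (probability, value) pairs of p * gamma * v."""
    return sum(p * gamma * v for p, v in successors)

gamma = 0.9
# (probability, successor value) pairs for the action chosen by the policy
successors_under_pi = [(0.3, -2.0), (0.7, 1.5)]

v_x = backup(successors_under_pi, gamma)
print(round(v_x, 3))
```

The two terms are 0.3 · 0.9 · (-2) = -0.54 and 0.7 · 0.9 · 1.5 = 0.945, which sum to 0.405.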

- Each player’s current policy is a local optimum if all of the other players’ policies are kept fixed

- Each player has no incentive to change
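
The condition in these two bullets is the one usually called a Nash equilibrium. As a minimal sketch, it can be tested in a two-player matrix game where each "policy" is a single action. The payoff matrices below are a hypothetical Prisoner's Dilemma chosen for illustration, and `is_pure_nash` is an assumed helper name; neither comes from the lecture.

```python
def is_pure_nash(payoff_a, payoff_b, row, col):
    """True if neither player gains by deviating alone from (row, col).

    payoff_a[r][c] is the row player's payoff, payoff_b[r][c] the column
    player's payoff, when the row player picks r and the column player c.
    """
    # Best the row player can do while the column player's action stays fixed.
    best_for_a = max(payoff_a[r][col] for r in range(len(payoff_a)))
    # Best the column player can do while the row player's action stays fixed.
    best_for_b = max(payoff_b[row][c] for c in range(len(payoff_b[row])))
    return payoff_a[row][col] >= best_for_a and payoff_b[row][col] >= best_for_b

# Hypothetical payoffs: action 0 = cooperate, action 1 = defect.
payoff_a = [[-1, -3],
            [0, -2]]
payoff_b = [[-1, 0],
            [-3, -2]]

print(is_pure_nash(payoff_a, payoff_b, 1, 1))  # both defect
print(is_pure_nash(payoff_a, payoff_b, 0, 0))  # both cooperate
```

In this example, mutual defection passes the check because each player's action is a best response to the other's, while mutual cooperation fails because either player could do better by switching alone.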

Joint labels in the figure: R R R P R R

How many (internal) degrees of freedom does this arm have?
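
One way to approach the question is to count one degree of freedom per joint. The sketch below assumes the usual convention that R denotes a revolute (rotating) joint and P a prismatic (sliding) joint, each contributing a single internal degree of freedom; the slide itself does not spell this out, and `internal_dof` is a hypothetical helper.

```python
# Assumption: R = revolute joint, P = prismatic joint, one DOF each.
JOINT_DOF = {"R": 1, "P": 1}

def internal_dof(joint_labels):
    """Sum the degrees of freedom over a space-separated joint sequence."""
    return sum(JOINT_DOF[label] for label in joint_labels.split())

print(internal_dof("R R R P R R"))
```

Under that assumption, the six joint labels give six internal degrees of freedom.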

Cell color indicates the optimal value function (distance to the goal along the optimal policy).
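
A distance-to-goal value function of this kind can be sketched for a small grid. The example assumes deterministic moves in four directions with unit cost per step, in which case breadth-first search outward from the goal gives the same values as the optimal value function. The grid, the `#` obstacle marker, and the function name are all hypothetical choices for illustration.

```python
from collections import deque

def distance_to_goal(grid, goal):
    """Return a table of shortest step counts from each free cell to the goal.

    grid is a list of strings; '#' marks an obstacle. Unreachable cells and
    obstacles are left as None.
    """
    rows, cols = len(grid), len(grid[0])
    dist = [[None] * cols for _ in range(rows)]
    goal_r, goal_c = goal
    dist[goal_r][goal_c] = 0
    queue = deque([goal])
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            in_bounds = 0 <= nr < rows and 0 <= nc < cols
            if in_bounds and grid[nr][nc] != "#" and dist[nr][nc] is None:
                dist[nr][nc] = dist[r][c] + 1
                queue.append((nr, nc))
    return dist

grid = ["...",
        ".#.",
        "..."]
dist = distance_to_goal(grid, (0, 2))
for row in dist:
    print(row)
```

Each cell's optimal action under these assumptions is to step to any neighbor with a smaller distance, so the table also encodes an optimal policy.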
