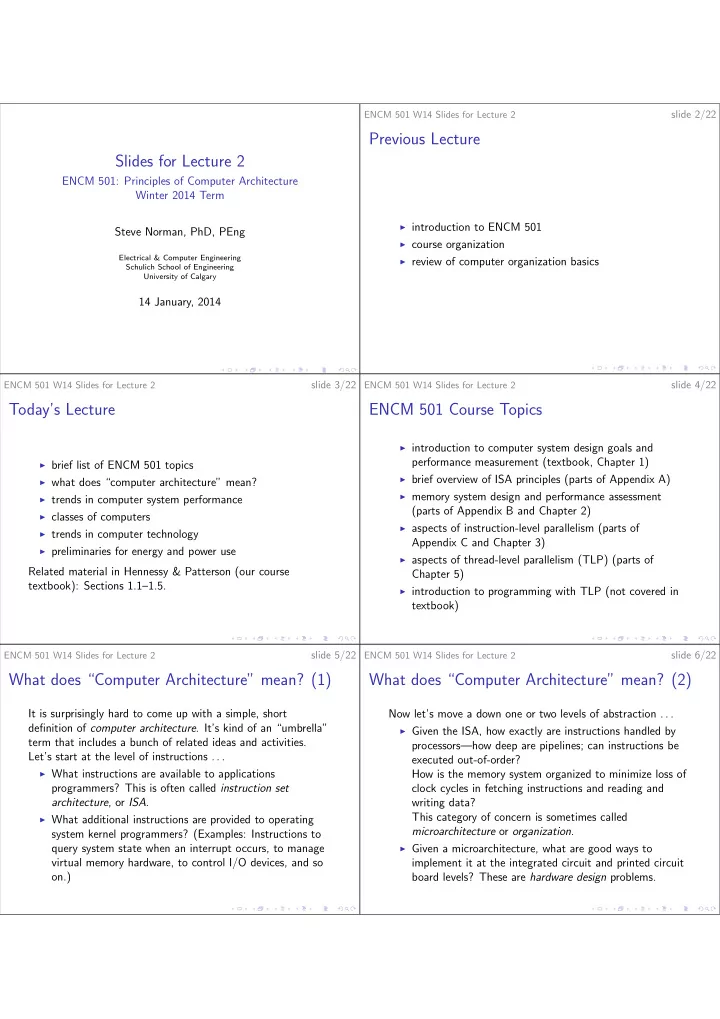

Slides for Lecture 2

ENCM 501: Principles of Computer Architecture
Winter 2014 Term
Steve Norman, PhD, PEng

Electrical & Computer Engineering
Schulich School of Engineering
University of Calgary

14 January, 2014

ENCM 501 W14 Slides for Lecture 2

slide 2/22

Previous Lecture

◮ introduction to ENCM 501
◮ course organization
◮ review of computer organization basics

slide 3/22

Today’s Lecture

◮ brief list of ENCM 501 topics
◮ what does “computer architecture” mean?
◮ trends in computer system performance
◮ classes of computers
◮ trends in computer technology
◮ preliminaries for energy and power use

Related material in Hennessy & Patterson (our course textbook): Sections 1.1–1.5.

slide 4/22

ENCM 501 Course Topics

◮ introduction to computer system design goals and performance measurement (textbook, Chapter 1)
◮ brief overview of ISA principles (parts of Appendix A)
◮ memory system design and performance assessment (parts of Appendix B and Chapter 2)
◮ aspects of instruction-level parallelism (parts of Appendix C and Chapter 3)
◮ aspects of thread-level parallelism (TLP) (parts of Chapter 5)
◮ introduction to programming with TLP (not covered in textbook)

slide 5/22

What does “Computer Architecture” mean? (1)

It is surprisingly hard to come up with a simple, short definition of computer architecture. It’s kind of an “umbrella” term that includes a bunch of related ideas and activities. Let’s start at the level of instructions . . .

◮ What instructions are available to applications programmers? This is often called instruction set architecture, or ISA.
◮ What additional instructions are provided to operating system kernel programmers? (Examples: Instructions to query system state when an interrupt occurs, to manage virtual memory hardware, to control I/O devices, and so on.)

slide 6/22

What does “Computer Architecture” mean? (2)

Now let’s move down one or two levels of abstraction . . .

◮ Given the ISA, how exactly are instructions handled by processors: how deep are pipelines, and can instructions be executed out of order? How is the memory system organized to minimize loss of clock cycles in fetching instructions and reading and writing data? This category of concern is sometimes called microarchitecture or organization.

◮ Given a microarchitecture, what are good ways to