
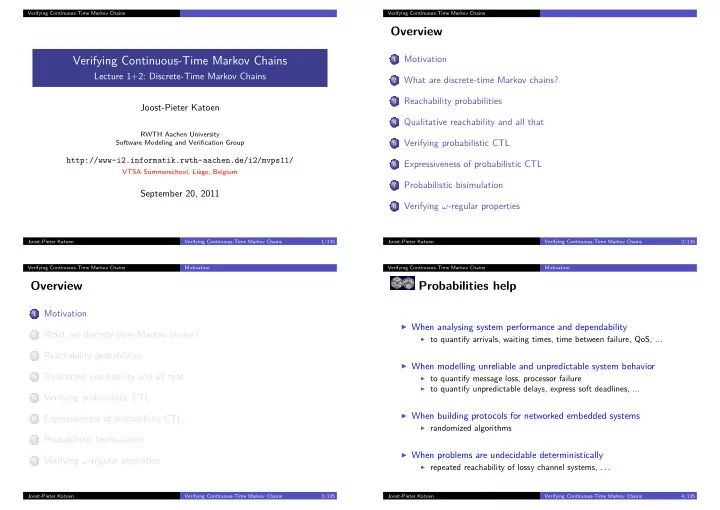

Verifying Continuous-Time Markov Chains

Lecture 1+2: Discrete-Time Markov Chains

Joost-Pieter Katoen

RWTH Aachen University, Software Modeling and Verification Group

http://www-i2.informatik.rwth-aachen.de/i2/mvps11/

VTSA Summerschool, Liège, Belgium

September 20, 2011


Overview

1. Motivation
2. What are discrete-time Markov chains?
3. Reachability probabilities
4. Qualitative reachability and all that
5. Verifying probabilistic CTL
6. Expressiveness of probabilistic CTL
7. Probabilistic bisimulation
8. Verifying ω-regular properties


Probabilities help

◮ When analysing system performance and dependability
    ◮ to quantify arrivals, waiting times, time between failures, QoS, ...

◮ When modelling unreliable and unpredictable system behavior
    ◮ to quantify message loss, processor failure
    ◮ to quantify unpredictable delays, express soft deadlines, ...

◮ When building protocols for networked embedded systems
    ◮ randomized algorithms

◮ When problems are undecidable deterministically
    ◮ repeated reachability of lossy channel systems, ...