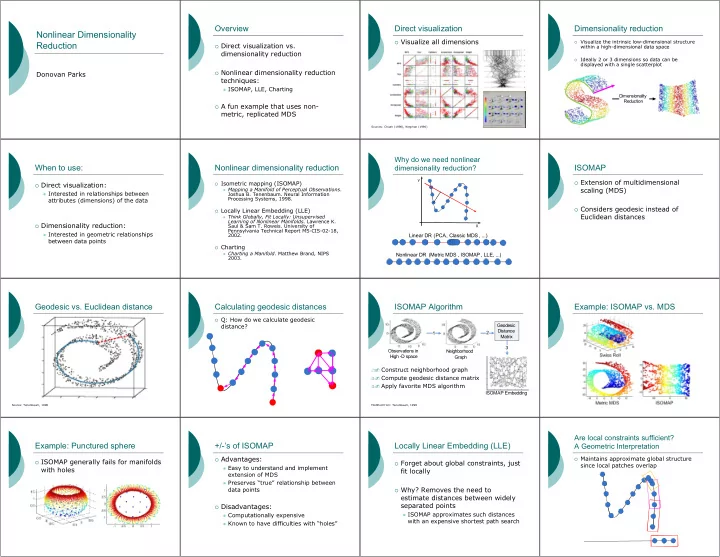

Nonlinear Dimensionality Reduction

Donovan Parks

Overview

Direct visualization vs. dimensionality reduction

Nonlinear dimensionality reduction techniques: ISOMAP, LLE, Charting

A fun example that uses non-metric, replicated MDS

Direct visualization

Visualize all dimensions

Sources: Chuah (1998), Wegman (1990)

Dimensionality reduction

Visualize the intrinsic low-dimensional structure within a high-dimensional data space

Ideally 2 or 3 dimensions so data can be displayed with a single scatterplot

Dimensionality Reduction

When to use:

Direct visualization: Interested in relationships between attributes (dimensions) of the data

Dimensionality reduction: Interested in geometric relationships between data points

Nonlinear dimensionality reduction

Isometric mapping (ISOMAP)

Mapping a Manifold of Perceptual Observations. Joshua B. Tenenbaum. Neural Information Processing Systems, 1998.

Locally Linear Embedding (LLE)

Think Globally, Fit Locally: Unsupervised Learning of Nonlinear Manifolds. Lawrence K. Saul & Sam T. Roweis. University of Pennsylvania Technical Report MS-CIS-02-18, 2002.

Charting

Charting a Manifold. Matthew Brand, NIPS 2003.

Why do we need nonlinear dimensionality reduction?

X Y

Linear DR (PCA, Classic MDS, ...)

Nonlinear DR (Metric MDS, ISOMAP, LLE, ...)

ISOMAP

Extension of multidimensional scaling (MDS)

Considers geodesic instead of Euclidean distances
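To make the distinction concrete, here is a minimal sketch (not from the slides) that compares the two distances for the endpoints of a unit semicircle, an illustrative 1-D manifold embedded in 2-D. The sample count `n` and the variable names are assumptions chosen for the example.

```python
import math

def euclidean(p, q):
    """Straight-line distance between two 2-D points."""
    return math.hypot(p[0] - q[0], p[1] - q[1])

# Sample n + 1 points along a unit semicircle (a 1-D manifold embedded in 2-D).
n = 100
points = [(math.cos(math.pi * i / n), math.sin(math.pi * i / n)) for i in range(n + 1)]

# Euclidean distance cuts straight across the interior of the semicircle.
straight = euclidean(points[0], points[-1])

# Geodesic distance follows the curve; approximate it by summing the short
# hops between consecutive samples.
along_curve = sum(euclidean(points[i], points[i + 1]) for i in range(n))

print(f"Euclidean: {straight:.3f}")
print(f"Geodesic (approx.): {along_curve:.3f}, exact: {math.pi:.3f}")
```

The endpoints are close in the Euclidean sense but far apart along the manifold, which is the relationship ISOMAP tries to preserve.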

Geodesic vs. Euclidean distance

Source: Tenenbaum, 1998

Calculating geodesic distances

Q: How do we calculate geodesic distance?

ISOMAP Algorithm

1. Construct neighborhood graph

2. Compute geodesic distance matrix

3. Apply favorite MDS algorithm
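The three steps above can be sketched in a few lines of NumPy. This is an illustrative sketch under stated assumptions, not the implementation from Tenenbaum's paper: it assumes a k-nearest-neighbor graph, Floyd-Warshall for the shortest paths, and classical MDS as the "favorite" MDS algorithm. The function name `isomap`, its parameters, and the spiral test data are all hypothetical choices for the example.

```python
import numpy as np

def isomap(X, n_neighbors=6, n_components=2):
    """Basic ISOMAP sketch: k-NN graph, shortest paths, classical MDS."""
    n = X.shape[0]
    # Pairwise Euclidean distances in the original high-dimensional space.
    diff = X[:, None, :] - X[None, :, :]
    D = np.sqrt((diff ** 2).sum(axis=-1))

    # Step 1: neighborhood graph. Keep only edges to each point's k nearest
    # neighbors (column 0 of the argsort is the point itself).
    G = np.full((n, n), np.inf)
    np.fill_diagonal(G, 0.0)
    nearest = np.argsort(D, axis=1)[:, 1:n_neighbors + 1]
    for i in range(n):
        G[i, nearest[i]] = D[i, nearest[i]]
    G = np.minimum(G, G.T)  # make the graph undirected

    # Step 2: geodesic distances approximated by shortest paths in the graph
    # (Floyd-Warshall, O(n^3); this is the expensive part).
    for k in range(n):
        G = np.minimum(G, G[:, k:k + 1] + G[k:k + 1, :])
    if np.isinf(G).any():
        raise ValueError("neighborhood graph is disconnected; increase n_neighbors")

    # Step 3: classical MDS on the geodesic distance matrix.
    J = np.eye(n) - np.ones((n, n)) / n       # centering matrix
    B = -0.5 * J @ (G ** 2) @ J               # double-centered squared distances
    eigvals, eigvecs = np.linalg.eigh(B)      # eigenvalues in ascending order
    top = np.argsort(eigvals)[::-1][:n_components]
    return eigvecs[:, top] * np.sqrt(np.maximum(eigvals[top], 0.0))

# Usage: unroll a 2-D spiral (a curled-up 1-D manifold) into one coordinate.
t = np.linspace(0, 3 * np.pi, 150)
X = np.column_stack([t * np.cos(t), t * np.sin(t)])
Y = isomap(X, n_neighbors=4, n_components=1)
print(Y.shape)
```

On this spiral the single output coordinate should track position along the curve rather than straight-line position in the plane. The neighborhood size matters: too large and the graph shortcuts across turns of the spiral, too small and the graph becomes disconnected.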

[Figure: observations in high-D space, neighborhood graph, geodesic distance matrix, ISOMAP embedding, with steps labeled 1 to 3]

Modified from: Tenenbaum, 1998

Example: ISOMAP vs. MDS

Example: Punctured sphere

ISOMAP generally fails for manifolds with holes

+/-’s of ISOMAP

Advantages:

Easy to understand and implement extension of MDS

Preserves “true” relationship between data points

Disadvantages:

Computationally expensive

Known to have difficulties with “holes”

Locally Linear Embedding (LLE)

Forget about global constraints, just fit locally

Why? Removes the need to estimate distances between widely separated points

ISOMAP approximates such distances with an expensive shortest path search

Are local constraints sufficient?

A Geometric Interpretation

Maintains approximate global structure since local patches overlap
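The slides do not spell out the LLE steps, so the following is a hedged sketch based on the commonly described form of the algorithm from the Saul & Roweis report cited earlier: reconstruct each point from its nearest neighbors with weights that sum to one, then find low-dimensional coordinates that those same weights reconstruct best. The function names, the regularization constant `reg`, and the swiss-roll-style test data are assumptions made for the example.

```python
import numpy as np

def reconstruction_weights(X, n_neighbors=8, reg=1e-3):
    """Local fit: weights that best rebuild each point from its neighbors."""
    n = X.shape[0]
    diff = X[:, None, :] - X[None, :, :]
    D = (diff ** 2).sum(axis=-1)
    # Column 0 of the argsort is the point itself, so skip it.
    nearest = np.argsort(D, axis=1)[:, 1:n_neighbors + 1]
    W = np.zeros((n, n))
    for i in range(n):
        Z = X[nearest[i]] - X[i]                        # neighbors centered on point i
        C = Z @ Z.T                                     # local Gram matrix (k x k)
        C += reg * np.trace(C) * np.eye(n_neighbors)    # regularize (assumed constant)
        w = np.linalg.solve(C, np.ones(n_neighbors))
        W[i, nearest[i]] = w / w.sum()                  # enforce sum-to-one constraint
    return W

def lle(X, n_neighbors=8, n_components=2, reg=1e-3):
    """Embedding: coordinates that the local weights reconstruct best."""
    n = X.shape[0]
    W = reconstruction_weights(X, n_neighbors, reg)
    M = (np.eye(n) - W).T @ (np.eye(n) - W)
    eigvals, eigvecs = np.linalg.eigh(M)                # eigenvalues in ascending order
    # The bottom eigenvector is (ideally) constant; keep the next n_components.
    return eigvecs[:, 1:n_components + 1]

# Usage: a deterministic swiss-roll-style sheet in 3-D (20 x 10 grid of points).
t, h = np.meshgrid(np.linspace(1.5 * np.pi, 4.5 * np.pi, 20), np.linspace(0, 10, 10))
X = np.column_stack([(t * np.cos(t)).ravel(), h.ravel(), (t * np.sin(t)).ravel()])
W = reconstruction_weights(X)
Y = lle(X)
print(Y.shape)
```

No pairwise distances between far-apart points are computed: the only global step is one eigendecomposition, and global structure emerges only because neighboring patches share points. How well the result unrolls a given manifold depends on `n_neighbors` and `reg`.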