
- 1

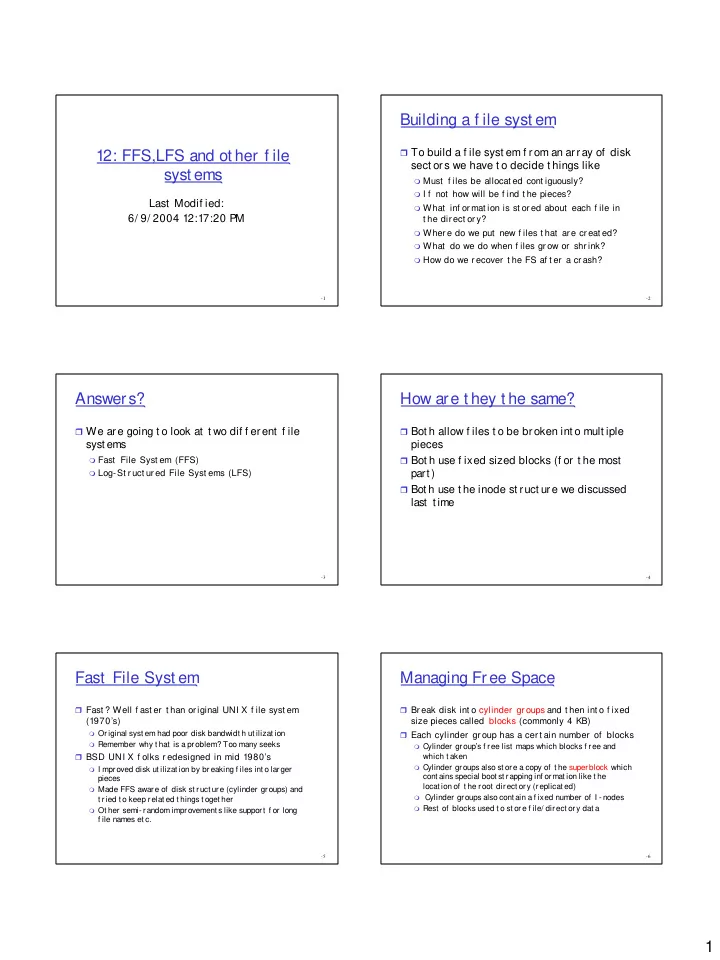

12: FFS, LFS and other file systems

Last Modified: 6/9/2004 12:17:20 PM

- 2

Building a file system

To build a file system from an array of disk sectors, we have to decide things like:

Must files be allocated contiguously? If not, how will we find the pieces?

What information is stored about each file in the directory?

Where do we put new files that are created?

What do we do when files grow or shrink?

How do we recover the FS after a crash?

- 3

Answers?

We are going to look at two different file systems:

Fast File System (FFS)

Log-Structured File Systems (LFS)

- 4

How are they the same?

Both allow files to be broken into multiple pieces

Both use fixed-size blocks (for the most part)

Both use the inode structure we discussed last time
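A minimal sketch of what "multiple pieces" plus "fixed-size blocks" means for lookups. It assumes a 4096-byte block size and uses a plain Python list as a simplified stand-in for the block pointers an inode holds; the name `locate` and the block numbers are hypothetical.

```python
BLOCK_SIZE = 4096  # assumed block size in bytes


def locate(block_list, offset):
    """Map a byte offset in a file to (disk block number, offset within that block).

    block_list is a simplified stand-in for an inode's block pointers:
    entry i is the disk block holding the i-th piece of the file.
    """
    index, within = divmod(offset, BLOCK_SIZE)
    return block_list[index], within


# Hypothetical file whose three pieces are scattered across the disk.
blocks = [907, 12, 455]
print(locate(blocks, 5000))
```

Because every piece has the same size, finding a byte is one division and one table lookup, no matter where the pieces sit on disk.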

- 5

Fast File System

Fast? Well, faster than the original UNIX file system (1970s)

The original system had poor disk bandwidth utilization

Remember why that is a problem? Too many seeks

BSD UNIX folks redesigned it in the mid-1980s

Improved disk utilization by breaking files into larger pieces

Made FFS aware of disk structure (cylinder groups) and tried to keep related things together

Other semi-random improvements, like support for long file names, etc.
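A rough back-of-the-envelope sketch of why larger pieces help when seeks dominate. The seek time (10 ms) and raw transfer rate (20 MB/s) are illustrative assumptions, not figures from the lecture, and `effective_bandwidth` is a hypothetical helper name.

```python
def effective_bandwidth(block_bytes, seek_s=0.010, transfer_bytes_per_s=20e6):
    """Bytes per second delivered if every block read pays for one seek.

    seek_s and transfer_bytes_per_s are assumed, illustrative values.
    """
    transfer_s = block_bytes / transfer_bytes_per_s
    return block_bytes / (seek_s + transfer_s)


# Compare a few block sizes under the assumed disk parameters.
for size in (512, 4096, 65536):
    bw = effective_bandwidth(size)
    print(f"{size:>6} B blocks: {bw / 1e6:.2f} MB/s")
```

Under these assumptions the seek cost is fixed per piece, so the larger the piece, the more of the disk's raw bandwidth is actually used. Keeping related blocks close together attacks the same problem from the other side by making the seeks shorter or unnecessary.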

- 6

Managing Free Space

Break the disk into cylinder groups and then into fixed-size pieces called blocks (commonly 4 KB)

Each cylinder group has a certain number of blocks

A cylinder group’s free list maps which blocks are free and which are taken

Cylinder groups also store a copy of the superblock, which contains special bootstrapping information like the location of the root directory (replicated)

Cylinder groups also contain a fixed number of inodes

The rest of the blocks are used to store file/directory data
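The layout above can be sketched as a toy model: each cylinder group carries its own free-block map and a fixed number of inode slots. The class and function names are hypothetical, and the "try the preferred group, then the others in order" fallback is a simplifying assumption, not the actual FFS allocation policy.

```python
class CylinderGroup:
    """Toy model of one cylinder group: a free-block map plus inode slots."""

    def __init__(self, num_blocks, num_inodes):
        self.free = [True] * num_blocks    # True means the block is free
        self.inodes = [None] * num_inodes  # fixed number of inode slots

    def alloc_block(self):
        """Mark the first free block as taken and return its index, or None if full."""
        for i, is_free in enumerate(self.free):
            if is_free:
                self.free[i] = False
                return i
        return None

    def free_block(self, i):
        """Return block i to the free map."""
        self.free[i] = True

    def free_count(self):
        """Number of blocks still free in this group."""
        return sum(self.free)


def alloc_near(groups, preferred):
    """Allocate in the preferred group if possible, else in the first group with space.

    Returns (group index, block index). The fallback order is an assumption
    made for illustration only.
    """
    order = [preferred] + [g for g in range(len(groups)) if g != preferred]
    for g in order:
        block = groups[g].alloc_block()
        if block is not None:
            return g, block
    raise OSError("no free blocks in any cylinder group")
```

A caller that wants a file's blocks kept together would keep passing the same `preferred` group, for example the group that holds the file's inode, and only spill into another group when that one is full.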