1

- 1

18: Dist ribut ed Syst ems

Last Modif ied: 7/ 3/ 2004 1:49:01 PM

- 2

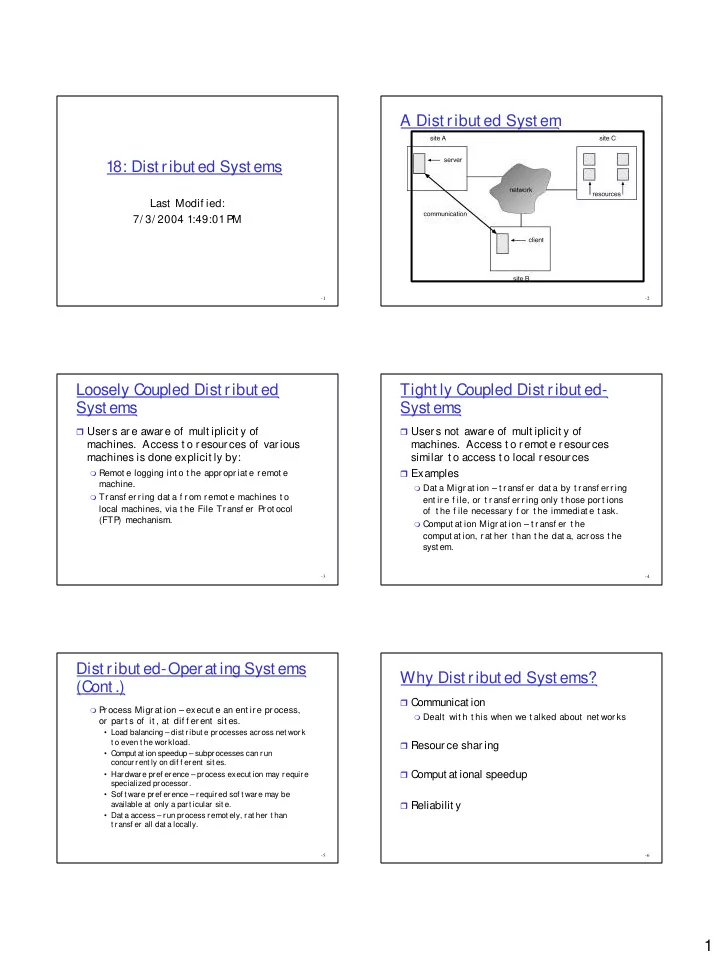

A Dist ribut ed Syst em

- 3

Loosely Coupled Dist ribut ed Syst ems

Users are aware of mult iplicit y of

- machines. Access t o resources of various

machines is done explicit ly by:

Remot e logging int o t he appr opr iat e r emot e

machine.

Tr ansf er r ing dat a f r om r emot e machines t o

local machines, via t he File Transf er Prot ocol (FTP ) mechanism.

- 4

Tight ly Coupled Dist ribut ed- Syst ems

Users not aware of mult iplicit y of

- machines. Access t o remot e resources

similar t o access t o local resources

Examples

Dat a Migr at ion – t r ansf er dat a by t r ansf er r ing

ent ir e f ile, or t r ansf er r ing only t hose por t ions

- f t he f ile necessary f or t he immediat e t ask.

Comput at ion Migr at ion – t r ansf er t he

comput at ion, r at her t han t he dat a, acr oss t he syst em.

- 5

Dist ribut ed-Operat ing Syst ems (Cont .)

Pr ocess Migr at ion – execut e an ent ir e pr ocess,

- r part s of it , at dif f erent sit es.

- Load balancing – dist ribut e processes across net work

t o even t he workload.

- Comput at ion speedup – subprocesses can run

concurrent ly on dif f erent sit es.

- Hardware pref erence – process execut ion may require

specialized processor.

- Sof t ware pref erence – required sof t ware may be

available at only a part icular sit e.

- Dat a access – run process remot ely, rat her t han

t ransf er all dat a locally.

- 6

Why Dist ribut ed Syst ems?

Communicat ion

Dealt wit h t his when we t alked about net wor ks