
- 1

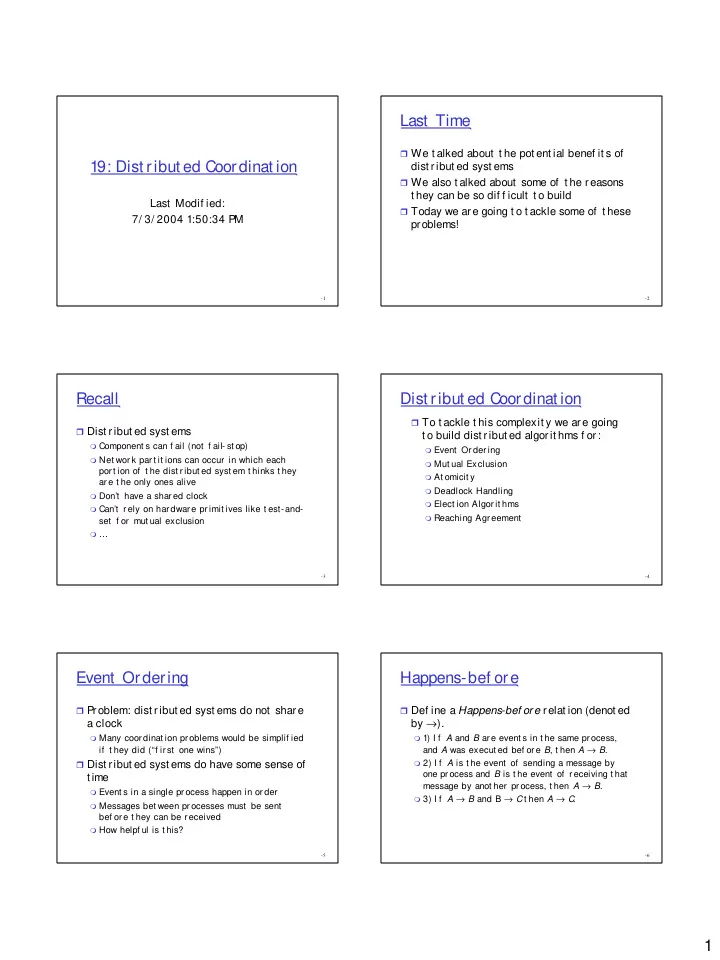

19: Distributed Coordination

Last Modified: 7/3/2004 1:50:34 PM

- 2

Last Time

We talked about the potential benefits of distributed systems

We also talked about some of the reasons they can be so difficult to build

Today we are going to tackle some of these problems!

- 3

Recall

Distributed systems

Components can fail (not fail-stop)

Network partitions can occur in which each portion of the distributed system thinks it is the only one alive

Don't have a shared clock

Can't rely on hardware primitives like test-and-set for mutual exclusion

…

- 4

Distributed Coordination

To tackle this complexity we are going to build distributed algorithms for:

Event Ordering

Mutual Exclusion

Atomicity

Deadlock Handling

Election Algorithms

Reaching Agreement

- 5

Event Ordering

Problem: distributed systems do not share a clock

Many coordination problems would be simplified if they did ("first one wins")

Distributed systems do have some sense of time

Events in a single process happen in order

Messages between processes must be sent before they can be received

How helpful is this?
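
To make the two ordering facts above concrete, here is a minimal Python sketch. The `Event` record, the process names `P` and `Q`, and the helper `directly_ordered` are all hypothetical names chosen for illustration; they are not part of the lecture. The sketch only checks the two facts as stated and makes no attempt to chain them together.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    process: str  # hypothetical process name
    seq: int      # position in that process's own execution order
    label: str


def directly_ordered(a, b, messages):
    """Return True if one of the two facts above places a before b."""
    # Fact 1: events in a single process happen in order.
    if a.process == b.process:
        return a.seq < b.seq
    # Fact 2: a message must be sent before it can be received.
    return (a, b) in messages


# Illustrative events: P sends message m, Q receives it.
p_send = Event("P", 1, "send m")
p_next = Event("P", 2, "local work")
q_first = Event("Q", 1, "local work")
q_recv = Event("Q", 2, "receive m")

# Each pair is (send event, matching receive event).
messages = {(p_send, q_recv)}

print(directly_ordered(p_send, p_next, messages))   # True
print(directly_ordered(p_send, q_recv, messages))   # True
print(directly_ordered(p_next, q_first, messages))  # False
print(directly_ordered(q_first, p_next, messages))  # False
```

The last two calls show a pair of events that neither fact orders in either direction, so without a shared clock some events simply cannot be compared.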

- 6

Happens-before

Define a Happens-before relation (denoted by →).

1) If A and B are events in the same process, and A was executed before B, then A → B.

2) If A is the event of sending a message by one process and B is the event of receiving that message by another process, then A → B.

3) If A → B and B → C