
CS4513 Distributed Computer Systems

Synchronization (Ch 5)

Introduction

- Communication alone is not enough: processes also need to cooperate, which requires synchronization

- Distributed synchronization is needed for

– transactions (e.g., a bank account accessed via an ATM)

– access to a shared resource (e.g., a network printer)

– ordering of events (e.g., network games where players have different ping times)

Outline

- Intro (done)

- Clock Synchronization (next)

- Global Time and State

- Election Algorithms

- Mutual Exclusion

- Distributed Transactions

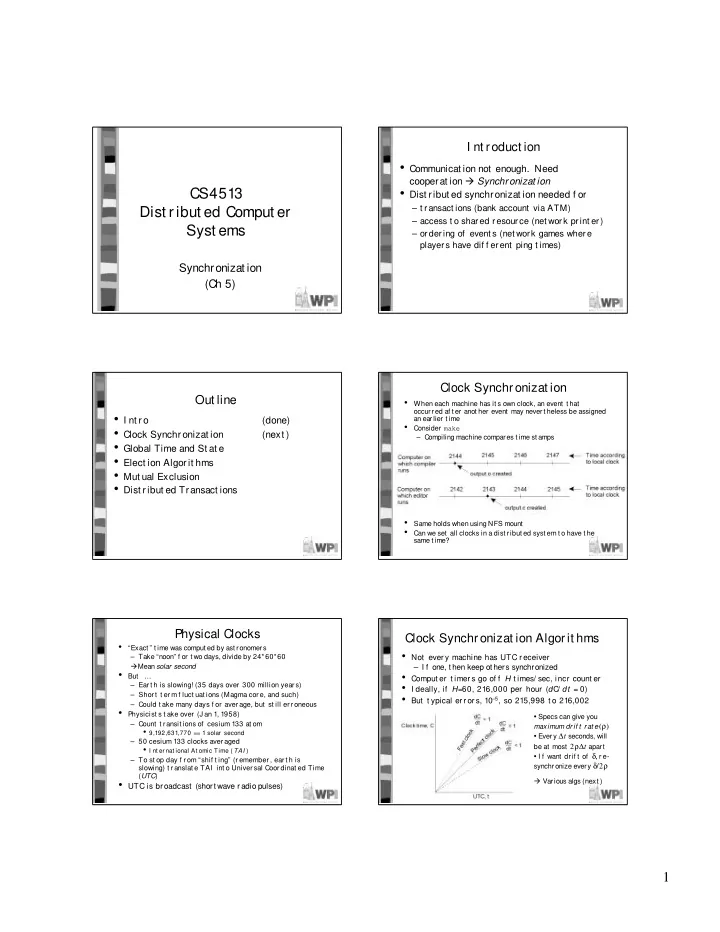

Clock Synchronization

- When each machine has its own clock, an event that occurred after another event may nevertheless be assigned an earlier time

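- Consider make

– The compiling machine compares timestamps of source and object files to decide what to recompile; a source file edited on a machine whose clock lags behind can look older than its object file, so it is not recompiled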

- The same problem arises when files are shared through an NFS mount

- Can we set all clocks in a distributed system to show the same time?
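Here is a toy model of the make problem. It assumes two things: each file's timestamp comes from the clock of the machine that writes it, and each clock differs from true time by a fixed offset. The names Machine and needs_rebuild are hypothetical. needs_rebuild only mimics make's basic rule, which is to rebuild a target when a prerequisite is newer.

```python
from dataclasses import dataclass


@dataclass
class Machine:
    """A machine whose local clock differs from true time by a fixed offset (seconds)."""
    name: str
    offset: float

    def now(self, true_time: float) -> float:
        # Local clock reading = true time + this machine's (assumed constant) offset
        return true_time + self.offset


def needs_rebuild(src_mtime: float, obj_mtime: float) -> bool:
    # make-style rule: rebuild the target if the source is newer than it
    return src_mtime > obj_mtime


compiler = Machine("compiler", offset=0.0)
editor = Machine("editor", offset=-2.0)  # hypothetical: editor clock lags by 2 s

# True time 100: output.o is produced on the compiler machine
obj_mtime = compiler.now(100.0)  # 100.0
# True time 101, i.e. AFTER compiling: output.c is edited on the editor machine
src_mtime = editor.now(101.0)  # 99.0

print(needs_rebuild(src_mtime, obj_mtime))  # False: the edit is silently ignored
```

With both offsets set to 0, the same edit gets timestamp 101.0 and the rebuild happens. This is the motivation for synchronizing clocks.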

Physical Clocks

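- “Exact” time was computed by astronomers

– Measure the interval between two consecutive solar noons (a solar day) and divide by 24*60*60 = 86,400 to get a solar second; averaging over many days gives the mean solar second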

- But …

– Earth's rotation is slowing! (a year had about 35 more days 300 million years ago)

– Short-term fluctuations (e.g., turbulence in Earth's molten core)

– Averaging over many days helps, but the result is still imprecise

- Physicists take over (Jan 1, 1958)

– Count transitions of the cesium 133 atom

– 9,192,631,770 transitions = 1 second, chosen to match the mean solar second of the time

– The readings of about 50 cesium 133 clocks around the world are averaged

- The average gives International Atomic Time (TAI)

– To keep the day from “shifting” relative to the sun (remember, Earth is slowing), TAI is translated into Universal Coordinated Time (UTC) by inserting leap seconds when needed

- UTC is broadcast (e.g., as shortwave radio pulses)

Clock Synchronization Algorithms

- Not every machine has a UTC receiver

– If one machine does, the others can be kept synchronized to it

- A computer's timer interrupts H times per second; each interrupt increments a clock counter C

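- Ideally, with H = 60, the counter advances 216,000 times per hour (dC/dt = 1)

- But typical timer chips have a relative error of about 10^-5, so a real clock counts between about 215,998 and 216,002 ticks per hour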

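- Specs can give you the maximum drift rate ρ, where 1 - ρ ≤ dC/dt ≤ 1 + ρ

- After ∆t seconds, two such clocks can be at most 2ρ∆t apart (one may run fast while the other runs slow)

- To keep two clocks within δ of each other, resynchronize at least every δ/(2ρ) seconds

- Various algorithms (next)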
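The drift arithmetic on this slide can be checked with a few lines of Python. This sketch assumes a constant worst-case drift rate. The function names are hypothetical, and rho and delta stand for ρ and δ above.

```python
def ticks_per_hour(h: int) -> int:
    # A perfect clock (dC/dt = 1) with H timer interrupts per second
    return h * 60 * 60


def tick_range(h: int, rho: float) -> tuple[float, float]:
    # A clock within spec satisfies 1 - rho <= dC/dt <= 1 + rho
    ideal = ticks_per_hour(h)
    return ideal * (1 - rho), ideal * (1 + rho)


def max_skew(rho: float, dt: float) -> float:
    # Worst case after dt seconds: one clock runs at 1 + rho, the other at 1 - rho
    return 2 * rho * dt


def resync_interval(delta: float, rho: float) -> float:
    # Largest dt that still satisfies 2 * rho * dt <= delta
    return delta / (2 * rho)


if __name__ == "__main__":
    lo, hi = tick_range(60, 1e-5)
    print(ticks_per_hour(60))  # 216000
    print(round(lo), round(hi))  # 215998 216002
    print(f"{max_skew(1e-5, 3600):.3f}")  # 0.072 (seconds of skew after one hour)
    print(f"{resync_interval(0.001, 1e-5):.1f}")  # 50.0 (seconds between resyncs)
```

For example, with ρ = 10^-5 and a tolerance δ of 1 ms, clocks must be resynchronized roughly every 50 seconds.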