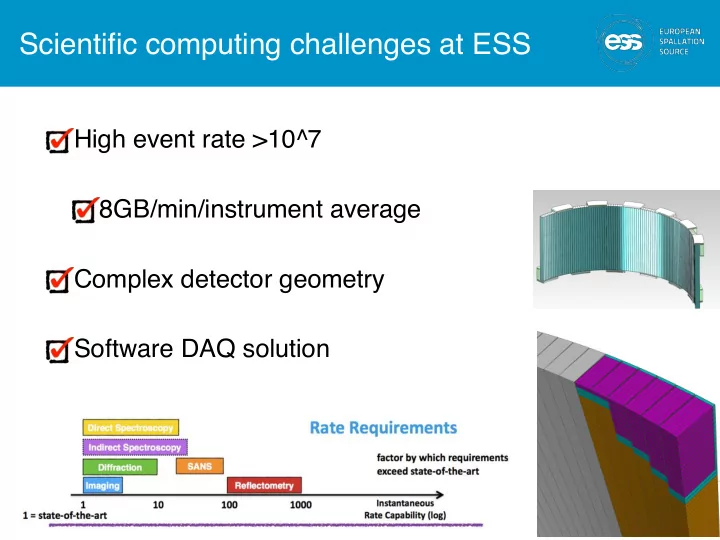

Scientific computing challenges at ESS

High event rate (>10^7 events/s); 8 GB/min/instrument average; Complex detector geometry; Software DAQ solution
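To put the average rate on this slide in more familiar units, the arithmetic can be sketched as below. This is only a back-of-envelope conversion: it assumes "GB" means 10^9 bytes and that an instrument runs continuously at the quoted average, neither of which the slide states.

```python
# Back-of-envelope conversion of the slide's average data rate.
# Assumptions (not from the slide): GB = 10**9 bytes, continuous running.
GB = 10**9
avg_bytes_per_min = 8 * GB  # "8 GB/min/instrument average"

bytes_per_second = avg_bytes_per_min / 60
bytes_per_day = avg_bytes_per_min * 60 * 24

print(f"{bytes_per_second / 1e6:.1f} MB/s per instrument")
print(f"{bytes_per_day / 1e12:.2f} TB/day per instrument")
```

Under these assumptions the average corresponds to roughly 133 MB/s, or about 11.5 TB per instrument per day of continuous running.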



Mantid development at the ESS: Construction phase; Core framework development; MPI


Data Systems & Technologies 6FTE; Instrument Data 8FTE; Data Analysis & Modeling 11FTE

Copenhagen Data Centre; DMSC servers in Lund; Clusters, Workstations; Disks, Parallel File System, Database Servers; Networks (incl. Lund – CPH); Data transfer, Back-up & Archive; External facing Servers; User Program Software – Proposal & Scheduling Systems; Instrument Control User Interfaces; Live Visualization; Data reduction (MANTID); Analysis codes; MCSTAS support + dev.

DAQ & Data Management 13FTE

Detector readout; Data Acquisition; File writers (NeXus); Data curation

Project admin 3FTE

Project support; Budget & schedule; Meeting organisation

Data Systems & Technologies 10FTE; Data reduction and data analysis 10FTE +10FTE; Scientific modelling and simulation 4FTE

Storage and compute; Data centre operations Lund; Data centre operation CPH; Inter site connection & network; Software Deployment; Security; Data reduction & visualisation support and development; Data analysis support & development; Support and integration of MD and a-priori simulation tools

Experiment control Data curation 13FTE

Readout; DAQ and Control; Data management; Data curation


Project admin (Petra Aulin) 3FTE

Project support; Budget & schedule; Meeting

User office support 4FTE

Development, support and maintenance of user office solution: user database; proposal system; visit system; sample tracking

Detector data interface FEA - FE-BE Event formation unit(s) (Pixel positioning)

Detector signal

Frames of Events & Aggregation of meta data Detectors

Fast SE & motion, i.e. with a latency that is outside of spec for EPICS

Fast SE data

High speed environment data interface Neutron data and meta data Aggregator Mantid subscriber

File writing service

Choppers

Control Box Control Box

DM group

ICS

Detector group

Time stamped signals Time stamped signals Instrument PV access gateway

ERC ERC ChiC

Sample environment Motion axis

PLC layer

Apache Kafka

ERC ERC

catalogue

IOC(s) IOC(s)

Python based experiment control system & visualisation

control & configuration DB access

Reduction / Analysis API

Data reduction: automated reduction; data correction; visualisation of reduced data

PFS API config

Kafka

Analysis interface: data analysis codes; visualisation of analysed data

API config File Nexus Processed Analysis file in

DOI WEB

Accelerator data PSS Status User Office DB

Kafka

ERC: Event receiver card

Fast SE readout

ID group DAM group DST group

ERC

Chopper & motion & SE

ICS software, CCDB, IOC factory naming, SCR


BrightnESS is funded by the European Union’s Horizon 2020 research and innovation programme under grant agreement No. 676548

Detector Backend Detector Backend

Event Formation Unit Event Formation Unit

EPICS Bridge

Data Aggregation (Kafka)

NeXus File Writing

Live Feedback

EPICS

10 GB/s fibre Experiment control

Mantid reduction: MPI + Kafka listener; Mantid automatic reduction; Instrument view of live data

~4×10^7 events/s
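The pipeline on this slide has one aggregated event stream feeding both NeXus file writing and live feedback. A log-based broker such as Kafka supports this because each consumer tracks its own read position. The following is a minimal in-memory sketch of that idea only. It does not use Kafka or any ESS software, and `EventFrame`, `TopicLog`, `Consumer` and all field names and values are hypothetical, chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class EventFrame:
    """One frame of events (hypothetical layout, not the ESS wire format)."""
    pulse_time_ns: int
    events: List[Tuple[int, int]]  # (time_of_flight_ns, pixel_id)


@dataclass
class TopicLog:
    """Append-only list standing in for a single broker topic."""
    frames: List[EventFrame] = field(default_factory=list)

    def publish(self, frame: EventFrame) -> None:
        self.frames.append(frame)

    def read_from(self, offset: int) -> List[EventFrame]:
        return self.frames[offset:]


class Consumer:
    """Reads the shared log at its own pace by keeping a private offset."""

    def __init__(self, log: TopicLog) -> None:
        self.log = log
        self.offset = 0

    def poll(self) -> List[EventFrame]:
        new_frames = self.log.read_from(self.offset)
        self.offset += len(new_frames)
        return new_frames


log = TopicLog()
file_writer = Consumer(log)  # stands in for the file writing service
live_view = Consumer(log)    # stands in for a live-data subscriber

# Illustrative frames; the numbers carry no physical meaning.
log.publish(EventFrame(0, [(1200, 7), (1350, 7)]))
log.publish(EventFrame(1, [(900, 3)]))

written = file_writer.poll()      # file writer catches up first
log.publish(EventFrame(2, [(1100, 7)]))
seen_live = live_view.poll()      # live view polls later, misses nothing

print(len(written), len(seen_live))
```

Because reading does not remove frames from the log, a slow or restarted subscriber can catch up without affecting the others, which is the property the sketch is meant to show.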


Functional safety Developer freedom

In collaboration with STFC


PixID # Spectrum #
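The labels above suggest a mapping from detector pixel IDs to spectrum numbers. As a rough illustration of what such a mapping does to event data, the sketch below counts events per spectrum. The pixel IDs, spectrum numbers and grouping are invented, and this plain-Python version is an assumption for illustration rather than how Mantid implements it.

```python
from collections import Counter

# Hypothetical mapping: several detector pixels grouped into one spectrum.
pixel_to_spectrum = {101: 1, 102: 1, 103: 2, 104: 2, 105: 3}

# Pixel IDs of incoming events (illustrative; 999 has no mapping entry).
event_pixel_ids = [101, 103, 103, 102, 105, 104, 999]

counts = Counter()
unmapped = 0
for pixel_id in event_pixel_ids:
    spectrum = pixel_to_spectrum.get(pixel_id)
    if spectrum is None:
        unmapped += 1  # keep track of events that hit unknown pixels
    else:
        counts[spectrum] += 1

print(dict(sorted(counts.items())), unmapped)
```

Counting unmapped events separately, rather than dropping them silently, makes a wrong or incomplete mapping table easier to spot.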


In collaboration with STFC
