CS 4100: Artificial Intelligence

Reinforcement Learning II

Jan-Willem van de Meent – Northeastern University

[These slides were created by Dan Klein and Pieter Abbeel for CS188 Intro to AI at UC Berkeley. All CS188 materials are available at http://ai.berkeley.edu.]

Reinforcement Learning

- Still assume a Markov decision process (MDP):
  - A set of states s ∈ S
  - A set of actions (per state) A
  - A model T(s,a,s’)
  - A reward function R(s,a,s’)
- Still looking for a policy π(s)
- New twist: We don’t know T or R
- Idea: Don’t have T and R, but we do have samples (s, a, r, s’)

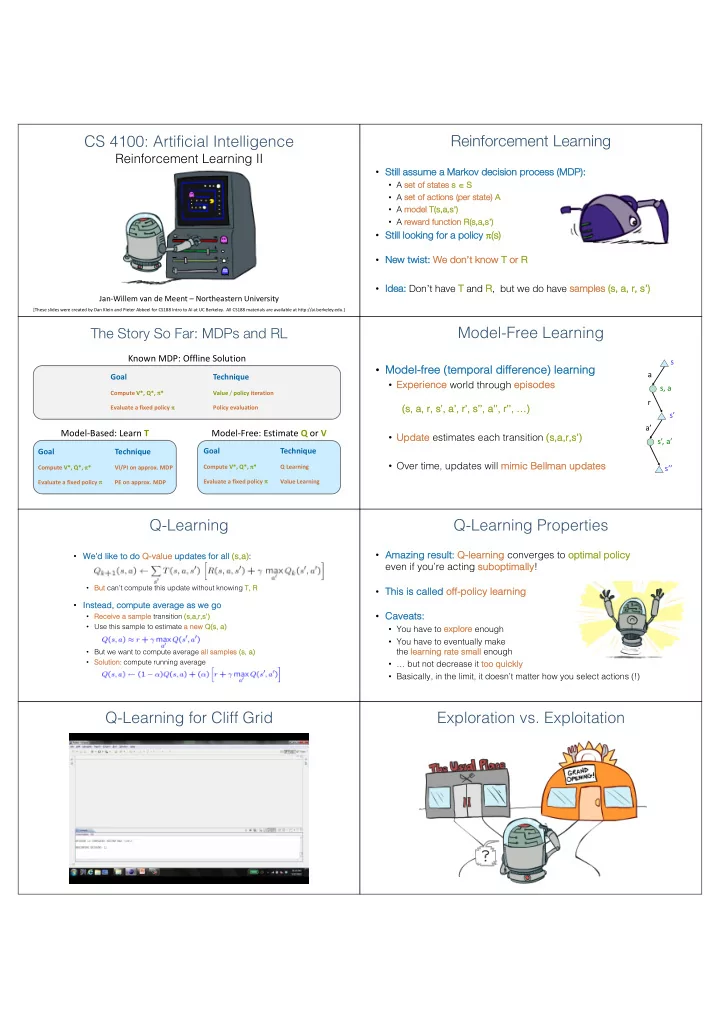
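To make the "samples instead of T and R" setting concrete, here is a minimal sketch of an environment whose model is hidden from the agent. The class `TwoStateWorld`, its states `"A"` and `"B"`, its actions, and all probabilities are hypothetical and chosen only for illustration; they do not come from the slides.

```python
import random

class TwoStateWorld:
    """Hypothetical toy MDP: the agent may call step() but never reads _T or _R."""

    def __init__(self, seed=0):
        self._rng = random.Random(seed)
        # Hidden model T(s, a, s'): probability of each next state.
        self._T = {
            ("A", "stay"): {"A": 0.9, "B": 0.1},
            ("A", "go"):   {"A": 0.2, "B": 0.8},
            ("B", "stay"): {"B": 1.0},
            ("B", "go"):   {"A": 1.0},
        }
        # Hidden reward R(s, a, s'): in this toy it depends only on s'.
        self._R = {"A": 0.0, "B": 1.0}

    def step(self, s, a):
        """Sample s' ~ T(s, a, .) and return one transition (s, a, r, s')."""
        next_states = list(self._T[(s, a)])
        probs = [self._T[(s, a)][n] for n in next_states]
        s_next = self._rng.choices(next_states, weights=probs)[0]
        return (s, a, self._R[s_next], s_next)

env = TwoStateWorld()
samples = [env.step("A", "go") for _ in range(5)]  # all the learner gets to see
```

The learner only ever receives tuples like those in `samples`; everything it knows about T and R has to be inferred from them.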

The Story So Far: MDPs and RL

Known MDP: Offline Solution

| Goal | Technique |
| --- | --- |
| Compute V*, Q*, π* | Value / policy iteration |
| Evaluate a fixed policy π | Policy evaluation |

Model-Based: Learn T

| Goal | Technique |
| --- | --- |
| Compute V*, Q*, π* | VI/PI on approx. MDP |
| Evaluate a fixed policy π | PE on approx. MDP |

Model-Free: Estimate Q or V

| Goal | Technique |
| --- | --- |
| Compute V*, Q*, π* | Q-learning |
| Evaluate a fixed policy π | Value learning |
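As a rough illustration of the model-based route, one common way to build the approximate MDP is to count observed outcomes and average observed rewards. The function name `estimate_model` and the sample data below are assumptions made for this sketch, not part of the slides.

```python
from collections import Counter, defaultdict

def estimate_model(samples):
    """Estimate (T_hat, R_hat) from a list of (s, a, r, s') tuples by counting."""
    counts = defaultdict(Counter)      # counts[(s, a)][s'] = times s' followed (s, a)
    reward_sums = defaultdict(float)   # summed reward per (s, a, s')
    for s, a, r, s_next in samples:
        counts[(s, a)][s_next] += 1
        reward_sums[(s, a, s_next)] += r

    T_hat, R_hat = {}, {}
    for (s, a), next_counts in counts.items():
        total = sum(next_counts.values())
        for s_next, n in next_counts.items():
            T_hat[(s, a, s_next)] = n / total                         # relative frequency
            R_hat[(s, a, s_next)] = reward_sums[(s, a, s_next)] / n   # mean reward
    return T_hat, R_hat

# Hypothetical experience: three tries of ("A", "go") and one of ("B", "go").
samples = [
    ("A", "go", 1.0, "B"),
    ("A", "go", 1.0, "B"),
    ("A", "go", 0.0, "A"),
    ("B", "go", 0.0, "A"),
]
T_hat, R_hat = estimate_model(samples)
```

Value iteration or policy evaluation can then be run on `T_hat` and `R_hat` as if they were the true model. The model-free techniques skip this step and estimate Q or V directly.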

Model-Free Learning

- Model-free (temporal difference) learning
  - Experience world through episodes (s, a, r, s’, a’, r’, s’’, a’’, r’’, …)
  - Update estimates each transition (s, a, r, s’)
  - Over time, updates will mimic Bellman updates
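A per-transition update of this kind can be sketched for value learning under a fixed policy. The function name `td_update`, the learning rate `alpha`, the discount `gamma`, and the example episode are assumptions for illustration; unseen states are assumed to start at value 0.

```python
def td_update(V, s, r, s_next, alpha=0.5, gamma=0.9):
    """Move V[s] toward the one-step sample r + gamma * V[s'] (temporal difference)."""
    sample = r + gamma * V.get(s_next, 0.0)              # value suggested by this transition
    V[s] = (1 - alpha) * V.get(s, 0.0) + alpha * sample  # blend old estimate and sample
    return V[s]

V = {}
# Hypothetical episode of (s, a, r, s') transitions; "C" is treated as terminal.
episode = [("A", "go", 0.0, "B"), ("B", "go", 1.0, "C")]
for s, a, r, s_next in episode:
    td_update(V, s, r, s_next)
```

Each update touches only the state just left, so no model is needed. Repeating such updates over many episodes averages over the outcomes that a Bellman update would weight by T.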

Q-Learning

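- We’d like to do Q-value updates for all (s, a):

  $$Q_{k+1}(s,a) \leftarrow \sum_{s'} T(s,a,s') \left[ R(s,a,s') + \gamma \max_{a'} Q_k(s',a') \right]$$

  - But can’t compute this update without knowing T, R
- Instead, compute average as we go
  - Receive a sample transition (s, a, r, s’)
  - Use this sample to estimate a new Q(s, a): $\text{sample} = r + \gamma \max_{a'} Q(s',a')$
  - But we want to compute the average over all samples for (s, a)
  - Solution: compute a running average with learning rate α: $Q(s,a) \leftarrow (1-\alpha)\,Q(s,a) + \alpha \cdot \text{sample}$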
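The running-average idea can be sketched in a few lines. The function name `q_update`, the learning rate `alpha`, the discount `gamma`, and the two example transitions are assumptions for illustration; unseen Q-values are assumed to start at 0.

```python
from collections import defaultdict

def q_update(Q, s, a, r, s_next, actions, alpha=0.5, gamma=0.9):
    """Apply one running-average Q-learning update for a sample (s, a, r, s')."""
    # New estimate of Q(s, a) suggested by this single transition.
    sample = r + gamma * max(Q[(s_next, a2)] for a2 in actions)
    # Running average: keep (1 - alpha) of the old value, mix in alpha of the sample.
    Q[(s, a)] = (1 - alpha) * Q[(s, a)] + alpha * sample
    return Q[(s, a)]

Q = defaultdict(float)                      # every Q(s, a) starts at 0.0
actions = ["stay", "go"]
q_update(Q, "A", "go", 1.0, "B", actions)   # sample = 1.0,  Q(A, go) becomes 0.5
q_update(Q, "A", "go", 0.0, "A", actions)   # sample = 0.45, Q(A, go) becomes 0.475
```

Only the visited pair (s, a) changes on each call, and neither T nor R appears anywhere in the update.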

Q-Learning Properties

- Amazing result: Q-learning converges to optimal policy even if you’re acting suboptimally!
- This is called off-policy learning
- Caveats:
  - You have to explore enough
  - You have to eventually make the learning rate small enough
  - … but not decrease it too quickly

- Basically, in the limit, it doesn’t matter how you select actions (!)
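A small experiment can illustrate the off-policy property. In the sketch below the agent picks actions uniformly at random and never acts greedily, yet the greedy policy read off the learned Q-values is the optimal one for this toy problem. The three-state chain, the names `step` and `q_learning`, and the schedule `alpha = 1 / N(s, a)` are assumptions for illustration. A 1/N schedule is one standard choice that shrinks the learning rate without shrinking it too quickly.

```python
import random
from collections import defaultdict

ACTIONS = ["left", "right"]
GOAL = 2  # terminal state

def step(s, a):
    """Hypothetical deterministic chain 0 - 1 - 2; entering state 2 pays reward 1."""
    s_next = s + 1 if a == "right" else 0    # "left" always returns to state 0
    return (1.0 if s_next == GOAL else 0.0), s_next

def q_learning(num_steps=5000, gamma=0.9, seed=0):
    rng = random.Random(seed)
    Q = defaultdict(float)       # Q[(s, a)], zero-initialised (also at the terminal state)
    visits = defaultdict(int)    # N(s, a), drives the decaying learning rate
    s = 0
    for _ in range(num_steps):
        a = rng.choice(ACTIONS)              # behaviour policy: uniformly random
        r, s_next = step(s, a)
        visits[(s, a)] += 1
        alpha = 1.0 / visits[(s, a)]         # decays, but not too quickly
        sample = r + gamma * max(Q[(s_next, a2)] for a2 in ACTIONS)
        Q[(s, a)] = (1 - alpha) * Q[(s, a)] + alpha * sample
        s = 0 if s_next == GOAL else s_next  # start a new episode after the goal
    return Q

Q = q_learning()
greedy = {s: max(ACTIONS, key=lambda a: Q[(s, a)]) for s in (0, 1)}
```

In this chain the optimal policy is to move right in both states, and `greedy` recovers that choice even though the data came from random behaviour. The random policy also takes care of the exploration caveat, since it keeps trying every action in every state.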