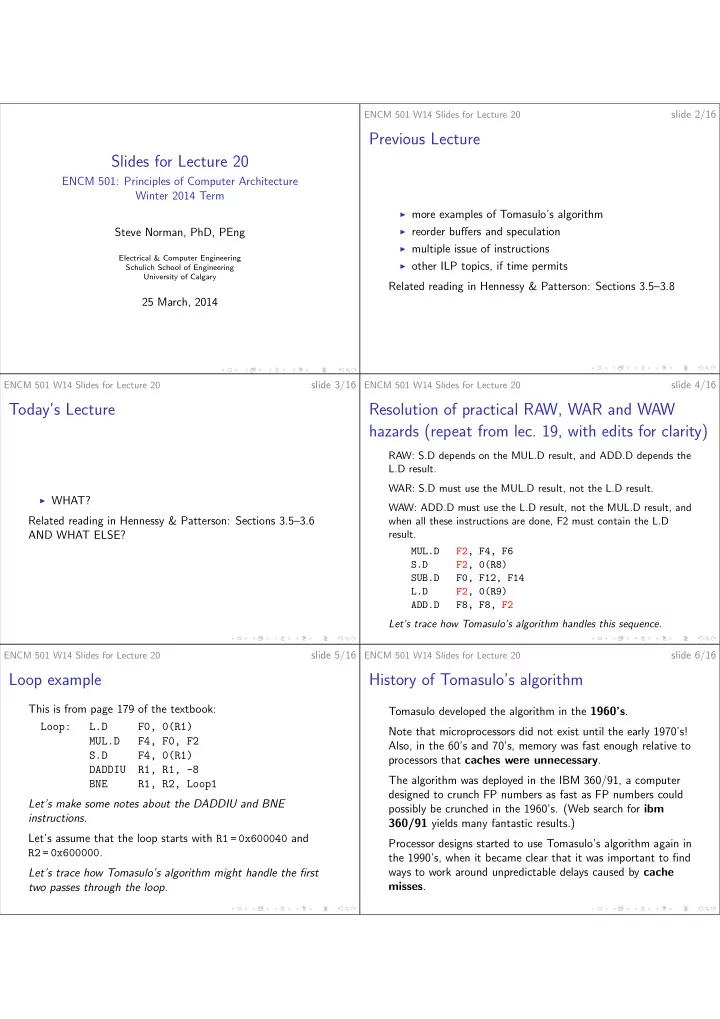

SLIDE 3 ENCM 501 W14 Slides for Lecture 20

slide 13/16

Register file changes:

◮ The Qi field for each register is replaced by a Busy flag and a Reorder # field. Busy = 0 means the register is up-to-date; Busy = 1 means the register is waiting for a result from whatever entry in the reorder buffer matches the Reorder #.

◮ The register file does not watch the CDB for results.

The ROB must watch the CDB for results for all of the instructions within the ROB that don’t yet have results.
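The register file changes above can be sketched in a few lines of Python. This is only an illustrative model, not the lecture's own code: the names `Register`, `RobEntry`, `issue`, `cdb_broadcast`, and `commit` are hypothetical, and the commit step (copying a finished result from the ROB into the register file) is assumed from the usual textbook description of a reorder buffer rather than stated on this slide.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Register:
    value: int = 0
    busy: bool = False              # False: value is up-to-date
    reorder: Optional[int] = None   # ROB entry that will supply the value


@dataclass
class RobEntry:
    dest_reg: str                   # register to update at commit (assumed field)
    ready: bool = False             # True once the result arrived on the CDB
    value: Optional[int] = None


def issue(regs, rob, rob_num, dest_reg):
    """Allocate ROB entry rob_num for an instruction that writes dest_reg."""
    rob[rob_num] = RobEntry(dest_reg)
    regs[dest_reg].busy = True
    regs[dest_reg].reorder = rob_num


def cdb_broadcast(rob, rob_num, value):
    """Only the ROB captures the result; the register file is not touched."""
    entry = rob.get(rob_num)
    if entry is not None and not entry.ready:
        entry.value = value
        entry.ready = True


def commit(regs, rob, rob_num):
    """Copy a finished result into the register file and free the ROB entry."""
    entry = rob.pop(rob_num)
    assert entry.ready
    reg = regs[entry.dest_reg]
    reg.value = entry.value
    if reg.reorder == rob_num:      # no later instruction renamed this register
        reg.busy = False
        reg.reorder = None


regs = {"F2": Register(value=10)}
rob = {}
issue(regs, rob, 5, "F2")           # F2 now waits on ROB entry 5
cdb_broadcast(rob, 5, 42)           # result lands in the ROB only
value_before_commit = regs["F2"].value
commit(regs, rob, 5)                # register file is updated here
```

In this sketch the broadcast changes only the ROB entry; `F2` keeps its old value until `commit` runs, which is the sense in which the register file does not watch the CDB.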

slide 14/16

The reservation stations and functional units work very much as before, except:

◮ the Qj and Qk fields hold ROB entry numbers instead of reservation station numbers;

◮ each reservation station has a Dest field to hold an ROB entry number;

◮ when a reservation station broadcasts its result on the CDB, it includes the Dest field value to help both the ROB and the other reservation stations.
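A minimal sketch of a reservation station with ROB-numbered tags might look like the following. The class `ReservationStation`, its method names, and the two-operation ALU are assumptions made for illustration; only the Qj, Qk, and Dest fields come from the slide.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReservationStation:
    op: str
    dest: int                    # ROB entry number that receives the result
    vj: Optional[int] = None     # operand values, once known
    vk: Optional[int] = None
    qj: Optional[int] = None     # ROB entry numbers still being waited on
    qk: Optional[int] = None

    def ready(self):
        return self.qj is None and self.qk is None

    def snoop(self, tag, value):
        """Capture a CDB result whose ROB tag matches a pending operand."""
        if self.qj == tag:
            self.vj, self.qj = value, None
        if self.qk == tag:
            self.vk, self.qk = value, None

    def execute(self):
        """Return the (Dest tag, result) pair to broadcast on the CDB."""
        assert self.ready()
        result = self.vj + self.vk if self.op == "add" else self.vj * self.vk
        return self.dest, result


mul = ReservationStation(op="mul", dest=3, vj=6, vk=7)
add = ReservationStation(op="add", dest=4, vj=1, qk=3)  # waits on ROB entry 3

tag, value = mul.execute()       # broadcast carries the Dest field value
add.snoop(tag, value)            # the waiting station matches on the ROB tag
add_tag, add_value = add.execute()
```

Because the broadcast is tagged with the Dest field rather than a reservation station number, the same tag can be matched by the ROB entry that stores the result and by any station whose Qj or Qk names that entry.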

slide 15/16

The reorder buffer and safe speculation

The key point about the ROB is that it can collect a large number of results without knowing whether those results should really be written to registers or memory. Consider a branch instruction that is mispredicted as taken.

◮ What happens to all the instructions that got into the ROB before the branch?

◮ What happens to the branch target instruction, the successor of the branch target instruction, etc., which got into the ROB after the branch?

The bad effect of the above scenario is a waste of time and energy. What are the important bad effects that were prevented?
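One way to think about the questions above is the small model below. It is a sketch under simplifying assumptions, not a description of any particular processor: every entry is assumed to already hold its result, entries commit strictly from the head of the ROB, and the names `Entry`, `run_commit`, and `mispredicted` are hypothetical.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Entry:
    name: str
    dest: str = ""               # register written at commit; "" for a branch
    value: int = 0
    mispredicted: bool = False


def run_commit(rob, regs):
    """Commit from the head in program order; flush on a mispredicted branch."""
    flushed = []
    while rob:
        entry = rob.popleft()
        if entry.mispredicted:
            # Everything younger than the branch is discarded, uncommitted.
            flushed = [e.name for e in rob]
            rob.clear()
            break
        if entry.dest:
            regs[entry.dest] = entry.value
    return flushed


regs = {"R1": 0, "R2": 0}
rob = deque([
    Entry("older add", dest="R1", value=5),
    Entry("branch", mispredicted=True),
    Entry("target instr", dest="R2", value=99),
    Entry("target successor", dest="R1", value=77),
])
flushed = run_commit(rob, regs)
```

In this model the instruction ahead of the branch commits normally, while the two wrong-path results are dropped before they can reach `R1` or `R2`. The work spent computing them is wasted, but no register or memory location ever holds a wrong-path value.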

slide 16/16

More Topics for Today

As time permits . . .

◮ multiple issue of instructions

◮ limitations of ILP

slide 16/16

Upcoming Topics

◮ Processes and threads.

◮ Multi-core processor circuits and their caches.

◮ Multi-core support for processes and threads.

Related reading in Hennessy & Patterson: Sections 5.1–5.2