Cognitive Modeling

Lecture 12: Connectionist Networks: Multi-layer Networks; Backpropagation

Frank Keller, School of Informatics, University of Edinburgh

keller@inf.ed.ac.uk

Cognitive Modeling: Multi-layer Networks; Backpropagation – p.1

Overview

Multi-layer networks:

limits of single-layer networks; multi-layer networks as a solution to XOR; properties of multi-layer networks; training multi-layer networks with backpropagation.

Reading: McLeod et al. (1998, Ch. 5).


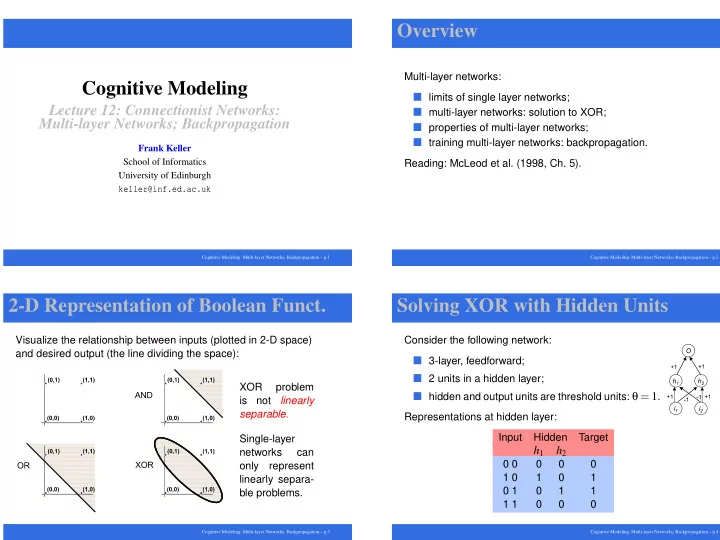

2-D Representation of Boolean Functions

Visualize the relationship between the inputs (plotted as points in 2-D space) and the desired output (a line dividing the space into inputs that map to 1 and inputs that map to 0): the XOR problem is not linearly separable. Single-layer networks can only represent linearly separable problems.
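Why no single threshold unit can compute XOR can be shown in a few lines. This sketch assumes a unit with weights $w_1, w_2$ and a free threshold $\theta$ that outputs 1 when $w_1 x_1 + w_2 x_2 \ge \theta$ and 0 otherwise (the same argument works with a strict $>$). XOR would require all four of the following to hold:

$$
\begin{aligned}
(0,0) \mapsto 0 &: \quad 0 < \theta \\
(0,1) \mapsto 1 &: \quad w_2 \ge \theta \\
(1,0) \mapsto 1 &: \quad w_1 \ge \theta \\
(1,1) \mapsto 0 &: \quad w_1 + w_2 < \theta
\end{aligned}
$$

Adding the second and third inequalities gives $w_1 + w_2 \ge 2\theta > \theta$ (since $\theta > 0$ by the first), which contradicts the fourth. So no choice of weights and threshold works, which is the algebraic counterpart of saying that no single line separates the XOR points.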


Solving XOR with Hidden Units

Consider the following network:

a 3-layer feedforward network with 2 units in the hidden layer; hidden and output units are threshold units with threshold θ = 1.

Representations at the hidden layer (columns: Input; Hidden, with units h1 and h2; Target):

0 0 1 0 1 1 0 1 1 1 1 1
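The network figure with its actual weights did not survive, so the sketch below uses hypothetical weights chosen to illustrate the idea: h1 acts like OR, h2 acts like AND, and the output unit fires when h1 is on and h2 is off. It keeps θ = 1 for hidden and output units as stated above, and assumes a unit fires when its net input is greater than or equal to θ. The names `W_HIDDEN`, `W_OUTPUT`, `threshold`, and `forward` are illustrative, not from the lecture.

```python
# Hypothetical weights: the lecture's own weights are not recoverable here.
THETA = 1.0  # threshold for hidden and output units, as stated on the slide

# Input-to-hidden weights (assumed): h1 behaves like OR, h2 like AND.
W_HIDDEN = [
    [1.0, 1.0],  # weights from (x1, x2) to h1
    [0.5, 0.5],  # weights from (x1, x2) to h2
]
# Hidden-to-output weights (assumed): output fires for "h1 and not h2".
W_OUTPUT = [1.0, -1.0]


def threshold(net, theta=THETA):
    """Threshold unit: 1 if the net input reaches theta, else 0 (assumed >= convention)."""
    return 1 if net >= theta else 0


def forward(x):
    """Propagate a binary input pair; return (hidden activations, output)."""
    hidden = [threshold(sum(w * xi for w, xi in zip(weights, x))) for weights in W_HIDDEN]
    output = threshold(sum(v * h for v, h in zip(W_OUTPUT, hidden)))
    return hidden, output


# Print the hidden representation and output for every input pattern.
for x in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    hidden, output = forward(x)
    print(x, hidden, output)
```

With these weights the hidden layer maps (0,0) to [0, 0], both (0,1) and (1,0) to [1, 0], and (1,1) to [1, 1]. In this hidden space the output unit only has to separate [1, 0] from [0, 0] and [1, 1], which a single line can do; the hidden units have recoded XOR into a linearly separable problem.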
