1

1/25/2005 1

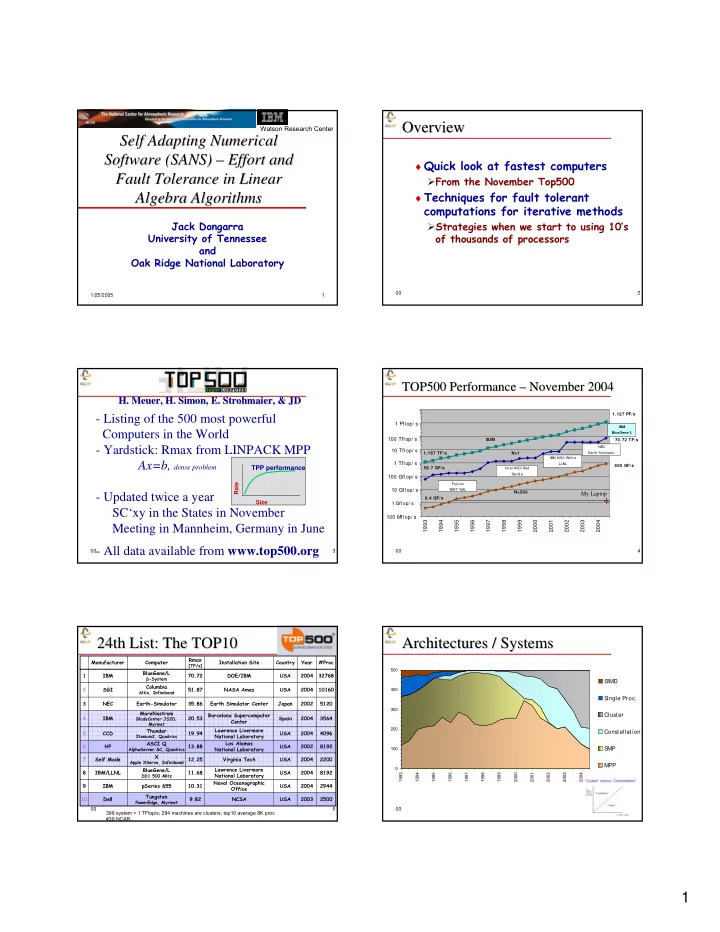

Self Adapting Numerical Self Adapting Numerical Software (SANS) Software (SANS) – – Effort and Effort and Fault Tolerance in Linear Fault Tolerance in Linear Algebra Algorithms Algebra Algorithms

Jack Dongarra University of Tennessee and Oak Ridge National Laboratory

Watson Research Center

00 2

Overview Overview

♦ Quick look at fastest computers From the November Top500 ♦ Techniques for fault tolerant

computations for iterative methods

Strategies when we start to using 10’s

- f thousands of processors

00 3

- H. Meuer, H. Simon, E. Strohmaier, & JD

- H. Meuer, H. Simon, E. Strohmaier, & JD

- Listing of the 500 most powerful

Computers in the World

- Yardstick: Rmax from LINPACK MPP

Ax=b, dense problem

- Updated twice a year

SC‘xy in the States in November Meeting in Mannheim, Germany in June

- All data available from www.top500.org

Size Rate

TPP performance

00 4

1.127 PF/s 1.167 TF/s 59.7 GF/s 70.72 TF/s 0.4 GF/s 850 GF/s

1993 1994 1995 1996 1997 1998 1999 2000 2001 2002 2003 2004

Fuj itsu 'NWT' NAL NEC Earth Simulator Intel ASCI Red Sandia IBM ASCI White LLNL

N=1 N=500 SUM 1 Gflop/ s

1 Tflop/ s 100 Mflop/ s 100 Gflop/ s 100 Tflop/ s 10 Gflop/ s 10 Tflop/ s 1 Pflop/ s

IBM BlueGene/L

My Laptop

TOP500 Performance TOP500 Performance – – November 2004 November 2004

00 5

24th List: The TOP10 24th List: The TOP10

2500 2003 USA NCSA 9.82 Tungsten

PowerEdge, Myrinet

Dell 10 2944 2004 USA Naval Oceanographic Office 10.31 pSeries 655 IBM 9 8192 2004 USA Lawrence Livermore National Laboratory 11.68 BlueGene/L

DD1 500 MHz

IBM/LLNL 8 2200 2004 USA Virginia Tech 12.25 X

Apple XServe, Infiniband

Self Made 7 8192 2002 USA Los Alamos National Laboratory 13.88 ASCI Q

AlphaServer SC, Quadrics

HP 6 4096 2004 USA Lawrence Livermore National Laboratory 19.94 Thunder

Itanium2, Quadrics

CCD 5 3564 2004 Spain Barcelona Supercomputer Center 20.53 MareNostrum

BladeCenter JS20, Myrinet

IBM 4 5120 2002 Japan Earth Simulator Center 35.86 Earth-Simulator NEC 3 10160 2004 USA NASA Ames 51.87 Columbia

Altix, Infiniband

SGI 2 32768 2004 USA DOE/IBM 70.72 BlueGene/L

β-System

IBM 1 #Proc Year Country Installation Site Rmax

[TF/s]

Computer Manufacturer 399 system > 1 TFlop/s; 294 machines are clusters, top10 average 8K proc #39 NCAR 00 6

Architectures / Systems Architectures / Systems

100 200 300 400 500 1993 1994 1995 1996 1997 1998 1999 2000 2001 2002 2003 2004

S IMD S ingle Proc. Cluster Constellation S MP MPP