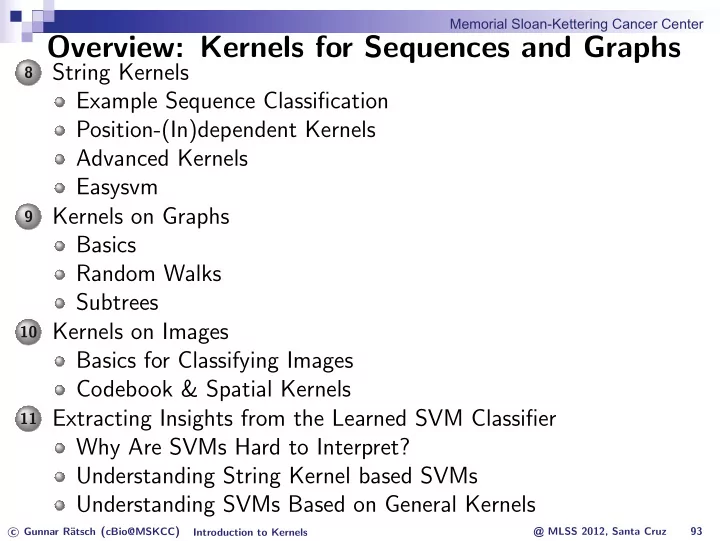

Overview: Kernels for Sequences and Graphs

8 String Kernels

Example Sequence Classification Position-(In)dependent Kernels Advanced Kernels Easysvm

9 Kernels on Graphs

Basics Random Walks Subtrees

10 Kernels on Images

Basics for Classifying Images Codebook & Spatial Kernels

11 Extracting Insights from the Learned SVM Classifier

Why Are SVMs Hard to Interpret? Understanding String Kernel based SVMs Understanding SVMs Based on General Kernels

© Gunnar Rätsch (cBio@MSKCC) Introduction to Kernels @ MLSS 2012, Santa Cruz

Memorial Sloan-Kettering Cancer Center