Hebbian Learning, Principal Component Analysis, and Independent Component Analysis

15-486/782: Artificial Neural Networks Fall 2006 (slides by Kornel Laskowski based on earlier slides by Dave Touretzky)

Hebbian Learning

Donald Hebb wrote in 1949: When an axon in cell A is near enough to excite cell B and repeat- edly and persistently takes part in firing it, some growth process

- r metabolic change takes place in one or both cells such that A’s

efficiency in firing B is increased. Today this growth process is known as Hebbian learning.

2

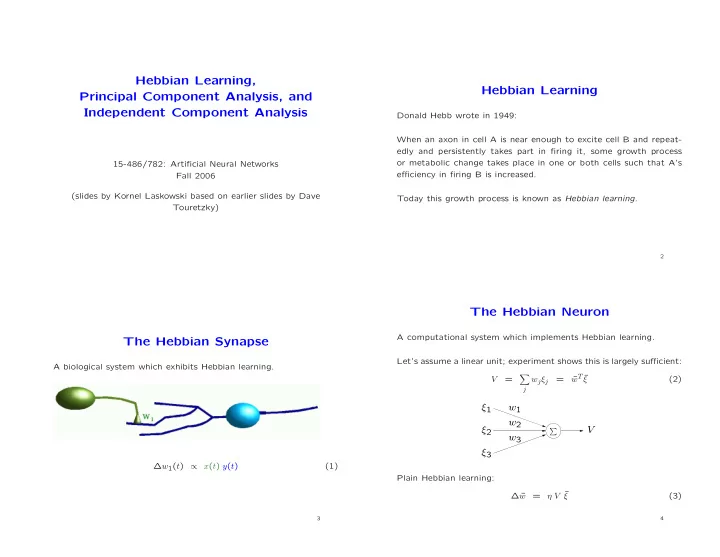

The Hebbian Synapse

A biological system which exhibits Hebbian learning. ∆w1(t) ∝ x(t) y(t) (1)

3

The Hebbian Neuron

A computational system which implements Hebbian learning. Let’s assume a linear unit; experiment shows this is largely sufficient: V =

- j

wjξj = ¯ wT ¯ ξ (2)

ξ1 ξ2 ξ3 V

- w1

w2 w3

Plain Hebbian learning: ∆ ¯ w = η V ¯ ξ (3)

4