On Computing the Total Variation Distance of Hidden Markov Models

Stefan Kiefer, University of Oxford, UK
ICALP 2018, Prague, 10 July 2018

Hidden Markov Models = Labelled Markov Chains

[Figure: two LMCs. The first has a single state q1 with outgoing labels 1/2 a, 1/4 b, 1/4 $. The second has states q2 (labels 1/3 a, 1/3 a, 1/3 b) and q3 (labels 1/2 a, 1/2 $).]

\[ \Pr_1(aa) = \tfrac12 \cdot \tfrac12 \cdot \tfrac14 \qquad \Pr_2(aa) = \tfrac13 \cdot \tfrac13 \cdot \tfrac12 + \tfrac13 \cdot \tfrac12 \cdot \tfrac12 \]

Each Labelled Markov Chain (LMC) generates a probability distribution over \(\Sigma^*\).
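The two word probabilities on this slide can be reproduced with one matrix-vector product per letter. The sketch below is only an illustration: the dictionary encoding (`init`, `trans`, `end`) is a hypothetical choice, and the edge targets (for instance that the `b` edge of q2 is a self-loop) are assumptions read off the figure labels.

```python
from fractions import Fraction as F

# Hypothetical encoding (not from the slides): init[q] is the initial
# probability of state q, trans[sym][q][r] the probability of emitting sym
# while moving from q to r, end[q] the probability of emitting $ in q.
LMC1 = {
    "init": [F(1)],
    "trans": {"a": [[F(1, 2)]], "b": [[F(1, 4)]]},
    "end": [F(1, 4)],
}
# Assumed topology: q2 loops on a and b and moves to q3 on a; q3 loops on a.
LMC2 = {
    "init": [F(1), F(0)],
    "trans": {
        "a": [[F(1, 3), F(1, 3)], [F(0), F(1, 2)]],
        "b": [[F(1, 3), F(0)], [F(0), F(0)]],
    },
    "end": [F(0), F(1, 2)],
}

def word_probability(lmc, word):
    """Forward pass: push the state vector through one matrix per letter."""
    vec = lmc["init"]
    for sym in word:
        m = lmc["trans"][sym]
        vec = [sum(vec[q] * m[q][r] for q in range(len(vec)))
               for r in range(len(m[0]))]
    # Finish by emitting the end marker $.
    return sum(v * e for v, e in zip(vec, lmc["end"]))

print(word_probability(LMC1, "aa"))  # 1/16
print(word_probability(LMC2, "aa"))  # 5/36
```

Exact fractions are used so the results can be compared directly with the products on the slide.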

Hidden Markov Models = Labelled Markov Chains

Very widely used:
- speech recognition
- gesture recognition
- signal processing
- climate modelling
- computational biology: DNA modelling, biological sequence analysis, structure prediction
- probabilistic model checking: see tools like Prism or Storm

Hidden Markov Models = Labelled Markov Chains

[Figure: the two example LMCs over states q1, q2, q3, repeated from slide 2.]

Each LMC generates a probability distribution over \(\Sigma^*\).

Equivalence problem: are the two distributions equal?
Solvable in \(O(|Q|^3 |\Sigma|)\) time with linear algebra [Schützenberger’61].
Direct applications in the verification of anonymity properties.
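One common way to phrase the linear-algebra approach is a worklist that grows a basis of the vectors reachable from the difference of the two initial distributions. The sketch below follows that idea; it is not claimed to be the exact procedure of the cited work, and the function names and the matrix encoding are assumptions. Both LMCs are assumed to use the same alphabet.

```python
from fractions import Fraction as F

def reduce(vec, basis):
    """Subtract multiples of the echelon-form basis vectors from vec."""
    vec = list(vec)
    for pivot, b in basis:
        if vec[pivot] != 0:
            c = vec[pivot] / b[pivot]
            vec = [x - c * y for x, y in zip(vec, b)]
    return vec

def equivalent(init1, trans1, end1, init2, trans2, end2):
    """Return True iff both LMCs give every word the same probability."""
    n1, n2 = len(init1), len(init2)
    # Work on the disjoint union of the state sets, starting from init1 - init2.
    start = list(init1) + [-x for x in init2]
    end = list(end1) + list(end2)

    def step(vec, sym):
        left = [sum(vec[q] * trans1[sym][q][r] for q in range(n1))
                for r in range(n1)]
        right = [sum(vec[n1 + q] * trans2[sym][q][r] for q in range(n2))
                 for r in range(n2)]
        return left + right

    basis, worklist = [], [start]
    while worklist:
        vec = reduce(worklist.pop(), basis)
        if all(x == 0 for x in vec):
            continue  # already in the span of the basis
        if sum(x * e for x, e in zip(vec, end)) != 0:
            return False  # some word separates the two distributions
        pivot = next(i for i, x in enumerate(vec) if x != 0)
        basis.append((pivot, vec))
        worklist.extend(step(vec, sym) for sym in trans1)
    return True

# The two slide LMCs (edge targets assumed from the figure labels).
T1 = {"a": [[F(1, 2)]], "b": [[F(1, 4)]]}
T2 = {"a": [[F(1, 3), F(1, 3)], [F(0), F(1, 2)]],
      "b": [[F(1, 3), F(0)], [F(0), F(0)]]}
print(equivalent([F(1)], T1, [F(1, 4)], [F(1), F(0)], T2, [F(0), F(1, 2)]))  # False
```

The basis never holds more than |Q| vectors, where Q is the combined state set, so the loop terminates after polynomially many reductions.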

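Total Variation Distance in Football

[Outcome icons not recoverable; the four outcomes are written o1 to o4.]

            o1    o2    o3    o4
Pr_James    0.1   0.1   0.8   0.0
Pr_Stefan   0.3   0.4   0.2   0.1

\(\Pr_{\mathrm{Stefan}}(\{o_1\}) - \Pr_{\mathrm{James}}(\{o_1\}) = 0.2\)
\(\Pr_{\mathrm{Stefan}}(\{o_1, o_2\}) - \Pr_{\mathrm{James}}(\{o_1, o_2\}) = 0.5\)
\(\Pr_{\mathrm{Stefan}}(\{o_1, o_2, o_4\}) - \Pr_{\mathrm{James}}(\{o_1, o_2, o_4\}) = 0.6\)
\(\Pr_{\mathrm{Stefan}}(\{o_3\}) - \Pr_{\mathrm{James}}(\{o_3\}) = -0.6\)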
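For a distribution over four outcomes the distance can be checked by brute force. The sketch below maximises over all 16 events and compares the result with half the L1 distance; the function names are illustrative, and outcomes are identified by position only.

```python
from fractions import Fraction as F
from itertools import combinations

pr_james = [F(1, 10), F(1, 10), F(8, 10), F(0)]
pr_stefan = [F(3, 10), F(4, 10), F(2, 10), F(1, 10)]

def tv_by_events(p, q):
    """Maximise |p(W) - q(W)| over all events W (exponential in len(p))."""
    n = len(p)
    return max(
        abs(sum(p[i] - q[i] for i in w))
        for k in range(n + 1)
        for w in combinations(range(n), k)
    )

def tv_by_half_l1(p, q):
    """Half the sum of pointwise absolute differences."""
    return sum(abs(x - y) for x, y in zip(p, q)) / 2

print(tv_by_events(pr_stefan, pr_james))   # 3/5
print(tv_by_half_l1(pr_stefan, pr_james))  # 3/5
```

Both computations give 0.6, matching the largest difference listed on the slide.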

Total Variation Distance for Words

Let \(\Pr_1, \Pr_2\) be two probability distributions over \(\Sigma^*\).

\[ d(\Pr_1, \Pr_2) := \max_{W \subseteq \Sigma^*} \bigl| \Pr_1(W) - \Pr_2(W) \bigr| \]

The maximum is attained by \(W_1 := \{ w \in \Sigma^* : \Pr_1(w) \ge \Pr_2(w) \}\).

As in the football case:

\[ d(\Pr_1, \Pr_2) = \frac12 \sum_{w \in \Sigma^*} \bigl| \Pr_1(w) - \Pr_2(w) \bigr| \]

By a simple calculation:

\[ 1 + d(\Pr_1, \Pr_2) = \Pr_1(W_1) + \Pr_2(W_2) \]

for \(W_2 := \{ w \in \Sigma^* : \Pr_1(w) < \Pr_2(w) \}\).
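The "simple calculation" is not spelled out on the slide. One way to fill it in, using only that \(W_1\) and \(W_2\) partition \(\Sigma^*\) and that the maximum is attained by \(W_1\), is:

```latex
\begin{align*}
d(\Pr_1, \Pr_2)
  &= \Pr_1(W_1) - \Pr_2(W_1)
     && \text{(maximum attained by } W_1\text{)} \\
  &= \Pr_1(W_1) - \bigl(1 - \Pr_2(W_2)\bigr)
     && \text{(} W_2 = \Sigma^* \setminus W_1 \text{)} \\
  &= \Pr_1(W_1) + \Pr_2(W_2) - 1 .
\end{align*}
```

The same partition gives the half-sum formula: the terms with \(w \in W_1\) sum to \(\Pr_1(W_1) - \Pr_2(W_1) = d\), and the terms with \(w \in W_2\) sum to \(\Pr_2(W_2) - \Pr_1(W_2) = d\), so the full sum of absolute differences is \(2d\).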

Verification View

[Figure: the two example LMCs over states q1, q2, q3, repeated from slide 2.]

\[ \forall \varphi : \; \Pr_2(\varphi) \in [\Pr_1(\varphi) - d, \; \Pr_1(\varphi) + d] \]

Small distance saves verification work. Especially for parameterised models.

Irrational Distances

[Figure: two single-state LMCs. State q1 has labels 1/2 a, 1/4 b, 1/4 $; state q2 has labels 1/4 a, 1/2 b, 1/4 $.]

\[ d = \frac{\sqrt{2}}{4} \approx 0.35 \]

Given two LMCs and a threshold \(\tau \in [0, 1]\):
- Is \(d > \tau\)? (strict distance-threshold problem)
- Is \(d \ge \tau\)? (non-strict distance-threshold problem)

NP-hard: [Lyngsø,Pedersen’02], [Cortes,Mohri,Rastogi’07], [Chen,K.’14]
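The value \(\sqrt{2}/4\) can be checked numerically. The sketch below assumes that both LMCs consist of a single state with self-loops, so that the probability of a word depends only on how many a's and b's it contains. It sums half the absolute differences over all words up to a length bound; the longer words it leaves out carry total probability \((3/4)^{n+1}\) under either LMC, which is negligible for n = 200.

```python
import math

def truncated_distance(max_len):
    """Half the L1 distance restricted to words with at most max_len letters."""
    total = 0.0
    for n in range(max_len + 1):
        for i in range(n + 1):            # i letters a, j = n - i letters b
            j = n - i
            p1 = 0.5 ** i * 0.25 ** j * 0.25   # word probability in the first LMC
            p2 = 0.25 ** i * 0.5 ** j * 0.25   # word probability in the second LMC
            # math.comb(n, i) words share these letter counts.
            total += math.comb(n, i) * abs(p1 - p2)
    return total / 2

print(truncated_distance(200), math.sqrt(2) / 4)
```

This only works because the example is so symmetric; in general, words cannot be grouped by letter counts.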

Decidability of the Distance-Threshold Problem

Theorem (K.’18). The strict distance-threshold problem is undecidable.
Proof idea: reduction from emptiness of probabilistic automata.

What about the non-strict distance-threshold problem? It is sqrt-sum-hard [Chen,K.’14] and PP-hard [K.’18].

The "strict vs. non-strict" decidability status is similar to that of the joint spectral radius of a set of matrices.

Acyclic LMCs

[Figure: two acyclic LMCs with initial states q1 and q2; edges carry labels such as 1/2 a, 1/2 b, a, b and $.]

Theorem (K.’18). For acyclic LMCs:
- Computing the distance is #P-complete.
- Approximating the distance is #P-complete.
- The strict and non-strict distance-threshold problems are PP-complete.

Reduction from #NFA: given an NFA \(A\) and \(n \in \mathbb{N}\) in unary, compute \(|L(A) \cap \Sigma^n|\).
Probably simpler than previous NP-hardness reductions.
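To make the #NFA problem concrete, here is a small sketch that counts accepted words of length n by tracking, for every reachable set of NFA states, how many words lead to exactly that set. It takes exponential time in the worst case, as one would expect for a #P-hard problem. The NFA encoding and the example automaton are assumptions made for illustration.

```python
from collections import Counter

def count_accepted(delta, initial, accepting, alphabet, n):
    """Count the words of length n accepted by an NFA.

    delta maps (state, symbol) to an iterable of successor states.
    """
    # counts[S] = number of words of the current length whose set of
    # reachable states is exactly S (on-the-fly subset construction).
    counts = Counter({frozenset(initial): 1})
    for _ in range(n):
        nxt = Counter()
        for states, c in counts.items():
            for sym in alphabet:
                succ = frozenset(
                    r for q in states for r in delta.get((q, sym), ())
                )
                nxt[succ] += c
        counts = nxt
    return sum(c for states, c in counts.items() if states & accepting)

# Hypothetical NFA over {a, b} accepting the words that contain an a.
delta = {(0, "a"): {0, 1}, (0, "b"): {0}, (1, "a"): {1}, (1, "b"): {1}}
print(count_accepted(delta, {0}, {1}, "ab", 3))  # 7
```

Of the 8 words of length 3, only bbb contains no a, so the count is 7.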

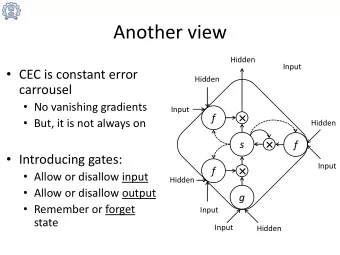

Approximation

Theorem (K.’18). Given two LMCs and an error bound \(\varepsilon > 0\) in binary, one can compute in PSPACE a number \(x \in [d - \varepsilon, d + \varepsilon]\).

\[ 1 + d(\Pr_1, \Pr_2) = \Pr_1(W_1) + \Pr_2(W_2) \]

where
\(W_1 = \{ w \in \Sigma^* : \Pr_1(w) \ge \Pr_2(w) \}\)
\(W_2 = \{ w \in \Sigma^* : \Pr_1(w) < \Pr_2(w) \}\)

[Figure: the two acyclic LMCs from slide 10.]

- In the cyclic case: we have to sample exponentially long words.
- Floating-point arithmetic computes \(\Pr_1(w), \Pr_2(w)\) up to small relative error.
- Use Ladner’s result on counting in polynomial space.
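The identity for \(1 + d\) suggests a simple randomised estimator: sample words from \(\Pr_1\) and count how often they land in \(W_1\), sample words from \(\Pr_2\) and count how often they land in \(W_2\). The sketch below is a plain Monte Carlo illustration of that identity, not the PSPACE procedure of the theorem. It uses two hypothetical single-state LMCs given by their letter probabilities, with `$` ending the word.

```python
import random

# Hypothetical single-state LMCs: probability of each letter; '$' ends the word.
P1 = {"a": 0.5, "b": 0.25, "$": 0.25}
P2 = {"a": 0.25, "b": 0.5, "$": 0.25}

def sample_word(p, rng):
    """Draw letters until the end marker is drawn."""
    word = []
    while True:
        sym = rng.choices(list(p), weights=list(p.values()))[0]
        if sym == "$":
            return word
        word.append(sym)

def probability(p, word):
    """Probability that the single-state LMC p generates exactly this word."""
    prob = p["$"]
    for sym in word:
        prob *= p[sym]
    return prob

def estimate_distance(p1, p2, samples, seed=0):
    """Estimate d as freq_1(W1) + freq_2(W2) - 1."""
    rng = random.Random(seed)
    in_w1 = sum(
        probability(p1, w) >= probability(p2, w)
        for w in (sample_word(p1, rng) for _ in range(samples))
    )
    in_w2 = sum(
        probability(p1, w) < probability(p2, w)
        for w in (sample_word(p2, rng) for _ in range(samples))
    )
    return in_w1 / samples + in_w2 / samples - 1

print(estimate_distance(P1, P2, 20000))
```

For these two chains the exact distance is \(\sqrt{2}/4 \approx 0.35\), so the printed estimate should be close to that value. Sampled words are short here because each step stops with probability 1/4; this is the easy situation, in contrast to the exponentially long words mentioned for the cyclic case.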

Infinite-Word LMCs

[Figure: two infinite-word LMCs with initial states q1 and q2; edges carry the labels 1/3 a, 2/3 a, 1/3 b and 2/3 b.]

E.g., if \(W = \{ aw : w \in \Sigma^\omega \}\) then \(\Pr_1(W) = \tfrac13\) and \(\Pr_2(W) = \tfrac23\).

\[ d(\Pr_1, \Pr_2) := \max_{W \subseteq \Sigma^\omega} \bigl| \Pr_1(W) - \Pr_2(W) \bigr| = \max_{W \subseteq \Sigma^\omega} \bigl( \Pr_1(W) - \Pr_2(W) \bigr) \]

Theorem (Chen,K.’14). One can decide in polynomial time if \(d(\Pr_1, \Pr_2) = 1\). One can also decide in polynomial time if \(\Pr_1 = \Pr_2\).

Finite-word LMCs are a special case of infinite-word LMCs.

Summary

Theorem (main results again).
- The strict distance-threshold problem is undecidable.
- Approximating the distance is #P-hard and in PSPACE.

Open problems:
- decidability of the non-strict distance-threshold problem
- complexity of approximating the distance of infinite-word LMCs
- non-hidden/deterministic LMCs
