SLIDE 1

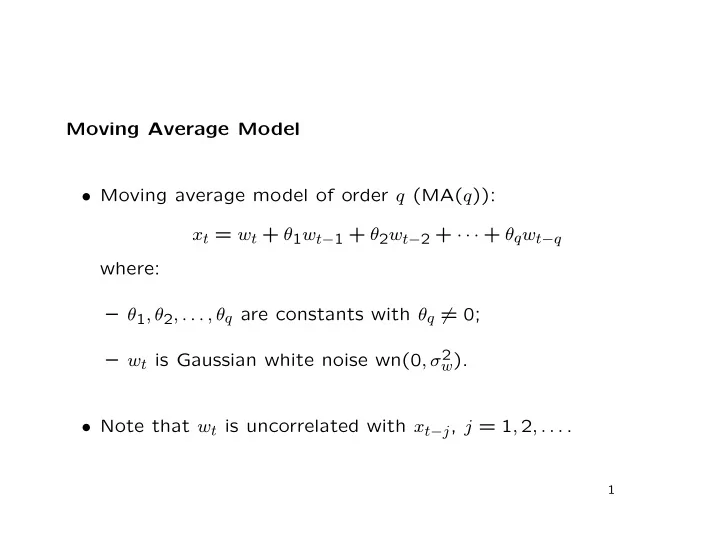

Moving Average Model

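- Moving average model of order $q$, MA($q$):

  $$x_t = w_t + \theta_1 w_{t-1} + \theta_2 w_{t-2} + \cdots + \theta_q w_{t-q}$$

  where:
  - $\theta_1, \theta_2, \ldots, \theta_q$ are constants with $\theta_q \neq 0$;
  - $w_t$ is Gaussian white noise, $w_t \sim \mathrm{wn}(0, \sigma_w^2)$.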

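- Note that $w_t$ is uncorrelated with $x_{t-j}$ for $j = 1, 2, \ldots$, because $x_{t-j}$ depends only on $w_{t-j}, w_{t-j-1}, \ldots, w_{t-j-q}$, all of which precede $w_t$.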
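
As a quick numerical illustration of the definition and the note above, the following minimal sketch simulates an MA(2) series and estimates the correlation between the noise term and lagged values of the series. The coefficients `theta = [0.6, -0.3]`, the sample size, the seed, and the helper names `simulate_ma` and `sample_corr` are all illustrative assumptions, not values from the slide.

```python
import math
import random


def simulate_ma(theta, n, sigma=1.0, seed=0):
    """Simulate x_t = w_t + theta_1 w_{t-1} + ... + theta_q w_{t-q}.

    Returns (x, w), where w[i] is the noise term that enters x[i] at lag 0.
    """
    rng = random.Random(seed)
    q = len(theta)
    # Draw n + q noise values so that every x_t has a full history of q lags.
    noise = [rng.gauss(0.0, sigma) for _ in range(n + q)]
    x = [
        noise[t] + sum(theta[k] * noise[t - 1 - k] for k in range(q))
        for t in range(q, n + q)
    ]
    return x, noise[q:]


def sample_corr(a, b):
    """Sample (Pearson) correlation of two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((u - mean_a) * (v - mean_b) for u, v in zip(a, b))
    var_a = sum((u - mean_a) ** 2 for u in a)
    var_b = sum((v - mean_b) ** 2 for v in b)
    return cov / math.sqrt(var_a * var_b)


theta = [0.6, -0.3]  # assumed example coefficients (theta_q != 0)
x, w = simulate_ma(theta, n=20000)

# Lag 0: w_t is a component of x_t, so the correlation is clearly nonzero.
corr_lag0 = sample_corr(w, x)

# Lags j >= 1: pair w_t with x_{t-j}; these should be close to zero.
corr_lags = {j: sample_corr(w[j:], x[:-j]) for j in (1, 2, 3)}

print("corr(w_t, x_t)     =", round(corr_lag0, 3))
for j, r in corr_lags.items():
    print(f"corr(w_t, x_(t-{j})) =", round(r, 3))
```

For these assumed coefficients the lag-0 correlation should be near $1/\sqrt{1 + \theta_1^2 + \theta_2^2} \approx 0.83$, while the estimates at lags $j \geq 1$ should differ from zero only by sampling error.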