
Lecture 9: LVCSR Search. Michael Picheny, Bhuvana Ramabhadran, Stanley F. Chen, Markus Nussbaum-Thom. Watson Group, IBM T.J. Watson Research Center, Yorktown Heights, New York, USA. {picheny,bhuvana,stanchen,nussbaum}@us.ibm.com. 23 March 2016

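A Simple Case. [Figure: acceptor A with states 1, 2, 3 and arcs a, b; transducer T with states 1, 2, 3 and arcs a:A, b:B; composition A ◦ T with states (1,1), (2,2), (3,3) and arcs A, B.] Intuition: trace through A, T simultaneously. 45 / 139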

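Another Simple Case. [Figure: acceptor A with states 1 to 4 and arcs a, b, d; single-state transducer T with arcs a:A, b:B, c:C, d:D; composition A ◦ T with states (1,1), (2,1), (3,1), (4,1) and arcs A, B, D.] Intuition: trace through A, T simultaneously. 46 / 139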

Composition: States. [Figure: the same A, T, and A ◦ T as in "Another Simple Case".] What is the possible set of states in the result? The cross product of the states in the inputs, i.e., pairs $(s, t)$ with $s$ a state of A and $t$ a state of T. 47 / 139

Composition: Arcs. [Figure: the same A, T, and A ◦ T as in "Another Simple Case".] Create an arc from $(s_1, t_1)$ to $(s_2, t_2)$ with label $o$ iff there is an arc from $s_1$ to $s_2$ in A with label $i$ and an arc from $t_1$ to $t_2$ in T with input $i$ and output $o$. 48 / 139

The Composition Algorithm. For every state $s \in A$, $t \in T$, create state $(s, t) \in A \circ T$. Create an arc from $(s_1, t_1)$ to $(s_2, t_2)$ with label $o$ iff there is an arc from $s_1$ to $s_2$ in A with label $i$ and an arc from $t_1$ to $t_2$ in T with input $i$ and output $o$. $(s, t)$ is initial iff $s$ and $t$ are both initial; similarly for final states. What is the time complexity? 49 / 139
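The algorithm on this slide can be sketched in a few lines of Python. This is a minimal illustration, not the lecture's code: it assumes ε-free, unweighted machines, a hypothetical tuple encoding for arcs, and it only creates state pairs reachable from the initial pair (the optimization mentioned in the recap). The example input assumes A is the chain 1 -a-> 2 -b-> 3 -d-> 4, as the "Another Simple Case" figure appears to show.

```python
from collections import deque

def compose(a_arcs, a_init, a_finals, t_arcs, t_init, t_finals):
    """Epsilon-free composition of an acceptor A with a transducer T.

    a_arcs: list of (src, label, dst)               (hypothetical encoding)
    t_arcs: list of (src, in_label, out_label, dst)
    Returns (arcs, init, finals); result states are (s, t) pairs.
    """
    a_out = {}
    for s, i, s2 in a_arcs:
        a_out.setdefault(s, []).append((i, s2))
    t_out = {}
    for t, i, o, t2 in t_arcs:
        t_out.setdefault((t, i), []).append((o, t2))

    init = (a_init, t_init)
    arcs, finals = [], set()
    seen, queue = {init}, deque([init])
    while queue:                      # expand only reachable state pairs
        s, t = queue.popleft()
        if s in a_finals and t in t_finals:
            finals.add((s, t))
        for i, s2 in a_out.get(s, []):
            # T must have an arc whose input label matches A's label i.
            for o, t2 in t_out.get((t, i), []):
                nxt = (s2, t2)
                arcs.append(((s, t), o, nxt))
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
    return arcs, init, finals

# Assumed topology for A; T is a one-state machine that uppercases labels.
A = [(1, "a", 2), (2, "b", 3), (3, "d", 4)]
T = [(1, x, x.upper(), 1) for x in "abcd"]
arcs, init, finals = compose(A, 1, {4}, T, 1, {1})
for arc in arcs:
    print(arc)
```

Because unreachable pairs are never created, the work is bounded by the number of matching arc pairs actually visited rather than by the full cross product of states.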

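Example. [Figure: acceptor A with states 1, 2, 3 and arcs a, b; transducer T with states 1, 2, 3 and arcs a:A, b:B; A ◦ T drawn with all nine state pairs (1,1) through (3,3) and arcs A, B.] 50 / 139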

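Another Example. [Figure: acceptor A with states 1, 2, 3 and arcs labeled a and b; transducer T with states 1, 2 and arcs a:A, b:B, a:a, b:b; A ◦ T with states (1,1), (2,2), (3,1), (1,2), (2,1), (3,2) and arcs labeled A, B, a, b.] 51 / 139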

Composition and ε-Transitions. Basic idea: can take an ε-transition in one FSM without moving in the other FSM. Tricky to do exactly right. Do readings if you care: (Pereira, Riley, 1997). [Figure: A with states 1, 2, 3 and arcs A, <epsilon>, B; T with states 1, 2, 3 and arcs A:A, <epsilon>:B, B:B; A ◦ T drawn with all nine state pairs (1,1) through (3,3) and arcs labeled A, B, and eps.] 52 / 139

Recap Composition is easy! Composition is fast! Worst case: quadratic in states. Optimization: only expand reachable state pairs. 53 / 139

Where Are We? 1. Introduction to FSA’s, FST’s, and Composition. 2. What Can Composition Do? 3. How To Compute Composition. 4. Composition and Graph Expansion. 5. Weighted FSM’s. 54 / 139

Building the One Big HMM Can we do this with composition? Start with n -gram LM expressed as HMM. Repeatedly expand to lower-level HMM’s. 55 / 139

A View of Graph Expansion. Design some finite-state machines. $L$ = language model FSA. $T_{\mathrm{LM}\to\mathrm{CI}}$ = FST mapping to CI phone sequences. $T_{\mathrm{CI}\to\mathrm{CD}}$ = FST mapping to CD phone sequences. $T_{\mathrm{CD}\to\mathrm{GMM}}$ = FST mapping to GMM sequences. Compute final decoding graph via composition: $L \circ T_{\mathrm{LM}\to\mathrm{CI}} \circ T_{\mathrm{CI}\to\mathrm{CD}} \circ T_{\mathrm{CD}\to\mathrm{GMM}}$. How to design transducers? 56 / 139

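Example: Mapping Words To Phones. Lexicon: THE → DH AH; THE → DH IY; DOG → D AO G. [Figure: transducer arc labels, in two forms. One arc per pronunciation: THE:DH.AH, THE:DH.IY, DOG:D.AO.G. One arc per phone: THE:DH followed by ε:AH or ε:IY; DOG:D followed by ε:AO, ε:G.] 57 / 139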

Example: Mapping Words To Phones. [Figure: A accepts the word sequence THE DOG; T has arcs THE:DH, ε:AH, ε:IY, DOG:D, ε:AO, ε:G; A ◦ T accepts DH, then AH or IY, then D AO G.] 58 / 139
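To make the result of this composition concrete, the sketch below enumerates the phone strings that A ◦ T would accept for a word sequence. It is not a composition implementation: it simply expands every pronunciation choice, and the `LEXICON` dictionary and function name are illustrative assumptions built from the THE/DOG lexicon on the slides.

```python
from itertools import product

# Pronunciations taken from the slide's lexicon; the dict layout is an assumption.
LEXICON = {
    "THE": [["DH", "AH"], ["DH", "IY"]],
    "DOG": [["D", "AO", "G"]],
}

def phone_strings(words, lexicon=LEXICON):
    """Return every phone sequence obtained by choosing one pronunciation per word."""
    options = [lexicon[w] for w in words]
    return [
        [phone for pron in choice for phone in pron]
        for choice in product(*options)
    ]

for seq in phone_strings(["THE", "DOG"]):
    print(" ".join(seq))
```

A real decoder never enumerates strings like this; composition keeps the alternatives as shared paths in one graph.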

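Example: Inserting Optional Silences. [Figure: A with states 1 to 4 and arcs C, A, B; single-state transducer T with arcs A:A, B:B, C:C, <epsilon>:~SIL; A ◦ T with states 1 to 4, arcs C, A, B, and a ~SIL arc at each of the four states.] Don’t forget identity transformations! Strings that aren’t accepted are discarded. 59 / 139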

Example: Rewriting CI Phones as HMM’s. [Figure: A accepts the phone sequence D AO G; T has, for each phone, arcs of the form D:$g_{D.1}$, ε:$g_{D.1}$, ε:$g_{D.2}$, ε:$g_{D.2}$, ε:ε (and likewise for AO and G); A ◦ T is an HMM over the GMM's $g_{D.1}$, $g_{D.2}$, $g_{AO.1}$, $g_{AO.2}$, $g_{G.1}$, $g_{G.2}$, each label appearing twice.] 60 / 139

Example: Rewriting CI ⇒ CD Phones. e.g., L ⇒ L-S+IH. The basic idea: adapt FSA for trigram model. When we take an arc, we know the current trigram ($P(w_i \mid w_{i-2} w_{i-1})$). Output $w_{i-1}$-$w_{i-2}$+$w_i$! [Figure: trigram FSA over the words dit and dah.] 61 / 139
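The labeling convention on this slide (center phone, then left context after "-", then right context after "+") can be illustrated directly on a phone list. This is only a sketch of the naming scheme, not of the FST construction; the function name and the use of `|` as a boundary symbol at the sequence edges are assumptions.

```python
def to_triphones(phones, boundary="|"):
    """Rewrite a CI phone sequence as CD labels of the form center-left+right."""
    padded = [boundary] + list(phones) + [boundary]
    return [
        f"{padded[i]}-{padded[i - 1]}+{padded[i + 1]}"
        for i in range(1, len(padded) - 1)
    ]

print(to_triphones(["D", "AO", "G"]))
```

Note that the label for a phone cannot be emitted until the next phone is known, which is why the transducer outputs the triphone for $w_{i-1}$ when it reads $w_i$.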

How to Express CD Expansion via FST’s? [Figure: A is an FSA over the phones T, AA, D. T is a transducer with phone:triphone arcs T:AA-T+T, T:AA-D+T, D:AA-D+D, D:AA-T+D, AA:T-AA+AA, AA:D-AA+AA, AA:T-|+AA, AA:D-|+AA, ε:AA-T+|, ε:AA-D+|, T:ε, D:ε. A ◦ T has arcs labeled with the triphones AA-T+T, T-AA+AA, T-|+AA, AA-T+|, AA-T+D, D-|+AA, AA-D+|, AA-D+T, D-AA+AA, AA-D+D.] 62 / 139

How to Express CD Expansion via FST’s? [Figure: the FSA over phones T, AA, D and its expansion, with arcs labeled AA-T+T, T-AA+AA, T-|+AA, AA-T+|, AA-T+D, AA-D+|, D-|+AA, AA-D+T, D-AA+AA, AA-D+D.] Point: composition automatically expands the FSA to correctly handle context! It makes multiple copies of states in the original FSA that can exist in different triphone contexts. (And makes multiple copies of only these states.) 63 / 139

Example: Rewriting CD Phones as HMM’s. [Figure: A accepts the triphone sequence D-|+AO AO-D+G G-AO+|. T has arcs D-|+AO:$g_{D.1,3}$, ε:$g_{D.1,3}$, ε:$g_{D.2,7}$, ε:$g_{D.2,7}$, ε:ε; AO-D+G:$g_{AO.1,5}$, ε:$g_{AO.1,5}$, ε:$g_{AO.2,3}$, ε:$g_{AO.2,3}$, ε:ε; G-AO+|:$g_{G.1,8}$, ε:$g_{G.1,8}$, ε:$g_{G.2,4}$, ε:$g_{G.2,4}$, ε:ε. A ◦ T is an HMM over $g_{D.1,3}$, $g_{D.2,7}$, $g_{AO.1,5}$, $g_{AO.2,3}$, $g_{G.1,8}$, $g_{G.2,4}$, each label appearing twice.] 64 / 139

Recap: Whew! Design some finite-state machines. $L$ = language model FSA. $T_{\mathrm{LM}\to\mathrm{CI}}$ = FST mapping to CI phone sequences. $T_{\mathrm{CI}\to\mathrm{CD}}$ = FST mapping to CD phone sequences. $T_{\mathrm{CD}\to\mathrm{GMM}}$ = FST mapping to GMM sequences. Compute final decoding graph via composition: $L \circ T_{\mathrm{LM}\to\mathrm{CI}} \circ T_{\mathrm{CI}\to\mathrm{CD}} \circ T_{\mathrm{CD}\to\mathrm{GMM}}$. 65 / 139

Where Are We? 1. Introduction to FSA’s, FST’s, and Composition. 2. What Can Composition Do? 3. How To Compute Composition. 4. Composition and Graph Expansion. 5. Weighted FSM’s. 66 / 139

What About Those Probability Thingies? e.g., to hold language model probs, transition probs, etc. FSM’s ⇒ weighted FSM’s: WFSA’s, WFST’s. Each arc has a score or cost. So do final states. [Figure: WFSA with states 1, 2/1.0, 3/0.4 and arcs a/0.3, a/0.2, b/1.3, c/0.4, ε/0.6.] 67 / 139

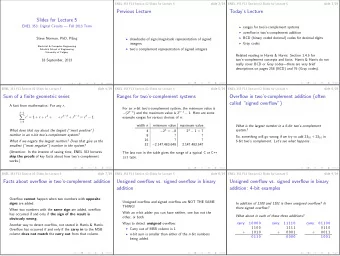

What Is A Cost? HMM’s have probabilities on arcs. Prob of path is product of arc probs. [Figure: states 1 to 4 with arcs a/0.1, b/1.0, d/0.01.] WFSM’s have negative log probs on arcs. Cost of path is sum of arc costs plus final cost. [Figure: states 1, 2, 3, 4/0 with arcs a/1, b/0, d/2.] 68 / 139
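The two figures on this slide are related by a negative logarithm. A small sketch, assuming base-10 logs (which is what the example values 0.1 → 1 and 0.01 → 2 suggest) and a final cost of 0:

```python
import math

def to_cost(prob):
    """Negative log probability; base 10 is assumed to match the slide's example."""
    return -math.log10(prob)

arc_probs = [0.1, 1.0, 0.01]          # a, b, d arcs from the first figure
arc_costs = [to_cost(p) for p in arc_probs]
final_cost = 0.0                      # final state 4/0 in the second figure

path_prob = math.prod(arc_probs)      # product of arc probabilities
path_cost = sum(arc_costs) + final_cost  # sum of arc costs plus final cost

print(arc_costs, path_prob, path_cost)
```

Multiplying probabilities and adding costs describe the same path score; sums of costs are used in practice because products of many small probabilities underflow.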

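What Does a WFSA Accept? A WFSA accepts a string $i$ with cost $c$ if there is a path from the initial state to a final state labeled with $i$ and with cost $c$. How costs/labels are distributed along the path doesn’t matter! Do these accept the same strings with the same costs? [Figure: two WFSA's, each with states 1, 2, 3; the first has arcs a/1, b/2 and final state 3/3; the second has arcs a/0, b/0 and final state 3/6.] 69 / 139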

What If Two Paths With Same String? How to compute cost for this string? Use “min” operator to compute combined cost? Combine paths with same labels. [Figure: left, a WFSA with states 1, 2, 3/0 and arcs a/1, a/2, b/3, c/0; right, the combined WFSA with states 1, 2, 3/0 and arcs a/1, b/3, c/0.] Operations (+, min) form a semiring (the tropical semiring). 70 / 139
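The (+, min) semiring on this slide can be sketched as two small functions: costs add along a path, and alternative paths for the same string are merged by taking the minimum. The path data below is a hypothetical reading of the figure (two paths for the string "ac", via a/1 and a/2, both followed by c/0).

```python
INF = float("inf")  # identity for min: "no path"

def times(x, y):
    """Extend a path: costs add."""
    return x + y

def plus(x, y):
    """Merge alternative paths with the same labels: keep the cheaper one."""
    return min(x, y)

def path_cost(arc_costs, final_cost):
    total = final_cost
    for c in arc_costs:
        total = times(total, c)
    return total

def string_cost(paths):
    """Combined cost of one string given all of its paths."""
    best = INF
    for arc_costs, final_cost in paths:
        best = plus(best, path_cost(arc_costs, final_cost))
    return best

# Hypothetical paths for "ac": (arc costs, final cost).
paths_for_ac = [([1.0, 0.0], 0.0), ([2.0, 0.0], 0.0)]
combined = string_cost(paths_for_ac)
print(combined)
```

Taking the minimum corresponds to the Viterbi approximation: only the best path for each string is kept, rather than the sum of probabilities over all paths.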

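Which Is Different From the Others? [Figure: four small WFSA's. The first has states 1, 2/1 and arc a/0; the second has states 1, 2/0.5 and arc a/0.5; the remaining two use the labels a/1, <epsilon>/1, a/0 with final state 3/0, and b/1, a/3, b/1 with final state 2/-2.] 71 / 139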

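Weighted Composition. [Figure: A with states 1, 2, 3, 4/0 and arcs a/1, b/0, d/2; single-state T with state 1/1 and arcs a:A/2, b:B/1, c:C/0, d:D/0; A ◦ T with states 1, 2, 3, 4/1 and arcs A/3, B/1, D/2.] 72 / 139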
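The numbers in this figure follow from adding the costs of matched arcs and of matched final states. The sketch below reproduces them with an ε-free weighted composition over reachable state pairs. The tuple encodings and function name are assumptions for illustration, and A is assumed to be the chain 1 -a/1-> 2 -b/0-> 3 -d/2-> 4.

```python
from collections import deque

def compose_weighted(a_arcs, a_init, a_finals, t_arcs, t_init, t_finals):
    """Epsilon-free weighted composition in the tropical semiring.

    a_arcs: list of (src, label, cost, dst)
    t_arcs: list of (src, in_label, out_label, cost, dst)
    a_finals, t_finals: dict mapping final state -> final cost
    """
    a_out = {}
    for s, i, c, s2 in a_arcs:
        a_out.setdefault(s, []).append((i, c, s2))
    t_out = {}
    for t, i, o, c, t2 in t_arcs:
        t_out.setdefault((t, i), []).append((o, c, t2))

    init = (a_init, t_init)
    arcs, finals = [], {}
    seen, queue = {init}, deque([init])
    while queue:
        s, t = queue.popleft()
        if s in a_finals and t in t_finals:
            finals[(s, t)] = a_finals[s] + t_finals[t]   # final costs add
        for i, ca, s2 in a_out.get(s, []):
            for o, ct, t2 in t_out.get((t, i), []):
                nxt = (s2, t2)
                arcs.append(((s, t), o, ca + ct, nxt))   # arc costs add
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
    return arcs, init, finals

A = [(1, "a", 1.0, 2), (2, "b", 0.0, 3), (3, "d", 2.0, 4)]
T = [(1, "a", "A", 2.0, 1), (1, "b", "B", 1.0, 1),
     (1, "c", "C", 0.0, 1), (1, "d", "D", 0.0, 1)]
arcs, init, finals = compose_weighted(A, 1, {4: 0.0}, T, 1, {1: 1.0})
for arc in arcs:
    print(arc)
print(finals)
```

The printed arcs carry costs 3, 1, and 2, and the final state has cost 1, matching the A/3, B/1, D/2 and 4/1 labels in the figure.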

The Bottom Line. Place LM, AM log probs in $L$, $T_{\mathrm{LM}\to\mathrm{CI}}$, $T_{\mathrm{CI}\to\mathrm{CD}}$, $T_{\mathrm{CD}\to\mathrm{GMM}}$, e.g., LM probs, pronunciation probs, transition probs. Compute decoding graph via weighted composition: $L \circ T_{\mathrm{LM}\to\mathrm{CI}} \circ T_{\mathrm{CI}\to\mathrm{CD}} \circ T_{\mathrm{CD}\to\mathrm{GMM}}$. Then, doing Viterbi decoding on this big HMM correctly computes (more or less): $\omega^* = \arg\max_\omega P(\omega \mid x) = \arg\max_\omega P(\omega)\, P(x \mid \omega)$. 73 / 139

Recap: FST’s and Composition? Awesome! Operates on all paths in WFSA (or WFST) simultaneously. Rewrites symbols as other symbols. Context-dependent rewriting of symbols. Adds in new scores. Restricts set of allowed paths (intersection). Or all of above at once. 74 / 139

Weighted FSM’s and ASR Graph expansion can be framed . . . As series of (weighted) composition operations. Correctly combines scores from multiple WFSM’s. Building FST’s for each step is pretty straightforward . . . Except for context-dependent phone expansion. Handles graph expansion for training, too. 75 / 139

Discussion. Don’t need to write code?! AT&T FSM toolkit ⇒ OpenFst; lots of others. Generate FST’s as text files, e.g., the four lines "1 2 C", "2 3 A", "3 4 B", "4" describe the FSA with states 1 to 4 and arcs C, A, B. WFSM framework is very flexible. Just design new FST’s! e.g., CD pronunciations at word or phone level. 76 / 139
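As an illustration of how simple this text format is, here is a minimal reader for the unweighted acceptor example on the slide. It is a sketch, not a replacement for a toolkit: it assumes each arc line is "source destination label", a line holding a single state marks that state as final, and the source of the first line is the initial state. Weighted lines and transducer lines (with separate input and output labels) are not handled.

```python
def parse_fsa_text(text):
    """Parse an unweighted acceptor given as text lines.

    Returns (arcs, init, finals) with arcs as (src, label, dst) tuples.
    """
    arcs, finals = [], set()
    init = None
    for line in text.splitlines():
        fields = line.split()
        if not fields:
            continue                      # skip blank lines
        if init is None:
            init = fields[0]              # assumed: first state listed is initial
        if len(fields) == 1:
            finals.add(fields[0])         # a lone state is a final state
        else:
            src, dst, label = fields[:3]
            arcs.append((src, label, dst))
    return arcs, init, finals

example = """\
1 2 C
2 3 A
3 4 B
4
"""
arcs, init, finals = parse_fsa_text(example)
print(arcs, init, finals)
```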

Part II Making Decoding Fast 77 / 139

How Big? How Fast? Time to look at efficiency. How big is the one big HMM? How long will Viterbi take? 78 / 139

Pop Quiz. How many states in the HMM representing a trigram model with vocabulary size $|V|$? How many arcs? [Figure: trigram FSA over the words dit and dah.] 79 / 139

Issue: How Big The Graph? Trigram model (e.g., vocabulary size $|V| = 2$). [Figure: trigram FSA over the words dit and dah.] $|V|^3$ word arcs in FSA representation. Words are ∼4 phones = 12 states on average (CI). If $|V| = 50000$, $50000^3 \times 12 \approx 10^{15}$ states in graph. PC’s have $\sim 10^{10}$ bytes of memory. 80 / 139
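The size estimate on this slide is easy to check with a few lines of arithmetic. The variable names are illustrative; the numbers are the slide's.

```python
V = 50_000                 # vocabulary size
states_per_word = 12       # ~4 phones x 3 HMM states each (CI)
memory_bytes = 10**10      # rough memory of a PC, per the slide

word_arcs = V ** 3                         # one arc per trigram
graph_states = word_arcs * states_per_word

print(f"{word_arcs:.2e} word arcs")
print(f"{graph_states:.2e} states")
print(f"{graph_states // memory_bytes} states per byte of memory")
```

Even at one byte per state, the naive graph would exceed memory by roughly five orders of magnitude.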

Issue: How Slow Decoding? In each frame, loop through every state in graph. If 100 frames/sec, $10^{15}$ states, how many cells to compute per second? A core can do $\sim 10^{11}$ floating-point ops per second. 81 / 139
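A back-of-the-envelope answer to the question on this slide, under the optimistic assumption (not stated in the lecture) that each Viterbi cell costs a single floating-point operation:

```python
graph_states = 10**15      # states in the naive graph
frames_per_sec = 100       # acoustic frames per second of audio
core_flops = 10**11        # floating-point ops per second for one core

cells_per_sec = graph_states * frames_per_sec   # cells per second of audio
slowdown = cells_per_sec // core_flops          # seconds of compute per second of audio

print(f"{cells_per_sec:.0e} cells per second of audio")
print(f"about {slowdown:,}x slower than real time on one core")
```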

Recap Naive graph expansion is way too big; Viterbi way too slow. Shrinking the graph also makes things faster! How to shrink the one big HMM? 82 / 139

Where Are We? 1. Shrinking the Language Model. 2. Graph Optimization. 3. Pruning. 4. Other Viterbi Optimizations. 5. Other Decoding Paradigms. 83 / 139

Compactly Representing N-Gram Models. One big HMM size ∝ LM HMM size. Trigram model: $|V|^3$ arcs in naive representation. [Figure: trigram FSA over the words dit and dah.] Small fraction of all trigrams occur in training data. Is it possible to keep arcs only for seen trigrams? 84 / 139

Compactly Representing N-Gram Models. Can express smoothed n-gram models via backoff distributions:

$$P_{\text{smooth}}(w_i \mid w_{i-1}) = \begin{cases} P_{\text{primary}}(w_i \mid w_{i-1}) & \text{if } \operatorname{count}(w_{i-1} w_i) > 0 \\ \alpha_{w_{i-1}} P_{\text{smooth}}(w_i) & \text{otherwise} \end{cases}$$

Idea: avoid arcs for unseen trigrams via backoff states. 85 / 139

Compactly Representing N-Gram Models.

$$P_{\text{smooth}}(w_i \mid w_{i-1}) = \begin{cases} P_{\text{primary}}(w_i \mid w_{i-1}) & \text{if } \operatorname{count}(w_{i-1} w_i) > 0 \\ \alpha_{w_{i-1}} P_{\text{smooth}}(w_i) & \text{otherwise} \end{cases}$$

[Figure: bigram WFSA over the words one, two, three, with one state per history word (one, two, three) and a backoff state ε. Bigram arcs are labeled word/probability, e.g., two/P(two | one), three/P(three | two); backoff arcs are labeled ε/α(one), ε/α(two), ε/α(three); unigram arcs are labeled one/P(one), two/P(two), three/P(three).] 86 / 139
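The backoff formula above can be sketched as a table lookup with a fallback. This is a toy illustration with made-up probabilities, not a trained model: a bigram counts as "seen" when it has an entry in `bigram_probs`, and the backoff weight α is chosen here so that the distribution after "one" sums to 1.

```python
def p_smooth(w, prev, bigram_probs, backoff_weights, unigram_probs):
    """Backoff bigram probability P_smooth(w | prev)."""
    if (prev, w) in bigram_probs:                 # seen bigram: use primary estimate
        return bigram_probs[(prev, w)]
    return backoff_weights[prev] * unigram_probs[w]   # unseen: alpha * unigram

# Hypothetical numbers for illustration only.
unigram_probs = {"one": 0.5, "two": 0.3, "three": 0.2}
bigram_probs = {("one", "two"): 0.6}
# alpha(one) spreads the leftover mass (1 - 0.6) over the unseen words,
# whose unigram mass is 1 - 0.3.
backoff_weights = {"one": (1 - 0.6) / (1 - 0.3)}

dist = {w: p_smooth(w, "one", bigram_probs, backoff_weights, unigram_probs)
        for w in unigram_probs}
print(dist, sum(dist.values()))
```

In the graph, the first branch is a direct bigram arc and the second branch is the two-arc path through the backoff state, whose cost is the sum of the ε arc's cost and the unigram arc's cost.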

Problem Solved!? Is this FSA deterministic? i.e. , are there multiple paths with same label sequence? Is this method exact ? Does Viterbi ever use the wrong probability? 87 / 139

Can We Make the LM Even Smaller? Sure, just remove some more arcs. Which? Count cutoffs. e.g. , remove all arcs corresponding to n -grams . . . Occurring fewer than k times in training data. Likelihood/entropy-based pruning (Stolcke, 1998). Choose those arcs which when removed, . . . Change likelihood of training data the least. 88 / 139
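Count cutoffs are the simpler of the two pruning methods on this slide and can be sketched in a few lines. The helper names and the toy text are assumptions; a real LM would also need its backoff weights recomputed after pruning, which this sketch omits.

```python
from collections import Counter

def count_ngrams(words, n):
    """Count all n-grams (as tuples) in a list of words."""
    return Counter(tuple(words[i:i + n]) for i in range(len(words) - n + 1))

def apply_count_cutoff(ngram_counts, k):
    """Keep only n-grams occurring at least k times."""
    return {ng: c for ng, c in ngram_counts.items() if c >= k}

words = "dit dah dit dah dit dit".split()
bigrams = count_ngrams(words, 2)
kept = apply_count_cutoff(bigrams, k=2)
print(bigrams)
print(kept)
```

Every n-gram that is dropped removes one arc from the LM graph; its probability is then obtained through the backoff path instead.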

Discussion Only need to keep seen n -grams in LM graph. Exact representation blows up graph several times. Can further prune LM to arbitrary size. e.g. , for BN 4-gram model, 100MW training data . . . Pruning by factor of 50 ⇒ +1% absolute WER. Graph small enough now? Let’s keep on going; smaller ⇒ faster! 89 / 139

Where Are We? 1. Shrinking the Language Model. 2. Graph Optimization. 3. Pruning. 4. Other Viterbi Optimizations. 5. Other Decoding Paradigms. 90 / 139

Graph Optimization Can we modify topology of graph . . . Such that it’s smaller (fewer arcs or states) . . . Yet accepts same strings (with same costs)? (OK to move labels and costs along paths.) 91 / 139

Graph Compaction. Consider word graph for isolated word recognition. Expanded to phone level: 39 states, 38 arcs. [Figure: phone-level graph with a separate path for each pronunciation of the words ABROAD, ABUSE, ABSURD, and ABU; arcs are labeled with phones such as AX, AE, AA, B, R, AO, DD, Y, UW, S, Z, ER, and each path carries its word label.] 92 / 139

Determinization. Share common prefixes: 29 states, 28 arcs. [Figure: the word graph for ABROAD, ABUSE, ABSURD, and ABU with paths merged wherever they begin with the same phones.] 93 / 139

Minimization. Share common suffixes: 18 states, 23 arcs. [Figure: the word graph for ABROAD, ABUSE, ABSURD, and ABU with both common prefixes and common suffixes merged.] Does this accept the same strings as the original graph? Original: 39 states, 38 arcs. 94 / 139

What Is A Deterministic FSM? Same as being nonhidden for HMM. No two arcs exiting same state with same input label. No ε arcs. i.e., for any input label sequence, only one state is reachable from the start state. [Figure: example FSM's with arcs labeled A, B, and <epsilon>.] 95 / 139

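Determinization: A Simple Case. [Figure: left, an FSM with states 1 to 4 and arcs labeled a and b; right, its determinized form with states 1, {2,3}, 4 and arcs a, b.] Does this accept same strings? States on right ⇔ state sets on left! 96 / 139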

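A Less Simple Case. [Figure: left, an FSM with states 1 to 5 and arcs labeled a, b, and <epsilon>; right, its determinized form with states {1,2}, {3,4}, {4,5} and arcs labeled a and b.] Does this accept same strings? ($ab^*$) 97 / 139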

Determinization. Start from start state. Keep list of state sets not yet expanded. For each, compute outgoing arcs in logical way, creating new state sets as needed. Must follow ε arcs when computing state sets. [Figure: left, an FSM with states 1 to 5 and arcs labeled A, B, and <epsilon>; right, its determinized form with states 1, {2,3,5}, 4 and arcs A, B.] 98 / 139
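The procedure on this slide is the classic subset construction, sketched below for unweighted acceptors. The arc encoding and names are assumptions, and the example arcs are hypothetical, chosen so that the result has the state sets 1, {2,3,5}, 4 shown in the figure. Weighted determinization, which must also track residual costs, is not covered.

```python
from collections import deque

EPS = "<epsilon>"

def eps_closure(states, arcs):
    """All states reachable from `states` using only epsilon arcs."""
    closure, stack = set(states), list(states)
    while stack:
        s = stack.pop()
        for label, dst in arcs.get(s, []):
            if label == EPS and dst not in closure:
                closure.add(dst)
                stack.append(dst)
    return frozenset(closure)

def determinize(arcs, init, finals):
    """Subset construction. arcs: dict state -> list of (label, dst)."""
    start = eps_closure({init}, arcs)
    det_arcs, det_finals = {}, set()
    seen, queue = {start}, deque([start])   # state sets not yet expanded
    while queue:
        cur = queue.popleft()
        if cur & finals:
            det_finals.add(cur)
        by_label = {}
        for s in cur:                       # group destinations by label
            for label, dst in arcs.get(s, []):
                if label != EPS:
                    by_label.setdefault(label, set()).add(dst)
        for label, dsts in by_label.items():
            nxt = eps_closure(dsts, arcs)   # must follow epsilon arcs
            det_arcs[(cur, label)] = nxt
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return det_arcs, start, det_finals

# Hypothetical nondeterministic FSM with one epsilon arc.
nfa = {1: [("A", 2), ("A", 3)], 2: [(EPS, 5)], 3: [("B", 4)], 5: [("B", 4)]}
det_arcs, start, det_finals = determinize(nfa, 1, {4})
for (src, label), dst in det_arcs.items():
    print(sorted(src), label, sorted(dst))
```

Each state of the result is a set of original states, so in the worst case the output can be exponentially larger than the input, even though in examples like the word graph it shrinks.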

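Example 2. [Figure: left, an FSM with states 1 to 5 and arcs labeled a and b; right, its determinized form with states 1, {2,3}, {2,3,4,5}, {4,5} and arcs labeled a and b.] 99 / 139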

Example 3. [Figure: the phone-level word graph for ABROAD, ABUSE, ABSURD, and ABU, with its states numbered 1 to 39 and arcs labeled with phones and word labels.] 100 / 139
