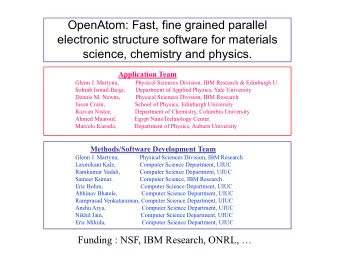

Design & Analysis of Physical Design Algorithms

Majid Sarrafzadeh, Elaheh Bozorgzadeh, Ryan Kastner, Ankur Srivatsava

UCLA CS Dept, majid@cs.ucla.edu

ER, ISPD - 2001, UCLA

Outline

- Introduction
- Problem Transformation: Upper-Bound
- Practical Implication of Lower-Bound Analysis
- Greedy Algorithms and their proofs
- Greedy vs Global
- Approximation Algorithms
- Probabilistic Algorithms
- Conclusions
- Main message:
  - We need more/better algorithms
  - Better understanding (fewer hacks)

Introduction

Some points to consider

- Physical design problems are getting harder
  - Size, concurrent optimization, DSM, …
- Novel/effective algorithms are essential in coping with the complexity
  - (mincut vs congestion)
- Analysis of these algorithms is of fundamental importance
  - We can then concentrate on other issues/parameters
- Novel algorithmic paradigms need to be tried

In this talk

- Talk about paradigms that have been (or can be) used
  - Upper-bound transformation
- Seemingly unimportant concepts can be very powerful
  - Proof of a greedy algorithm
- There are LOTS of things that we still do not understand (and yet seem important in making progress)
  - Congestion
- “We” need to make research progress (abstract/long term, in addition to the usual hacks).

Problem Transformation: Upper Bound

Formal Definition

- A is X(n)-transformable to B:
  1. The input to A is converted to a suitable input to B
  2. Problem B is solved
  3. The output of B is transformed into a correct solution to problem A
- Steps 1 & 3 take X(n) time
- Upper Bound via Transformability: If B can be solved in T(n) time and A is X(n)-transformable to B, then A can be solved in T(n) + O(X(n)) time.
- Quality of the solution to A?
  - Bad example: finding the min of a list via sorting, O(n) transform
  - Good example: element uniqueness via sorting, O(n) transform
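The "good example" can be sketched in a few lines of Python. This is only an illustration of the three steps, not code from the talk; the function name `has_duplicates` is hypothetical. Step 1 is trivial here (the list is passed to the sort unchanged), step 2 is the sort, and step 3 is a linear scan of adjacent elements.

```python
def has_duplicates(items):
    """Element uniqueness (problem A) solved via sorting (problem B)."""
    # Step 1: the input to A is already a valid input to B (no conversion).
    # Step 2: solve B, i.e. sort, in T(n) = O(n log n) time.
    ordered = sorted(items)
    # Step 3: O(n) scan; after sorting, equal elements must be adjacent.
    return any(a == b for a, b in zip(ordered, ordered[1:]))


if __name__ == "__main__":
    print(has_duplicates([3, 1, 2, 3]))  # a repeated 3 is detected
    print(has_duplicates([3, 1, 2]))     # all elements distinct
```

The same pattern applied to finding the minimum is the "bad example": it would also cost O(n log n), although a single O(n) pass finds the minimum directly.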

Multi-way Partitioning Using Bi-partition Heuristics [Wang et al]

Input:

- A target graph to be partitioned.
- k: number of target partitions.

Output:

- Each vertex in the target graph gets assigned to one of the target partitions.
- Numbers of vertices among target partitions are “the same” (balanced).

Objective:

- The number of edges between target partitions (net-cut) is minimized.
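To make the objective and the balance condition concrete, here is a minimal Python sketch on a plain graph given as an edge list. The names `net_cut` and `is_balanced` and the `tolerance` parameter are assumptions made for illustration; the slide only says the partition sizes should be "the same", so the exact balance rule below is a guess.

```python
from collections import Counter


def net_cut(edges, part):
    """Count edges whose two endpoints lie in different partitions."""
    return sum(1 for u, v in edges if part[u] != part[v])


def is_balanced(part, k, tolerance=1):
    """Assumed balance rule: partition sizes differ by at most `tolerance`."""
    sizes = Counter(part.values())
    counts = [sizes.get(p, 0) for p in range(k)]
    return max(counts) - min(counts) <= tolerance


if __name__ == "__main__":
    # A 4-cycle split into two halves.
    edges = [(0, 1), (1, 2), (2, 3), (3, 0)]
    part = {0: 0, 1: 0, 2: 1, 3: 1}
    print(net_cut(edges, part), is_balanced(part, k=2))
```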

Example

[Figure: a target graph and three partitions of it. Bi-partition, net-cut = 2; 3-way partition, net-cut = 4; 4-way partition, net-cut = 5.]

FM (and FM++): The Industry Standard for Bi-Partitioning

[Figure: example graph with each vertex labelled by its gain (+1, +0, or -1).]

- Each vertex has a “gain” associated with it.
- The vertex with the biggest gain will be moved.
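The gain idea can be illustrated with a simplified Python sketch. It assumes a plain graph (two-pin edges) and defines the gain of a vertex as the reduction in cut size if that vertex alone switches sides. A full FM implementation also handles hypergraph nets, vertex locking, balance constraints, and bucket data structures, none of which appear here; the names `cut_size`, `gains`, and `best_move` are hypothetical.

```python
def cut_size(edges, side):
    """Number of edges crossing the bi-partition."""
    return sum(1 for u, v in edges if side[u] != side[v])


def gains(edges, side):
    """Gain of each vertex: cut edges it touches minus uncut edges it touches."""
    gain = {v: 0 for v in side}
    for u, v in edges:
        # Moving an endpoint uncuts a cut edge (+1) or cuts an uncut edge (-1).
        delta = 1 if side[u] != side[v] else -1
        gain[u] += delta
        gain[v] += delta
    return gain


def best_move(edges, side):
    """Vertex with the biggest gain (first one found on ties)."""
    g = gains(edges, side)
    return max(g, key=g.get)


if __name__ == "__main__":
    edges = [(0, 1), (1, 2), (2, 3)]        # a path on four vertices
    side = {0: 0, 1: 1, 2: 0, 3: 1}         # alternating sides: every edge is cut
    v = best_move(edges, side)
    before = cut_size(edges, side)
    side[v] = 1 - side[v]                   # move the chosen vertex
    print(v, before, cut_size(edges, side))
```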

Possible Approaches For Multi-Way Partitioning

- Direct extension of FM. Each target vertex has k-1 possible moving destinations.
- Using bi-partitioning heuristics (problem transformation):
  - Hierarchical approach: recursively bi-partition the target graph (1+2+4 bipartitions to do 8-way).
  - Flat approach: iteratively improve the k-way partition result by performing local bi-partitioning (C(8,2) = 28 or more bipartitions to do 8-way).
- Question: which one is more powerful?
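The bipartition counts quoted for the 8-way case can be reproduced with a small Python sketch. It assumes k is a power of two and uses a placeholder bipartitioner, `split_in_half`, that simply halves a list; in practice that slot would be filled by a real bi-partitioning tool such as FM. All function names are hypothetical.

```python
from math import comb


def split_in_half(vertices):
    """Placeholder bipartitioner: a stand-in for a real tool such as FM."""
    mid = len(vertices) // 2
    return vertices[:mid], vertices[mid:]


def hierarchical_partition(vertices, k, bipartition=split_in_half):
    """Recursively bisect into k parts (k assumed to be a power of two).

    Returns the list of parts and the number of bipartition calls made.
    """
    if k == 1:
        return [vertices], 0
    left, right = bipartition(vertices)
    left_parts, left_calls = hierarchical_partition(left, k // 2, bipartition)
    right_parts, right_calls = hierarchical_partition(right, k // 2, bipartition)
    return left_parts + right_parts, 1 + left_calls + right_calls


def flat_pairs_per_pass(k):
    """Pairs of partitions a flat pass can refine by local bi-partitioning."""
    return comb(k, 2)


if __name__ == "__main__":
    parts, calls = hierarchical_partition(list(range(16)), 8)
    print(len(parts), calls, flat_pairs_per_pass(8))
```

For k = 8 the hierarchical recursion makes 1 + 2 + 4 = 7 calls, while one flat pass over all pairs needs C(8,2) = 28, and the flat approach may repeat passes.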

Why problem transformation?

- The direct extension of the FM approach does not yield good partitioning results in general.
- The state-of-the-art bi-partitioning tools are very effective.
- It is straightforward (little R&D) to solve the multi-way partitioning problem using existing bi-partitioning tools via hierarchical or flat approaches.

Bi-partition Algorithms

- A $\delta$-approximation algorithm: the bi-partition result is less than or equal to $\delta C_{opt}$.
- An $\alpha$-balanced bi-section problem: the number of nodes in each partition is between $\alpha n$ and $(1-\alpha)n$. (A perfectly balanced bi-section problem is a 0.5-balanced problem.)
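Both definitions are simple inequalities, which a short Python sketch can state directly. The function names and argument layout are assumptions for illustration only, and the check takes the optimal cut as given; in practice the optimum is usually unknown.

```python
def is_alpha_balanced(size_a, size_b, alpha):
    """True if both sides hold between alpha*n and (1 - alpha)*n nodes."""
    n = size_a + size_b
    return all(alpha * n <= s <= (1 - alpha) * n for s in (size_a, size_b))


def within_delta(cut, optimal_cut, delta):
    """True if a cut meets the delta-approximation guarantee."""
    return cut <= delta * optimal_cut


if __name__ == "__main__":
    print(is_alpha_balanced(2, 6, alpha=0.25))   # 2 and 6 lie within [2, 6]
    print(is_alpha_balanced(1, 7, alpha=0.25))   # 1 is below the lower limit 2
    print(within_delta(cut=6, optimal_cut=4, delta=1.5))
```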

The First Cut

[Figure: example graph with edges labelled $e_1$ to $e_6$.]

$$\text{Cut}_1 = e_1 + e_2 + e_3 + e_4 \le e_1 + e_2 + e_3 + e_4 + e_5 + e_6 \le \delta\,\text{OPT}$$

The Second Cut

[Figure: the optimal solution and the second-level cuts (Cut 2) on the example graph with edges labelled $e_1$ to $e_6$.]

$$\text{Cut}_2 \le \delta\,(e_1 + e_2 + e_3 + e_4 + e_5 + e_6)$$

$$\text{Cut}_2 \le \delta\,\text{OPT}$$

Conclusion

- For the hierarchical approach: $C_{hie} = O(\delta \log k)\, C_{opt}$
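One way to read the hierarchical bound, as a sketch rather than the authors' full proof: assume k is a power of two, so the recursion has $\log_2 k$ levels, and assume (as the first and second cut suggest) that the cuts made at any one level together cost at most $\delta\, C_{opt}$. The symbol $\text{Cut}_i$ for the total cut at level $i$ is notation introduced here. Every edge between final partitions is cut at exactly one level, so summing over levels gives:

$$
C_{hie} = \sum_{i=1}^{\log_2 k} \text{Cut}_i \;\le\; \sum_{i=1}^{\log_2 k} \delta\, C_{opt} \;=\; \delta \log_2 k \cdot C_{opt} \;=\; O(\delta \log k)\, C_{opt}
$$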

The Flat Approach

- For the flat approach: $C_{flat} = O(\delta k)\, C_{opt}$
