Optimization Techniques

15-486/782: Artificial Neural Networks
Dave Touretzky, Fall 2006 (based on slides by A. Courville, Spring 2002, and K. Laskowski, Spring 2004)

Reading: C. M. Bishop, Neural Networks for Pattern Recognition (NNPR), §7

What is Parameter Optimization?

A fancy name for training: the selection of parameter values that are optimal in some desired sense (e.g., that minimize an objective function you choose over a dataset you choose). The parameters are the weights and biases of the network. In this lecture, we will not address learning of network structure: we assume a fixed number of layers and a fixed number of hidden units. In neural networks, training is typically iterative and time-consuming, so it is in our interest to reduce the training time as much as possible.
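In symbols (a sketch in our own notation, not the slides': $\mathbf{w}$ is the vector of all weights and biases, $E$ the chosen objective, $\mathcal{D}$ the chosen dataset), training looks for

$$
\mathbf{w}^* = \arg\min_{\mathbf{w}} E(\mathbf{w}; \mathcal{D}).
$$

For example, with the sum-of-squares error on $N$ input/target pairs $(\mathbf{x}^{(n)}, \mathbf{y}^{(n)})$ and network output $\mathbf{f}(\mathbf{x}; \mathbf{w})$ (again our notation), $E(\mathbf{w}; \mathcal{D}) = \tfrac{1}{2} \sum_{n=1}^{N} \lVert \mathbf{f}(\mathbf{x}^{(n)}; \mathbf{w}) - \mathbf{y}^{(n)} \rVert^2$. Except in special cases such as purely linear networks, this minimum has no closed form, which is why training is iterative.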


Lecture Outline

In detail:

- 1. Gradient Descent (& some extensions)

- 2. Line Search

- 3. Conjugate Gradient Search

In passing:

- 4. Newton’s method

- 5. Quasi-Newton methods

We will not cover Model Trust Region methods (Scaled Conjugate Gradients, Levenberg-Marquardt).


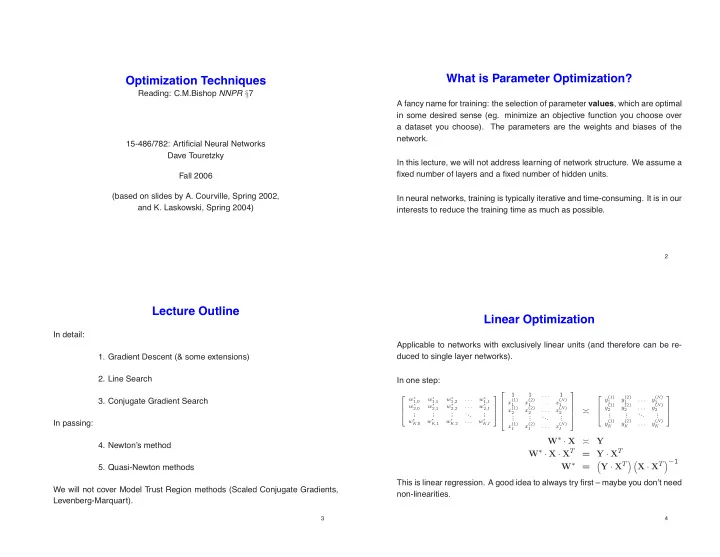

Linear Optimization

Applicable to networks with exclusively linear units (which can therefore be reduced to single-layer networks). The optimal weights are found in one step:

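$$
\begin{pmatrix}
w^*_{1,0} & w^*_{1,1} & w^*_{1,2} & \cdots & w^*_{1,I} \\
w^*_{2,0} & w^*_{2,1} & w^*_{2,2} & \cdots & w^*_{2,I} \\
\vdots & \vdots & \vdots & \ddots & \vdots \\
w^*_{K,0} & w^*_{K,1} & w^*_{K,2} & \cdots & w^*_{K,I}
\end{pmatrix}
\begin{pmatrix}
1 & 1 & \cdots & 1 \\
x^{(1)}_1 & x^{(2)}_1 & \cdots & x^{(N)}_1 \\
x^{(1)}_2 & x^{(2)}_2 & \cdots & x^{(N)}_2 \\
\vdots & \vdots & \ddots & \vdots \\
x^{(1)}_I & x^{(2)}_I & \cdots & x^{(N)}_I
\end{pmatrix}
\approx
\begin{pmatrix}
y^{(1)}_1 & y^{(2)}_1 & \cdots & y^{(N)}_1 \\
y^{(1)}_2 & y^{(2)}_2 & \cdots & y^{(N)}_2 \\
\vdots & \vdots & \ddots & \vdots \\
y^{(1)}_K & y^{(2)}_K & \cdots & y^{(N)}_K
\end{pmatrix}
$$

Here $W^*$ is $K \times (I+1)$, $X$ is $(I+1) \times N$ with a first row of ones (so column 0 of $W^*$ holds the biases), and $Y$ is $K \times N$, with one column per training pattern.

$$
W^* X \approx Y
\quad\Longrightarrow\quad
W^* X X^{\mathsf T} = Y X^{\mathsf T}
\quad\Longrightarrow\quad
W^* = Y X^{\mathsf T} \left( X X^{\mathsf T} \right)^{-1}
$$

The middle equation is the set of normal equations, whose solution minimizes the sum-of-squares error $\lVert W^* X - Y \rVert^2$ summed over all entries; the last step requires $X X^{\mathsf T}$ to be invertible.

This is linear regression. It is a good idea to always try it first; maybe you don't need non-linearities.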
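A minimal sketch of the one-step solution $W^* = Y X^{\mathsf T} (X X^{\mathsf T})^{-1}$ in plain Python, assuming no numerical library is available. The helper names (`transpose`, `matmul`, `solve`, `fit_linear_layer`) are hypothetical. Rather than forming the inverse explicitly, it solves the equivalent system $(X X^{\mathsf T}) W^{*\mathsf T} = X Y^{\mathsf T}$ by Gauss-Jordan elimination, which gives the same $W^*$ whenever $X X^{\mathsf T}$ is invertible.

```python
from typing import List

Matrix = List[List[float]]


def transpose(a: Matrix) -> Matrix:
    """Swap rows and columns."""
    return [list(col) for col in zip(*a)]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product a @ b."""
    b_cols = transpose(b)
    return [[sum(p * q for p, q in zip(row, col)) for col in b_cols] for row in a]


def solve(a: Matrix, b: Matrix, eps: float = 1e-12) -> Matrix:
    """Solve A Z = B for Z (A square) by Gauss-Jordan elimination with partial pivoting."""
    n = len(a)
    # Augmented matrix [A | B], copied as floats so the inputs are not modified.
    aug = [[float(v) for v in a[i]] + [float(v) for v in b[i]] for i in range(n)]
    for col in range(n):
        # Partial pivoting: bring the row with the largest |entry| in this column up.
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < eps:
            raise ValueError("X X^T is (numerically) singular; no unique one-step solution")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [v / p for v in aug[col]]
        # Eliminate this column from every other row.
        for r in range(n):
            if r != col and aug[r][col] != 0.0:
                f = aug[r][col]
                aug[r] = [vr - f * vc for vr, vc in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def fit_linear_layer(inputs: Matrix, targets: Matrix) -> Matrix:
    """Least-squares W* (K x (I+1)) such that W* X ~ Y.

    inputs:  I x N, column n is the input pattern x^(n)
    targets: K x N, column n is the target y^(n)
    Column 0 of the result holds the biases.
    """
    n_patterns = len(inputs[0])
    # X gets a leading row of ones so that w*_{k,0} acts as a bias.
    x = [[1.0] * n_patterns] + [[float(v) for v in row] for row in inputs]
    xxt = matmul(x, transpose(x))        # X X^T, shape (I+1) x (I+1)
    xyt = matmul(x, transpose(targets))  # X Y^T = (Y X^T)^T, shape (I+1) x K
    # Transposing W* X X^T = Y X^T gives (X X^T) W*^T = X Y^T, since X X^T is symmetric.
    return transpose(solve(xxt, xyt))


# Usage: one input, one output, targets generated by y = 2x + 1.
w_star = fit_linear_layer([[0, 1, 2, 3]], [[1, 3, 5, 7]])
print(w_star)  # approximately [[1.0, 2.0]]: bias 1, weight 2
```

Solving the linear system instead of inverting $X X^{\mathsf T}$ is a common numerical choice; in practice a library least-squares routine would normally be used instead of hand-written elimination.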
