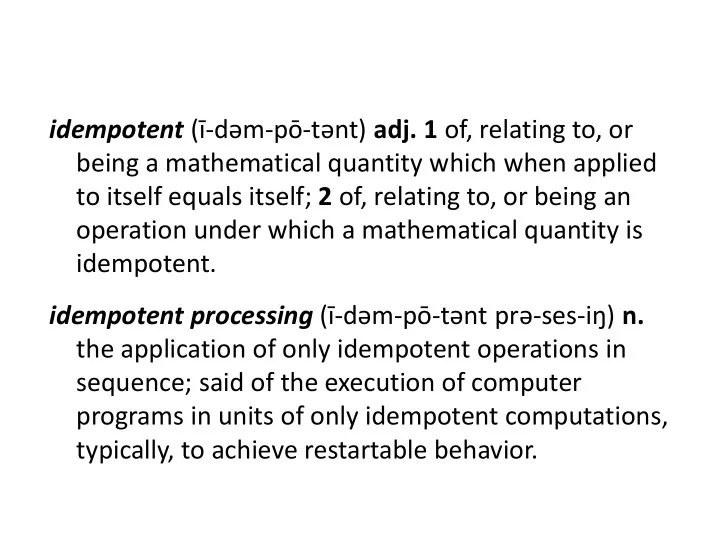

idempotent (ī-dəm-pō-tənt) adj. 1 of, relating to, or being a mathematical quantity which when applied to itself equals itself; 2 of, relating to, or being an operation under which a mathematical quantity is idempotent.
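Written symbolically, the two senses of the definition look like this. The numeric and absolute-value examples are standard illustrations, not taken from the slides:

```latex
% (1) an element e is idempotent under a binary operation \circ
e \circ e = e \qquad \text{e.g. } 0 \cdot 0 = 0,\; 1 \cdot 1 = 1

% (2) a function f is idempotent under composition:
%     applying it twice equals applying it once
(f \circ f)(x) = f(f(x)) = f(x) \quad \text{for all } x
\qquad \text{e.g. } \bigl|\,|x|\,\bigr| = |x|
```

In idempotent processing the same idea is applied to code: a region is idempotent when executing it more than once has the same effect as executing it once.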

PLDI 2012, Beijing

```c
int sum(int *data, int len) {
  int x = 0;
  for (int i = 0; i < len; ++i)
    x += data[i];
  return x;
}
```
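A small sketch of why this function makes a natural idempotence example, assuming the whole function is treated as a single region: `sum` only reads `data` and writes its local `x`, so running it again from the top gives the same answer. The contrasting `increment_all` and the helper `check_sum_reexecution` are hypothetical additions for illustration.

```c
#include <assert.h>

/* Reads data[] and writes only the local x, so re-executing the whole
   function from its entry recomputes the same value: it never overwrites
   one of its own inputs. */
int sum(const int *data, int len) {
  int x = 0;
  for (int i = 0; i < len; ++i)
    x += data[i];
  return x;
}

/* Hypothetical contrast: reads data[i] and then overwrites it. Running it
   again after a partial or complete run changes the result. */
void increment_all(int *data, int len) {
  for (int i = 0; i < len; ++i)
    data[i] += 1;
}

/* Simulates recovery by re-execution: run sum twice on the same input and
   check that the second run agrees with the first. */
void check_sum_reexecution(const int *data, int len) {
  int first = sum(data, len);
  int second = sum(data, len);
  assert(first == second);
}
```

Running `increment_all` twice on `{1, 2, 3}` leaves `{3, 4, 5}` rather than `{2, 3, 4}`, because it overwrites values it has already read.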

[Figure: region identification, showing a "depends on" relationship between clobber antidependences and region boundaries]
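The sketch below shows the two ideas in the figure on a toy trace of reads and writes, under stated assumptions: storage locations are small integer ids, each access is a separate step, and a clobber antidependence is taken to be a write to a location whose first access in the current region was a read (the region overwrites one of its inputs). Because that test is relative to where the region starts, moving a boundary changes which antidependences count as clobbers. `Access`, `has_clobber`, and `greedy_regions` are hypothetical names, and the greedy cut is a naive placement strategy, not the region-identification algorithm presented in this work.

```c
#include <assert.h>
#include <stdbool.h>
#include <string.h>

#define MAX_LOCS 16 /* hypothetical: storage locations are ids 0..15 */

/* One dynamic access to a storage location (register or memory slot). */
typedef struct {
  int loc;
  bool is_write;
} Access;

/* True if acc[begin..end) contains a clobber antidependence: a write to a
   location whose first access inside the region was a read, i.e. the
   region would overwrite one of its own inputs. */
bool has_clobber(const Access *acc, int begin, int end) {
  int first[MAX_LOCS]; /* 0 = untouched, 1 = read first, 2 = written first */
  memset(first, 0, sizeof first);
  for (int i = begin; i < end; ++i) {
    int l = acc[i].loc;
    assert(l >= 0 && l < MAX_LOCS);
    if (first[l] == 0)
      first[l] = acc[i].is_write ? 2 : 1;
    else if (acc[i].is_write && first[l] == 1)
      return true;
  }
  return false;
}

/* Naive greedy placement: grow the current region one access at a time and
   start a new region just before any access that would introduce a clobber
   antidependence. Writes each region's starting index to starts[] and
   returns the number of regions. Assumes n >= 1 and room for n entries. */
int greedy_regions(const Access *acc, int n, int *starts) {
  int count = 0, begin = 0;
  starts[count++] = 0;
  for (int i = 0; i < n; ++i) {
    if (has_clobber(acc, begin, i + 1)) {
      begin = i; /* a one-access region can never contain a clobber */
      starts[count++] = i;
    }
  }
  return count;
}
```

For `x = x + 1` (read `x`, then write `x`) the greedy pass cuts between the read and the write; a trace that writes `x` before reading it needs no cut.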

[Figure: sources of overhead (rough sketch)]

[Figure: percent overhead in instruction count and execution time across benchmark suites; gmean: 7.6% instruction count, 7.7% execution time]

[Figure: dynamic region size (log scale) across benchmark suites for compiler-generated regions; gmean: 28]

[Figure: percent overhead across benchmark suites for idempotence, checkpoint/log, and instruction TMR; gmean: 8.2%, 24.0%, and 30.5% respectively]
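For context, a simplified sketch of the two baseline recovery styles named in the chart, using hypothetical names: checkpoint/log is modeled as an undo log that saves each old value before a store, and instruction TMR (triple modular redundancy) as computing a value three times and taking a majority vote. An idempotent region needs neither mechanism, since on a fault it can simply re-execute from its start. The overhead numbers in the chart come from the authors' evaluation and are not reproduced by this code.

```c
#include <assert.h>
#include <stddef.h>

#define LOG_CAP 64 /* hypothetical fixed capacity of the undo log */

/* Checkpoint/log style recovery (simplified): before each store, record
   the old value so the region's effects can be undone and retried. */
typedef struct {
  int *addr[LOG_CAP];
  int old[LOG_CAP];
  size_t n;
} UndoLog;

void logged_store(UndoLog *undo, int *addr, int val) {
  assert(undo->n < LOG_CAP); /* this sketch does not grow the log */
  undo->addr[undo->n] = addr;
  undo->old[undo->n] = *addr;
  undo->n++;
  *addr = val;
}

/* Restore old values in reverse order of the stores. */
void rollback(UndoLog *undo) {
  while (undo->n > 0) {
    undo->n--;
    *undo->addr[undo->n] = undo->old[undo->n];
  }
}

/* A region that overwrites its inputs needs the log to be retried safely. */
void increment_all_logged(UndoLog *undo, int *data, int len) {
  for (int i = 0; i < len; ++i)
    logged_store(undo, &data[i], data[i] + 1);
}

/* Instruction TMR style (simplified): given three copies of a result,
   keep the majority, masking a single corrupted copy. */
int vote3(int a, int b, int c) {
  return (a == b || a == c) ? a : b;
}
```

The extra work in both baselines happens on every store or every instruction, even when no fault occurs.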

| Technique | Year | Domain |
|---|---|---|
| Sentinel Scheduling | 1992 | Speculative memory re-ordering |
| Fast Mutual Exclusion | 1992 | Uniprocessor mutual exclusion |
| Multi-Instruction Retry | 1995 | Branch and hardware fault recovery |
| Atomic Heap Transactions | 1999 | Atomic memory allocation |
| Reference Idempotency | 2006 | Reducing speculative storage |
| Restart Markers | 2006 | Virtual memory in vector machines |
| Data-Triggered Threads | 2011 | Data-triggered multi-threading |
| Idempotent Processors | 2011 | Hardware simplification for exceptions |
| Encore | 2011 | Hardware fault recovery |
| iGPU | 2012 | GPU exception/speculation support |

[Figure: percent overhead in instruction count and execution time across benchmark suites; gmean: 7.6% and 7.7%; annotation: non-idempotent inner loops + high register pressure]
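The annotation ties the worst cases to non-idempotent inner loops and high register pressure. A hedged illustration of how the two can be linked, assuming a region boundary before every statement of the loop body: an accumulator update such as `x = x + data[i]` reads and then overwrites `x`. One possible remedy, shown here as an assumption and not necessarily what the compiler does, is to unroll by two and alternate between two accumulators, which costs an extra live register. `sum_renamed` is a hypothetical name.

```c
#include <assert.h>

/* Original form: x = x + data[i] reads x and then overwrites it, which is a
   clobber antidependence on x if a region starts at each iteration.

   Renamed form: each statement reads one accumulator and writes the other,
   so neither accumulator is overwritten in a region that read it. The loop
   counter i is still read and then overwritten; a full transformation would
   need to handle it as well, which this sketch leaves out. */
int sum_renamed(const int *data, int len) {
  assert(len >= 0);
  int x0 = 0, x1 = 0;
  int i = 0;
  for (; i + 1 < len; i += 2) {
    x1 = x0 + data[i];     /* reads x0, writes x1 */
    x0 = x1 + data[i + 1]; /* reads x1, writes x0 */
  }
  if (i < len)
    return x0 + data[i]; /* odd length: one element left over */
  return x0;
}
```

The price of the renaming is that `x0` and `x1` are both live across the loop, which adds to register pressure in loops that already use many registers.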

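[Figure: dynamic region size (log scale) across benchmark suites for compiler-generated regions, ideal regions, and ideal regions without outliers; gmean: 28, 116, and 45 respectively; one value exceeds 1,000,000; annotation: limited aliasing information]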
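The annotation points at aliasing as a limit on region size. A small illustration with a hypothetical `scale` function: when `out` and `in` are distinct, re-running the loop gives the same `out[]`; when they alias, each store overwrites an input the loop has just read, so re-execution changes the result. A compiler that cannot rule out the aliasing case has to treat such stores as potential clobbers, which plausibly forces earlier region boundaries.

```c
#include <assert.h>

/* Reads in[] and writes out[]. If out and in never overlap, re-executing
   the loop recomputes the same out[] values, so it is idempotent. If they
   may alias, a store to out[i] can overwrite an element of in[] that the
   loop has already read, and a compiler without precise aliasing
   information must assume that can happen. */
void scale(int *out, const int *in, int len, int k) {
  assert(len >= 0);
  for (int i = 0; i < len; ++i)
    out[i] = in[i] * k;
}
```

With `out == in` and `k = 2`, two runs turn `{1, 2, 3}` into `{4, 8, 12}` instead of `{2, 4, 6}`.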