CHAPTER 9

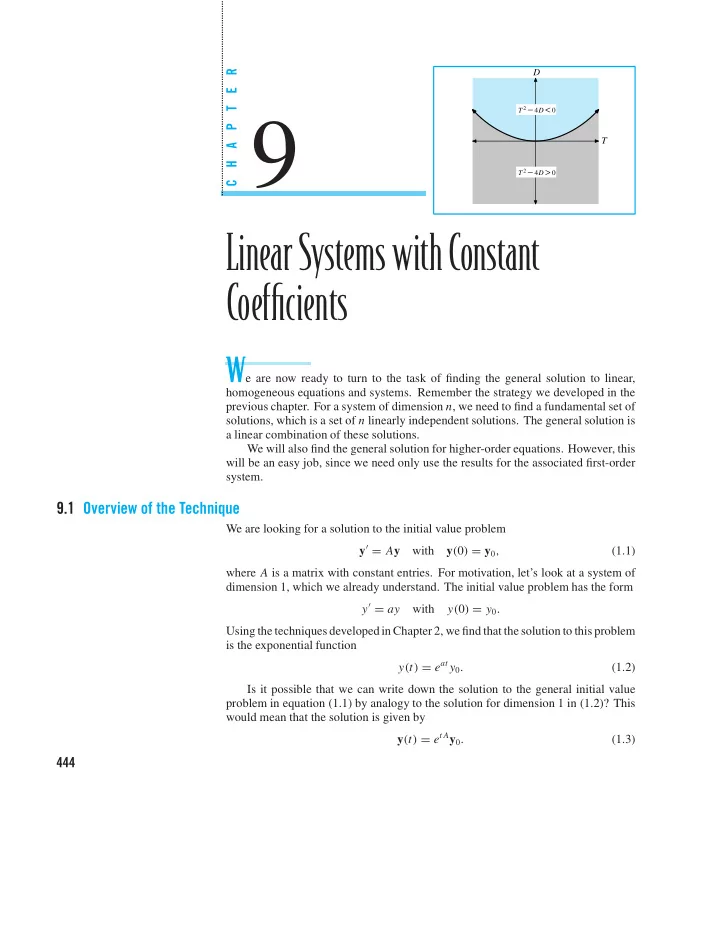

[Figure labels: axes T and D; regions T² − 4D < 0 and T² − 4D > 0.]

Linear Systems with Constant Coefficients

We are now ready to turn to the task of finding the general solution to linear, homogeneous equations and systems. Recall the strategy we developed in the previous chapter. For a system of dimension n, we need to find a fundamental set of solutions, which is a set of n linearly independent solutions. The general solution is a linear combination of these solutions. We will also find the general solution for higher-order equations. This will be an easy job, however, since we need only use the results for the associated first-order system.

9.1 Overview of the Technique

We are looking for a solution to the initial value problem

$$y' = Ay \quad \text{with} \quad y(0) = y_0, \tag{1.1}$$

where A is a matrix with constant entries. For motivation, let’s look at a system of dimension 1, which we already understand. The initial value problem has the form

$$y' = ay \quad \text{with} \quad y(0) = y_0.$$

Using the techniques developed in Chapter 2, we find that the solution to this problem is the exponential function

$$y(t) = e^{at} y_0. \tag{1.2}$$

Is it possible that we can write down the solution to the general initial value problem in equation (1.1) by analogy to the solution for dimension 1 in (1.2)? This would mean that the solution is given by

$$y(t) = e^{tA} y_0. \tag{1.3}$$
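The guess in (1.3) can be tested numerically before any theory is developed. The sketch below is only an illustration and rests on an assumption this section has not yet justified: that e^{tA} may be computed from the same power series as the scalar exponential, I + tA + (tA)²/2! + ⋯, truncated after a fixed number of terms. The function names (`mat_exp`, `candidate_solution`, `residual`) and the sample matrix are hypothetical choices made for this example.

```python
import math


def mat_mul(A, B):
    """Product of two square matrices stored as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def mat_vec(A, v):
    """Product of a square matrix and a vector."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]


def mat_exp(A, t, terms=30):
    """Truncated series I + tA + (tA)^2/2! + ... (ASSUMED meaning of e^{tA})."""
    n = len(A)
    tA = [[t * a for a in row] for row in A]
    total = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    term = [row[:] for row in total]  # holds (tA)^k / k!, starting at k = 0
    for k in range(1, terms):
        term = [[x / k for x in row] for row in mat_mul(term, tA)]
        total = [[s + x for s, x in zip(srow, xrow)]
                 for srow, xrow in zip(total, term)]
    return total


def candidate_solution(A, y0, t):
    """The conjectured solution y(t) = e^{tA} y0 from equation (1.3)."""
    return mat_vec(mat_exp(A, t), y0)


def residual(A, y0, t, h=1e-5):
    """Largest component of |y'(t) - A y(t)|, with y' from a central difference."""
    y_plus = candidate_solution(A, y0, t + h)
    y_minus = candidate_solution(A, y0, t - h)
    derivative = [(p - m) / (2 * h) for p, m in zip(y_plus, y_minus)]
    rhs = mat_vec(A, candidate_solution(A, y0, t))
    return max(abs(d - r) for d, r in zip(derivative, rhs))


if __name__ == "__main__":
    # Sample system (an illustrative choice): y1' = y2, y2' = -y1.
    A = [[0.0, 1.0], [-1.0, 0.0]]
    y0 = [1.0, 0.0]
    print("y(0) =", candidate_solution(A, y0, 0.0))
    print("residual at t = 1:", residual(A, y0, 1.0))
    # For this system the exact solution is (cos t, -sin t).
    print("exact y(1) =", [math.cos(1.0), -math.sin(1.0)])
    print("series y(1) =", candidate_solution(A, y0, 1.0))
```

A small residual for one matrix is evidence, not proof. It suggests only that the analogy with (1.2) is worth pursuing. Passing a 1 × 1 matrix such as `[[a]]` reproduces the scalar solution e^{at} y0.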
