STK-IN4300 Statistical Learning Methods in Data Science

Riccardo De Bin

debin@math.uio.no

STK4030: lecture 2 1/ 38 STK-IN4300 - Statistical Learning Methods in Data Science

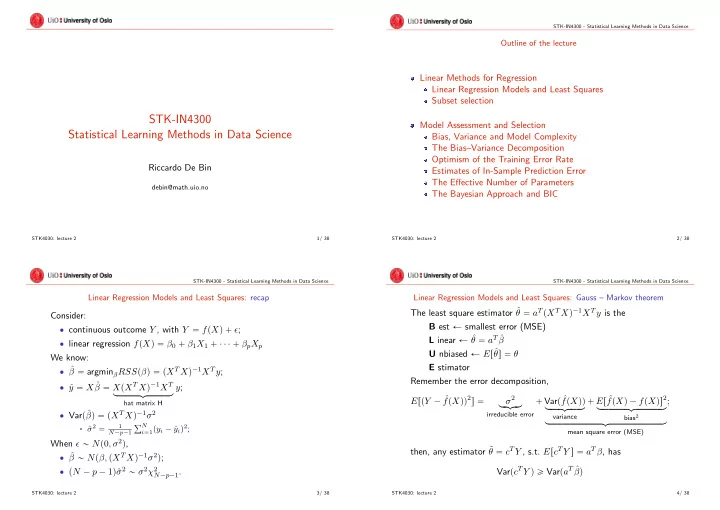

Outline of the lecture

- Linear Methods for Regression
  - Linear Regression Models and Least Squares
  - Subset selection
- Model Assessment and Selection
  - Bias, Variance and Model Complexity
  - The Bias–Variance Decomposition
  - Optimism of the Training Error Rate
  - Estimates of In-Sample Prediction Error
  - The Effective Number of Parameters
  - The Bayesian Approach and BIC


Linear Regression Models and Least Squares: recap

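Consider:

- a continuous outcome $Y$, with $Y = f(X) + \epsilon$;
- linear regression, $f(X) = \beta_0 + \beta_1 X_1 + \dots + \beta_p X_p$.

We know:

- $\hat\beta = \operatorname{argmin}_\beta \mathrm{RSS}(\beta) = (X^T X)^{-1} X^T y$;
- $\hat y = X\hat\beta = \underbrace{X (X^T X)^{-1} X^T}_{\text{hat matrix } H}\, y$;
- $\mathrm{Var}(\hat\beta) = (X^T X)^{-1}\sigma^2$, where
  - $\hat\sigma^2 = \frac{1}{N-p-1} \sum_{i=1}^{N} (y_i - \hat y_i)^2$.

When $\epsilon \sim N(0, \sigma^2)$:

- $\hat\beta \sim N(\beta, (X^T X)^{-1}\sigma^2)$;
- $(N - p - 1)\,\hat\sigma^2 \sim \sigma^2 \chi^2_{N-p-1}$.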
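The recap formulas can be checked numerically. The following is a minimal sketch on simulated data; the sample size, coefficients, noise level and seed are illustrative assumptions, not values from the lecture. It computes $\hat\beta$, the hat matrix $H$, the fitted values and $\hat\sigma^2$ with NumPy.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed, chosen only for reproducibility

# Simulated data (assumption: all values below are illustrative)
N, p = 200, 3
sigma = 0.5
beta_true = np.array([1.0, 2.0, -1.0, 0.5])  # (beta_0, beta_1, ..., beta_p)
X = np.column_stack([np.ones(N), rng.normal(size=(N, p))])  # first column = intercept
y = X @ beta_true + rng.normal(scale=sigma, size=N)

# Least squares estimate: solve the normal equations (X^T X) beta = X^T y
XtX = X.T @ X
beta_hat = np.linalg.solve(XtX, X.T @ y)

# Hat matrix H = X (X^T X)^{-1} X^T and fitted values y_hat = H y
H = X @ np.linalg.solve(XtX, X.T)
y_hat = H @ y

# Variance estimate: sigma2_hat = RSS / (N - p - 1)
resid = y - y_hat
sigma2_hat = (resid @ resid) / (N - p - 1)

# Estimated covariance of beta_hat: (X^T X)^{-1} * sigma2_hat
cov_beta_hat = sigma2_hat * np.linalg.inv(XtX)
std_err = np.sqrt(np.diag(cov_beta_hat))

print("beta_hat  :", beta_hat)
print("std. err. :", std_err)
print("sigma2_hat:", sigma2_hat)
print("trace(H)  :", np.trace(H))  # equals p + 1 up to rounding
```

Forming $H$ explicitly costs $O(N^2)$ memory and is done here only to mirror the slide; in practice `X @ beta_hat` gives the same fitted values.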


Linear Regression Models and Least Squares: Gauss–Markov theorem

The least squares estimator $\hat\theta = a^T (X^T X)^{-1} X^T y$ is the

- Best ← smallest error (MSE)
- Linear ← $\hat\theta = a^T \hat\beta$
- Unbiased ← $\mathrm{E}[\hat\theta] = \theta$
- Estimator

Remember the error decomposition,

$$
\mathrm{E}[(Y - \hat f(X))^2] = \underbrace{\sigma^2}_{\text{irreducible error}} + \underbrace{\underbrace{\mathrm{Var}(\hat f(X))}_{\text{variance}} + \underbrace{\mathrm{E}[\hat f(X) - f(X)]^2}_{\text{bias}^2}}_{\text{mean square error (MSE)}};
$$

then any estimator $\tilde\theta = c^T Y$ such that $\mathrm{E}[c^T Y] = a^T \beta$ has $\mathrm{Var}(c^T Y) \ge \mathrm{Var}(a^T \hat\beta)$.
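The variance inequality can be illustrated numerically. The sketch below assumes uncorrelated errors with common variance, $\mathrm{Var}(y) = \sigma^2 I$, so that $\mathrm{Var}(c^T y) = \sigma^2 c^T c$; the design matrix, the vector `a` and the perturbation `d` are hypothetical choices made only for illustration. A competing linear unbiased estimator is built by adding to the least squares weights a vector `d` with $X^T d = 0$, which keeps $X^T c = a$ and hence $\mathrm{E}[c^T y] = a^T \beta$ for every $\beta$.

```python
import numpy as np

rng = np.random.default_rng(1)  # fixed seed, chosen only for reproducibility

# Illustrative design matrix and target theta = a^T beta (assumptions)
N, p = 50, 2
sigma2 = 1.0
X = np.column_stack([np.ones(N), rng.normal(size=(N, p))])
a = np.array([1.0, 0.5, -2.0])

XtX_inv = np.linalg.inv(X.T @ X)

# Weights of the least squares estimator: theta_hat = c_ls^T y
c_ls = X @ XtX_inv @ a

# Competing weights c_alt = c_ls + d, where d is orthogonal to the columns of X
z = rng.normal(size=N)
d = z - X @ (XtX_inv @ (X.T @ z))  # residual of z after projecting onto col(X)
c_alt = c_ls + d

# Both estimators are unbiased: X^T c = a in each case
print("unbiased (LS) :", np.allclose(X.T @ c_ls, a))
print("unbiased (alt):", np.allclose(X.T @ c_alt, a))

# Variances under Var(y) = sigma2 * I
var_ls = sigma2 * (c_ls @ c_ls)     # equals sigma2 * a^T (X^T X)^{-1} a
var_alt = sigma2 * (c_alt @ c_alt)  # equals var_ls + sigma2 * d^T d

print("Var(a^T beta_hat):", var_ls)
print("Var(c^T y)       :", var_alt)
```

Because `c_ls` lies in the column space of `X` and `d` is orthogonal to it, the cross term vanishes and the extra variance is exactly $\sigma^2 d^T d \ge 0$, which is the argument behind the theorem for this family of competitors.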
