
Lecture 3:

Small-Scale Shared Address Space Multiprocessors & Shared memory programming (OpenMP + POSIX threads)


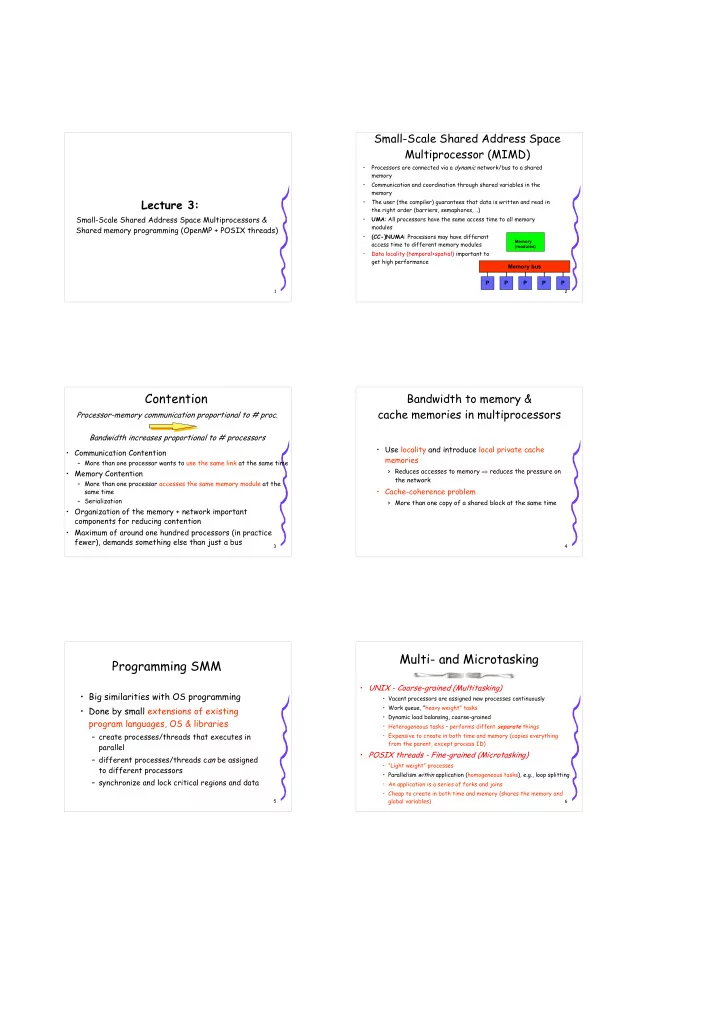

Small-Scale Shared Address Space Multiprocessor (MIMD)

- Processors are connected via a dynamic network/bus to a shared memory

- Communication and coordination through shared variables in the memory

- The user (or the compiler) must guarantee that data is written and read in the right order (barriers, semaphores, ...)

- UMA: All processors have the same access time to all memory modules

- (CC-)NUMA: Processors may have different access times to different memory modules

- Data locality (temporal + spatial) is important to get high performance

Figure: processors (P) connected to the memory (modules) via a memory bus


Contention

- Processor-memory communication is proportional to the number of processors, so the required bandwidth increases in proportion to the number of processors

- Communication contention

– More than one processor wants to use the same link at the same time

- Memory contention

– More than one processor accesses the same memory module at the same time

– Serialization

- The organization of the memory and the network are important components for reducing contention

- Maximum of around one hundred processors (in practice fewer), which demands something more than just a bus


Bandwidth to memory & cache memories in multiprocessors

- Use locality and introduce local private cache memories

> Reduces accesses to memory ⇒ reduces the pressure on the network

- Cache-coherence problem

> More than one copy of a shared block at the same time
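To make "use locality" concrete, the sketch below sums the same matrix in two traversal orders. It is only an illustration under assumptions: `N`, `sum_row_major` and `sum_col_major` are hypothetical names, and the actual speed difference depends on the cache sizes of the machine. Both functions return the same value; the row-by-row version touches adjacent addresses and therefore reuses each cache block it fetches, while the column-by-column version jumps `N * sizeof(int)` bytes between accesses.

```c
#include <stddef.h>

#define N 256   /* hypothetical matrix dimension */

/* Row by row: consecutive accesses hit adjacent addresses (good spatial locality). */
long sum_row_major(int a[N][N])
{
    long sum = 0;
    for (size_t i = 0; i < N; i++)
        for (size_t j = 0; j < N; j++)
            sum += a[i][j];
    return sum;
}

/* Column by column: consecutive accesses are one full row apart,
 * so each access is likely to touch a different cache block. */
long sum_col_major(int a[N][N])
{
    long sum = 0;
    for (size_t j = 0; j < N; j++)
        for (size_t i = 0; i < N; i++)
            sum += a[i][j];
    return sum;
}
```

On a multiprocessor, fewer cache misses per processor also means fewer requests on the shared bus, which is the pressure reduction the slide refers to.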


Programming SMM

- Big similarities with OS programming

- Done by small extensions of existing programming languages, OS & libraries

– Create processes/threads that execute in parallel

– Different processes/threads can be assigned to different processors

– Synchronize and lock critical regions and data
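The three steps above can be sketched with POSIX threads. This is a minimal example under assumptions: `NUM_THREADS`, `INCREMENTS`, `increment` and `count_in_parallel` are illustrative names, and the mapping of threads to processors is left to the operating system scheduler. Threads are created, each one updates a shared counter inside a mutex-protected critical region, and the creator joins them before reading the result.

```c
#include <pthread.h>

#define NUM_THREADS 4        /* hypothetical thread count */
#define INCREMENTS  100000   /* hypothetical work per thread */

static long counter = 0;     /* shared data */
static pthread_mutex_t counter_lock = PTHREAD_MUTEX_INITIALIZER;

static void *increment(void *arg)
{
    (void)arg;
    for (int i = 0; i < INCREMENTS; i++) {
        pthread_mutex_lock(&counter_lock);    /* enter critical region */
        counter++;
        pthread_mutex_unlock(&counter_lock);  /* leave critical region */
    }
    return NULL;
}

/* Creates the threads, waits for all of them, and returns the final count. */
long count_in_parallel(void)
{
    pthread_t threads[NUM_THREADS];
    int created = 0;

    counter = 0;
    while (created < NUM_THREADS &&
           pthread_create(&threads[created], NULL, increment, NULL) == 0)
        created++;
    for (int i = 0; i < created; i++)
        pthread_join(threads[i], NULL);
    return counter;
}
```

Removing the lock and unlock calls makes `counter++` a data race, and the returned value would typically be lower than `NUM_THREADS * INCREMENTS`.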


Multi- and Microtasking

- UNIX processes: coarse-grained (multitasking)

– Vacant processors are assigned new processes continuously

– Work queue, "heavy weight" tasks

– Dynamic load balancing, coarse-grained

– Heterogeneous tasks that perform different, separate things

– Expensive to create in both time and memory (copies everything from the parent, except the process ID)

- POSIX threads: fine-grained (microtasking)

– "Light weight" processes

– Parallelism within an application (homogeneous tasks), e.g., loop splitting

– An application is a series of forks and joins

– Cheap to create in both time and memory (shares the memory and global variables)
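Loop splitting in the fork-join style can be sketched with an OpenMP directive. The function name `dot` is illustrative, and the sketch assumes a compiler with OpenMP enabled (for example the `-fopenmp` flag in GCC); without it the pragma is ignored and the loop runs serially with the same result. At the directive the program forks a team of threads, the iterations are divided among them, and the threads join again at the end of the loop.

```c
#include <stddef.h>

/* Dot product of two vectors of length n.
 * Fork: the iterations are split among the threads of the team.
 * Join: the per-thread partial sums are combined by the reduction clause. */
double dot(const double *x, const double *y, size_t n)
{
    double sum = 0.0;

    #pragma omp parallel for reduction(+:sum)
    for (long i = 0; i < (long)n; i++)
        sum += x[i] * y[i];

    return sum;
}
```

The `reduction(+:sum)` clause gives each thread a private copy of `sum`, so no explicit lock is needed around the accumulation.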