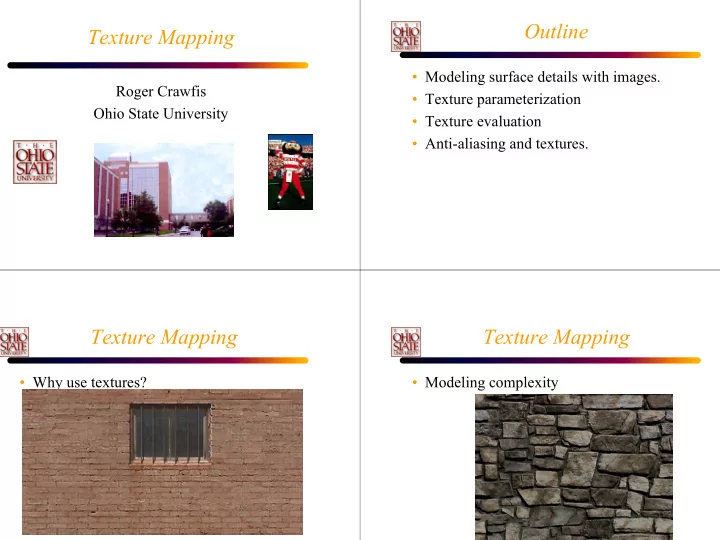

Texture Mapping

Roger Crawfis, Ohio State University

Outline

- Modeling surface details with images

- Texture parameterization

- Texture evaluation

- Anti-aliasing and textures

Texture Mapping

- Why use textures?


- Modeling complexity


(Example images: [digital] Texturing & Painting, 2002; Jason Bryan; Camuto 1998; Lao 1998)


Works well for roughly spherical shapes

(Images: Watt)

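Screen-space (linear) interpolation between endpoints 1 and 2 with weights $A$ and $B$:

$$u = \frac{A}{A+B}\,u_1 + \frac{B}{A+B}\,u_2 = \frac{A\,u_1 + B\,u_2}{A+B}$$

$$w = \frac{A}{A+B}\,w_1 + \frac{B}{A+B}\,w_2 \quad (= 1 \text{ when } w_1 = w_2 = 1)$$

Perspective-correct interpolation with screen-space weights $a$ and $b$: interpolate $u/w$ and $1/w$ linearly, then divide:

$$u = \frac{a\,u_1/w_1 + b\,u_2/w_2}{a/w_1 + b/w_2}$$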
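Because $u/w$ and $1/w$ vary linearly in screen space, the perspective-correct value is $u = (a\,u_1/w_1 + b\,u_2/w_2)/(a/w_1 + b/w_2)$. A minimal C sketch, assuming `t` is the screen-space parameter along the span (so $a = 1 - t$, $b = t$) and `w1`, `w2` are the endpoints' homogeneous w values; both function names are hypothetical.

```c
#include <assert.h>

/* Hypothetical helper: naive screen-space interpolation,
 * correct only when both endpoints have the same w. */
double lerp_screen(double u1, double u2, double t)
{
    return (1.0 - t) * u1 + t * u2;
}

/* Hypothetical helper: perspective-correct interpolation.
 * u1, u2 : texture coordinate at the two endpoints
 * w1, w2 : homogeneous w at the two endpoints
 * t      : screen-space parameter in [0, 1]; a = 1 - t, b = t */
double lerp_perspective(double u1, double w1, double u2, double w2, double t)
{
    assert(w1 != 0.0 && w2 != 0.0);
    double a = 1.0 - t, b = t;
    /* u/w and 1/w are linear in screen space, so interpolate those... */
    double num = a * (u1 / w1) + b * (u2 / w2);
    double den = a * (1.0 / w1) + b * (1.0 / w2);
    /* ...and divide to recover u. */
    return num / den;
}
```

With u1 = 0 at w1 = 1 and u2 = 1 at w2 = 3, the screen-space midpoint gets u = 0.25 instead of the naive 0.5: the nearer endpoint covers more of the screen, so more of the span maps close to it.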

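glBindTexture (GL_TEXTURE_2D, 13); /* make texture object 13 current so the calls below apply to it */
glTexParameteri (GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_LINEAR); /* only level 0 is loaded; the default minification filter expects mip-maps */
glTexImage2D (GL_TEXTURE_2D, 0, GL_RGB, imageWidth, imageHeight, 0, GL_RGB, GL_UNSIGNED_BYTE, imageData);

glEnable (GL_TEXTURE_2D); /* fixed-function texturing is off by default */
glBindTexture (GL_TEXTURE_2D, 13);
glBegin (GL_QUADS);
    glTexCoord2f (0.0, 0.0); glVertex3f (0.0, 0.0, 0.0);
    glTexCoord2f (1.0, 0.0); glVertex3f (10.0, 0.0, 0.0);
    glTexCoord2f (1.0, 1.0); glVertex3f (10.0, 10.0, 0.0);
    glTexCoord2f (0.0, 1.0); glVertex3f (0.0, 10.0, 0.0);
glEnd ();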

– GL_S, GL_T, GL_R, GL_Q

– GL_TEXTURE_GEN_MODE, GL_OBJECT_PLANE, GL_EYE_PLANE

– GL_OBJECT_LINEAR, GL_EYE_LINEAR, GL_SPHERE_MAP

– Useful for placing text on the screen.

(Images: Lastra; Crawfis 1991)

$$N' = N + D$$

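Intensities $I_A$, $I_B$, $I_C$ (perhaps RGB triplets):

$$rI_B = (1-K_B)\,I_B + K_B\,I_C$$

$$rI_A = (1-K_A)\,I_A + K_A\left[(1-K_B)\,I_B + K_B\,I_C\right] \qquad \text{(A to B to C)}$$

$$rI_A = (1-0.2)(1) + (0.2)\left[(1-0.5)(0.5) + (0.5)(1)\right] = 0.95$$

$$rI_B = (1-0.5)(0.5) + (0.5)\left[(1-0.2)(1) + (0.2)(1)\right] = 0.75 \qquad \text{(B to A to C)}$$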
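Every step in the chain has the form (1 - K) I + K · (what is reflected), so nesting one helper reproduces the worked numbers. A minimal sketch; `reflect_mix` is a hypothetical name, it handles one channel (call it per channel for RGB), and the values K_A = 0.2, K_B = 0.5, I_A = 1, I_B = 0.5, I_C = 1 are taken from the example above.

```c
#include <assert.h>

/* Hypothetical helper: blend an object's own intensity with the
 * intensity it reflects, using weight k in [0, 1]:
 * result = (1 - k) * own + k * reflected. */
double reflect_mix(double own, double k, double reflected)
{
    assert(k >= 0.0 && k <= 1.0);
    return (1.0 - k) * own + k * reflected;
}
```

A to B to C is `reflect_mix(1.0, 0.2, reflect_mix(0.5, 0.5, 1.0))`, about 0.95; B to A to C is `reflect_mix(0.5, 0.5, reflect_mix(1.0, 0.2, 1.0))`, about 0.75. The order of the chain changes the result.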


Mip-map levels: 256 pixels, then 128, 64, 32, 16, 8, 4, 2, 1 (each level halves the resolution). Note: this requires only 1/3 more texture memory: $1/4 + 1/16 + 1/64 + \cdots = 1/3$.
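Summing the levels directly confirms the one-third overhead. A minimal sketch, assuming a square power-of-two base level; `mipmap_texels` is a hypothetical name.

```c
#include <assert.h>

/* Hypothetical helper: total texels in a square mip-map pyramid whose
 * base level is size x size (size a power of two), down to 1 x 1. */
unsigned long mipmap_texels(unsigned long size)
{
    assert(size > 0 && (size & (size - 1)) == 0); /* power of two */
    unsigned long total = 0;
    for (;;) {
        total += size * size;
        if (size == 1)
            break;
        size /= 2; /* each level halves both dimensions */
    }
    return total;
}
```

For a 256 × 256 base, the pyramid holds 87,381 texels against 65,536 for the base alone: 21,845 extra, which is (65,536 − 1)/3, just under one third.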


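Isotropic mip-map level selection, with $(u_x, v_x)$ the change in texture coordinates across a row (one pixel step in $x$) and $(u_y, v_y)$ the change going down a column (one pixel step in $y$):

$$\rho = \max\left(\sqrt{u_x^2 + v_x^2},\ \sqrt{u_y^2 + v_y^2}\right), \qquad d = \log_2 \rho$$

The level $d$ selects the equivalent mip-map.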

Compression in u is 1.7; compression in v is 6.8. Determine weights from each sample.

[Figure: pixel mapped into texture space; corners (u1,v1), (u2,v2), (u3,v3), (u4,v4)]

– Color = SAT(u3,v3) - SAT(u4,v4) - SAT(u2,v2) + SAT(u1,v1)

– Color = Color / ((u3 - u1) * (v3 - v1))
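A minimal summed-area-table sketch in C for a tiny single-channel texture. Assumptions: integer texel corners, a box covering texels with x1 < x ≤ x3 and y1 < y ≤ y3, so (x3, y3) plays the role of (u3, v3), (x1, y1) of (u1, v1), and the two mixed corners (x1, y3) and (x3, y1) of (u2, v2) and (u4, v4). The sizes `NX`, `NY` and all function names are hypothetical.

```c
#include <assert.h>

#define NX 4 /* hypothetical texture width  */
#define NY 3 /* hypothetical texture height */

/* sat[y][x] = sum of tex[j][i] over all 0 <= i <= x, 0 <= j <= y. */
void build_sat(const unsigned char tex[NY][NX], unsigned long sat[NY][NX])
{
    for (int y = 0; y < NY; ++y) {
        unsigned long row = 0; /* running sum along row y */
        for (int x = 0; x < NX; ++x) {
            row += tex[y][x];
            sat[y][x] = row + (y > 0 ? sat[y - 1][x] : 0);
        }
    }
}

/* SAT lookup; an index of -1 stands for the empty region before the texture. */
static unsigned long sat_at(unsigned long sat[NY][NX], int x, int y)
{
    return (x < 0 || y < 0) ? 0 : sat[y][x];
}

/* Average over texels x1 < x <= x3, y1 < y <= y3: four lookups and one
 * divide, regardless of box size. Unsigned wraparound in the intermediate
 * terms is harmless because the final sum is non-negative. */
double box_average(unsigned long sat[NY][NX], int x1, int y1, int x3, int y3)
{
    assert(x1 < x3 && y1 < y3);
    unsigned long sum = sat_at(sat, x3, y3) - sat_at(sat, x1, y3)
                      - sat_at(sat, x3, y1) + sat_at(sat, x1, y1);
    return (double)sum / ((double)(x3 - x1) * (double)(y3 - y1));
}
```

For the texture rows {1, 2, 3, 4}, {5, 6, 7, 8}, {9, 10, 11, 12}, the box from (0, 0) to (2, 2) covers 6, 7, 10, 11, and `box_average` returns 34 / 4 = 8.5.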

– For 8-bit colors, the maximum SAT value is 255 * nx * ny.

– 32-bit entries can therefore handle a 4K × 4K (4096 × 4096) texture with 8-bit values.

– RGB images thus need 12 bytes per pixel (three 32-bit entries).
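The storage requirement follows from the largest possible entry, 255 * nx * ny. A minimal sketch that counts the bits needed for it; `sat_bits_needed` is a hypothetical name.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical helper: bits needed to store the largest summed-area-table
 * entry, 255 * nx * ny, for an nx x ny texture with 8-bit channels. */
int sat_bits_needed(uint64_t nx, uint64_t ny)
{
    assert(nx > 0 && ny > 0);
    uint64_t max_sum = 255u * nx * ny; /* every texel at full intensity */
    int bits = 0;
    while (max_sum > 0) { /* count significant bits */
        ++bits;
        max_sum >>= 1;
    }
    return bits;
}
```

A 4096 × 4096 texture needs exactly 32 bits (255 · 4096² = 4,278,190,080 < 2³²), so 32-bit entries just suffice; an 8192 × 8192 texture would need 34 bits.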
