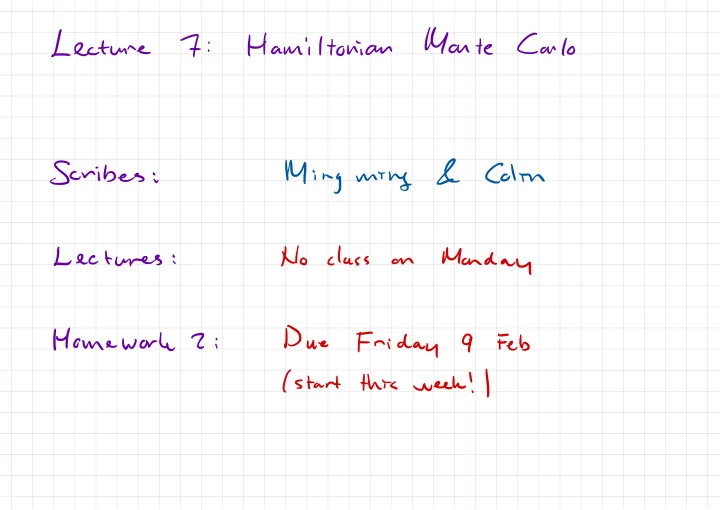

SLIDE 1

Lecture 7: Hamiltonian Monte Carlo

Scribes: Ming mthg & Colin

Lectures: No class - n