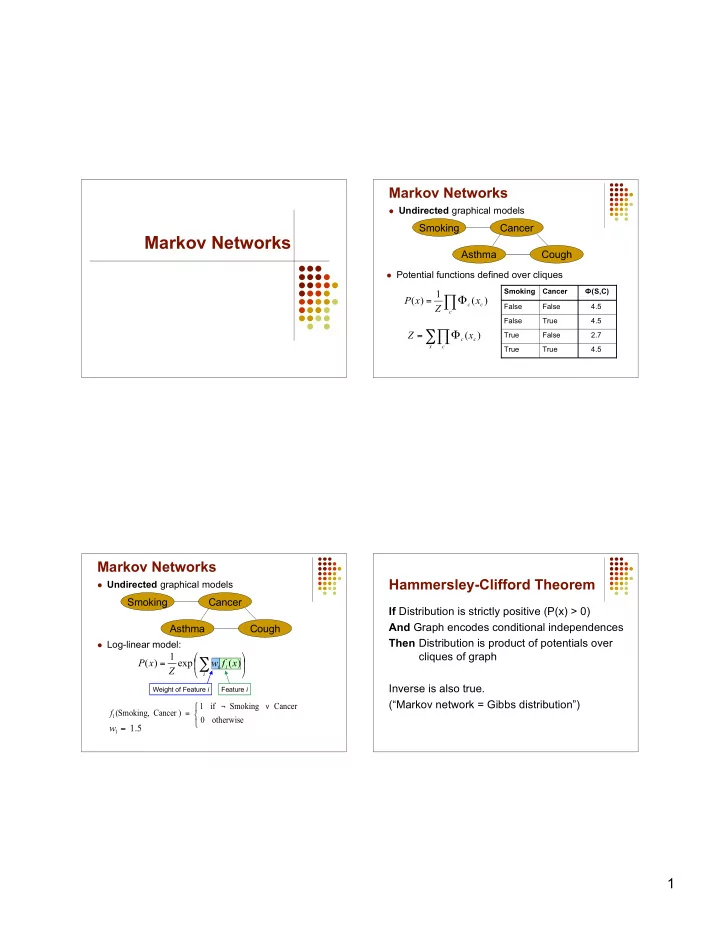

Markov Networks

Undirected graphical models

Figure: undirected graph over the variables Smoking, Cancer, Asthma, and Cough.

Potential functions defined over cliques

| Smoking | Cancer | Φ(S,C) |
|---------|--------|--------|
| False   | False  | 4.5    |
| False   | True   | 4.5    |
| True    | False  | 2.7    |
| True    | True   | 4.5    |

$$P(x) = \frac{1}{Z} \prod_c \Phi_c(x_c)$$

$$Z = \sum_x \prod_c \Phi_c(x_c)$$
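To make the normalization concrete, here is a minimal Python sketch that evaluates the joint distribution by brute-force enumeration. It assumes a reduced network containing only the Smoking and Cancer variables and the single clique potential Φ(S,C) from the slide; the full four-variable network would need potentials that the slide does not give. The names `PHI_SC`, `unnormalized`, `partition_function`, and `joint` are illustrative, not from the source.

```python
from itertools import product

# Potential values for the (Smoking, Cancer) clique, keyed as (smoking, cancer).
# Values are taken from the slide's table for Phi(S, C).
PHI_SC = {
    (False, False): 4.5,
    (False, True): 4.5,
    (True, False): 2.7,
    (True, True): 4.5,
}


def unnormalized(smoking: bool, cancer: bool) -> float:
    """Product of clique potentials; this reduced model has a single clique."""
    return PHI_SC[(smoking, cancer)]


def partition_function() -> float:
    """Z: sum of the unnormalized score over every joint assignment."""
    return sum(unnormalized(s, c) for s, c in product([False, True], repeat=2))


def joint(smoking: bool, cancer: bool) -> float:
    """P(x) = (1/Z) * product of clique potentials."""
    return unnormalized(smoking, cancer) / partition_function()


# Print the full joint distribution of the reduced two-variable model.
for s, c in product([False, True], repeat=2):
    print(f"Smoking={s!s:5} Cancer={c!s:5} P={joint(s, c):.4f}")
```

Enumeration costs time exponential in the number of variables, so this only illustrates the definition of Z on a tiny model.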

Markov Networks

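Undirected graphical models

Log-linear model:

$$P(x) = \frac{1}{Z} \exp\left( \sum_i w_i f_i(x) \right)$$

where $w_i$ is the weight of feature $i$ and $f_i$ is feature $i$.

Example feature and weight:

$$f_1(\text{Smoking}, \text{Cancer}) = \begin{cases} 1 & \text{if } \neg\text{Smoking} \lor \text{Cancer} \\ 0 & \text{otherwise} \end{cases} \qquad w_1 = 1.5$$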
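The log-linear form can be evaluated the same way. The sketch below assumes a model with only the Smoking and Cancer variables and the single feature from the slide, read as "1 if ¬Smoking ∨ Cancer, 0 otherwise" with weight 1.5; the names `f1`, `FEATURES`, `score`, and `log_linear_joint` are hypothetical.

```python
import math
from itertools import product


def f1(smoking: bool, cancer: bool) -> float:
    """Feature from the slide: 1 if (not Smoking) or Cancer, else 0."""
    return 1.0 if (not smoking) or cancer else 0.0


# (weight, feature) pairs; only w_1 = 1.5 with f_1 is given on the slide.
FEATURES = [(1.5, f1)]


def score(smoking: bool, cancer: bool) -> float:
    """Unnormalized score exp(sum_i w_i * f_i(x))."""
    return math.exp(sum(w * f(smoking, cancer) for w, f in FEATURES))


def log_linear_joint() -> dict:
    """Normalize the scores over all assignments to get P(x)."""
    states = list(product([False, True], repeat=2))
    z = sum(score(s, c) for s, c in states)
    return {(s, c): score(s, c) / z for s, c in states}


# Print the distribution; keys are (smoking, cancer).
for (s, c), p in log_linear_joint().items():
    print(f"Smoking={s!s:5} Cancer={c!s:5} P={p:.4f}")
```

Under these assumptions the one state that violates the feature (Smoking true, Cancer false) receives score exp(0) = 1, while the other three receive exp(1.5), so the violating state is less probable but not impossible.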