SLIDE 1

RAID

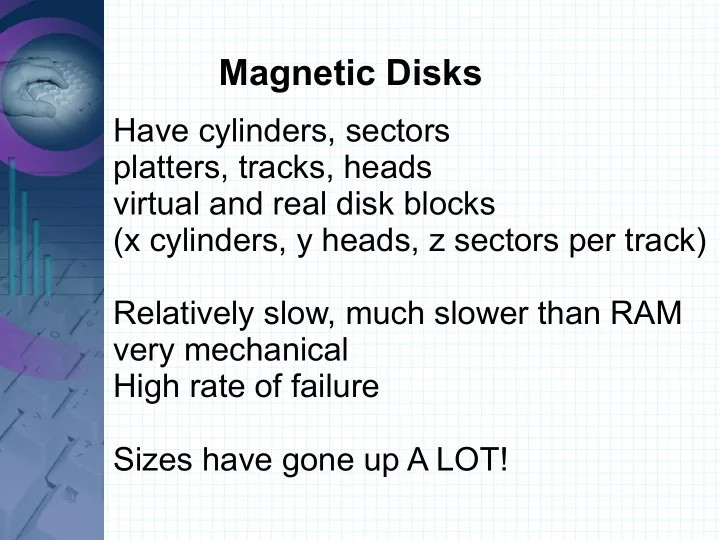

Redundant Array of Inexpensive (or Independent) Disks:

- improve performance by using multiple disks in parallel

- make several disks appear as one

Disks also have a tendency to fail:

- the more disks there are, the more likely a failure becomes

- providing more reliability would be