Limit Distributions for Smooth Total Variation and $\chi^2$-Divergence in High Dimensions

Ziv Goldfeld and Kengo Kato, Cornell University
The 2020 International Symposium on Information Theory, June 2020

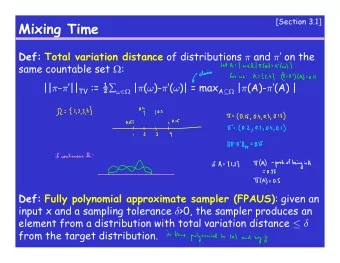

Statistical Distances

Definition: Measure discrepancy between probability distributions
- $\delta : \mathcal{P}(\mathbb{R}^d) \times \mathcal{P}(\mathbb{R}^d) \to [0, \infty)$ s.t. $\delta(P, Q) = 0 \iff P = Q$
- If symmetric and $\delta(P, Q) \le \delta(P, R) + \delta(R, Q)$, then $\delta$ is a metric

Popular examples:
- $f$-divergence: $D_f(P \| Q) := \mathbb{E}_Q\left[ f\left( \frac{dP}{dQ} \right) \right]$, convex $f : \mathbb{R} \to [0, \infty)$ (KL divergence, total variation, $\chi^2$-divergence, etc.)
- $p$-Wasserstein distance: $W_p(P, Q) := \inf_{\pi \in \Pi(P, Q)} \left( \mathbb{E}_\pi\left[ \|X - Y\|^p \right] \right)^{1/p}$, where $\Pi(P, Q)$ is the set of couplings of $P, Q$
- Integral probability metrics: $\gamma_{\mathcal{F}}(P, Q) := \sup_{f \in \mathcal{F}} \mathbb{E}_P[f] - \mathbb{E}_Q[f]$ ($W_1$, TV, MMD, Dudley, Sobolev)
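The $f$-divergence definition above can be made concrete on a finite alphabet, where $dP/dQ$ is just a ratio of probability vectors. The following is a minimal sketch, not taken from the talk: the function names and the example vectors `p` and `q` are illustrative assumptions, and it assumes `q` is strictly positive. For KL it uses $f(t) = t \log t - t + 1$, which is nonnegative and gives the usual KL divergence when both inputs sum to one.

```python
import numpy as np

def f_divergence(p, q, f):
    """D_f(P||Q) = E_Q[f(dP/dQ)] for discrete P, Q (assumes q > 0 everywhere)."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    ratio = p / q                      # the density ratio dP/dQ on a finite alphabet
    return float(np.sum(q * f(ratio)))

def tv_f(t):
    # f(t) = |t - 1| / 2 gives total variation
    return 0.5 * np.abs(t - 1.0)

def chi2_f(t):
    # f(t) = (t - 1)^2 gives the chi-squared divergence
    return (t - 1.0) ** 2

def kl_f(t):
    # f(t) = t log t - t + 1, with the convention 0 log 0 = 0
    safe_t = np.where(t > 0, t, 1.0)
    return t * np.log(safe_t) - t + 1.0

# Illustrative (hypothetical) distributions on a three-letter alphabet.
p = [0.5, 0.3, 0.2]
q = [0.25, 0.25, 0.5]

tv = f_divergence(p, q, tv_f)
chi2 = f_divergence(p, q, chi2_f)
kl = f_divergence(p, q, kl_f)
print(tv, chi2, kl)
```

All three quantities vanish when `p` equals `q`, matching the requirement $\delta(P, Q) = 0 \iff P = Q$, and the values for this example satisfy Pinsker's inequality $\mathrm{TV} \le \sqrt{\mathrm{KL}/2}$.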

Statistical Distances: Why are they useful?

Historically: Probability theory, mathematical statistics, information theory
- Topological and metric structure of $\mathcal{P}(\mathbb{R}^d)$
- Inequalities (Pinsker, Talagrand, joint-range, etc.)
- Hypothesis testing, goodness-of-fit tests, etc.
- Fundamental performance limits of operational problems
- ...

Recently: Variety of applications in machine learning
- Implicit generative modeling
- Barycenter computation
- Anomaly detection, model ensembling, etc.

Implicit (Latent Variable) Generative Models

Goal: Learn a model $Q_\theta \approx P$ to approximate the data distribution

Method: Complicated transformation of a simple latent variable
- Latent variable $Z \sim Q_Z \in \mathcal{P}(\mathbb{R}^p)$, $p \ll d$
- Expand $Z$ to $\mathbb{R}^d$ space via a (random) transformation $Q^{(\theta)}_{X|Z}$
- $\Rightarrow$ Generative model: $Q_\theta(\cdot) := \int_{\mathbb{R}^p} Q^{(\theta)}_{X|Z}(\cdot \,|\, z) \, dQ_Z(z)$

[Figure: the latent space mapped to the target space]

Minimum Distance Estimation: Solve $\theta^\star \in \operatorname{argmin}_\theta \delta(P, Q_\theta)$
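Sampling from such a model amounts to drawing a latent $Z$ and pushing it through the (random) transformation. The sketch below is an illustration under strong assumptions that are not in the talk: the latent law $Q_Z$ is taken to be a standard Gaussian on $\mathbb{R}^p$, and $Q^{(\theta)}_{X|Z}$ is a linear map with additive Gaussian noise, with the hypothetical parameter `theta` being a $d \times p$ matrix. In practice the transformation is typically a neural network.

```python
import numpy as np

def sample_model(theta, n, rng, noise_std=0.1):
    """Draw n samples from Q_theta by transforming latent draws.

    Assumptions (illustrative only): Z ~ N(0, I_p) and
    X | Z = z ~ N(theta @ z, noise_std^2 * I_d), with theta of shape (d, p).
    """
    d, p = theta.shape
    z = rng.standard_normal((n, p))                  # latent variables Z_1, ..., Z_n
    noise = noise_std * rng.standard_normal((n, d))  # randomness of the transformation
    return z @ theta.T + noise                       # samples in the target space R^d

rng = np.random.default_rng(0)
d, p = 50, 2                                         # p << d, as on the slide
theta = rng.standard_normal((d, p))                  # hypothetical model parameter
samples = sample_model(theta, n=1000, rng=rng)
print(samples.shape)
```

Averaging over the latent draws is what the integral $\int Q^{(\theta)}_{X|Z}(\cdot \,|\, z) \, dQ_Z(z)$ expresses: the samples are distributed according to the mixture $Q_\theta$ even though no closed-form density is ever written down.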

Implicit (Latent Variable) Generative Models

Goal: Solve $\mathrm{OPT} := \inf_\theta \delta(P, Q_\theta)$ exactly (find $\theta^\star$)

Estimation: We don't have $P$, only data
- $\{X_i\}_{i=1}^n$ are i.i.d. samples from $P \in \mathcal{P}(\mathbb{R}^d)$
- Empirical distribution $P_n := \frac{1}{n} \sum_{i=1}^n \delta_{X_i}$
- $\Rightarrow$ Inherently we work with $\delta(P_n, Q_\theta)$
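A toy version of minimum distance estimation with the empirical distribution can be sketched in one dimension. Everything specific here is an assumption made for illustration, not the setting analyzed in the talk: the data law is $P = N(2, 1)$, the model is the location family $Q_\theta = N(\theta, 1)$ generated as $\theta + Z$ with latent $Z \sim N(0, 1)$, the distance is $W_1$ (one of the examples listed earlier), and $Q_\theta$ is itself replaced by samples, which adds a second layer of approximation. On the real line, $W_1$ between two empirical distributions with equally many atoms reduces to the mean absolute difference of sorted samples.

```python
import numpy as np

def w1_empirical_1d(x, y):
    """W_1 between two empirical distributions on R with equally many atoms."""
    x = np.sort(np.asarray(x, dtype=float))
    y = np.sort(np.asarray(y, dtype=float))
    if x.shape != y.shape:
        raise ValueError("this sketch assumes equal sample sizes")
    # In 1D the optimal coupling matches order statistics.
    return float(np.mean(np.abs(x - y)))

rng = np.random.default_rng(0)
n = 2000
data = 2.0 + rng.standard_normal(n)   # X_1, ..., X_n i.i.d. from P = N(2, 1)
z = rng.standard_normal(n)            # latent draws, reused for every theta

# Grid search over theta for the minimizer of delta(P_n, Q_theta),
# with Q_theta approximated by the samples theta + z.
thetas = np.linspace(0.0, 4.0, 41)
dists = [w1_empirical_1d(data, theta + z) for theta in thetas]
theta_hat = float(thetas[int(np.argmin(dists))])
print(theta_hat)
```

The estimate `theta_hat` fluctuates around the true location because $P_n$ is random, which is why the behavior of $\delta(P_n, Q_\theta)$ as $n$ grows matters for this approach.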
