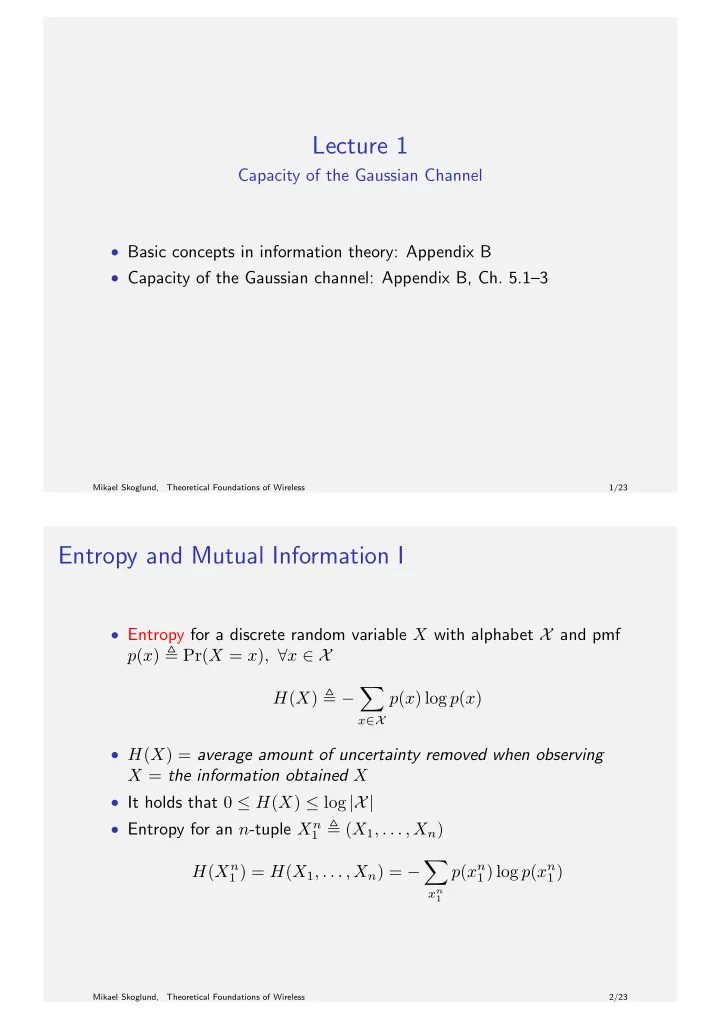

Lecture 1

Capacity of the Gaussian Channel

- Basic concepts in information theory: Appendix B

- Capacity of the Gaussian channel: Appendix B, Ch. 5.1–3

Mikael Skoglund, Theoretical Foundations of Wireless 1/23

Entropy and Mutual Information I

- Entropy for a discrete random variable $X$ with alphabet $\mathcal{X}$ and pmf $p(x) \triangleq \Pr(X = x)$, $\forall x \in \mathcal{X}$:

  $$H(X) \triangleq -\sum_{x \in \mathcal{X}} p(x) \log p(x)$$

- $H(X)$ = the average amount of uncertainty removed when observing $X$ = the average information obtained by observing $X$

- It holds that $0 \le H(X) \le \log |\mathcal{X}|$

- Entropy for an $n$-tuple $X_1^n \triangleq (X_1, \ldots, X_n)$:

  $$H(X_1^n) = H(X_1, \ldots, X_n) = -\sum_{x_1^n} p(x_1^n) \log p(x_1^n)$$
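The definitions above can be checked numerically with a short sketch. It assumes logarithms to base 2 (entropy in bits), which the slide leaves unspecified, and uses the convention $0 \log 0 = 0$. The helper names `entropy_bits` and `iid_joint_pmf` are hypothetical, and building the $n$-tuple pmf from i.i.d. components is an assumption made only for illustration; the definition of $H(X_1^n)$ holds for any joint pmf $p(x_1^n)$.

```python
import math
from itertools import product


def entropy_bits(pmf):
    """H(X) = -sum_x p(x) log2 p(x); zero-probability symbols are skipped (0 log 0 := 0)."""
    return -sum(p * math.log2(p) for p in pmf.values() if p > 0)


def iid_joint_pmf(pmf, n):
    """Joint pmf p(x_1^n) of X_1^n = (X_1, ..., X_n), ASSUMING the X_i are i.i.d. with pmf `pmf`."""
    joint = {}
    for xs in product(pmf, repeat=n):  # every n-tuple x_1^n over the alphabet
        prob = 1.0
        for x in xs:
            prob *= pmf[x]  # independence: p(x_1^n) is the product of the marginals
        joint[xs] = prob
    return joint


# Example alphabet {a, b, c} with a non-uniform pmf.
pmf = {"a": 0.5, "b": 0.25, "c": 0.25}
h = entropy_bits(pmf)  # 0.5*1 + 0.25*2 + 0.25*2 = 1.5 bits

# Bound check: 0 <= H(X) <= log2 |X|.
assert 0.0 <= h <= math.log2(len(pmf))

# The uniform pmf attains the upper bound log2 |X|.
uniform = {x: 1 / 3 for x in "abc"}
h_uniform = entropy_bits(uniform)

# Entropy of the 3-tuple X_1^3, computed directly from the joint-entropy sum.
h_joint = entropy_bits(iid_joint_pmf(pmf, 3))

print(h, h_uniform, math.log2(3), h_joint)
```

Under the i.i.d. assumption the joint entropy sum evaluates to $n H(X)$, here $3 \times 1.5 = 4.5$ bits up to floating-point rounding; for dependent components it is in general smaller than $\sum_i H(X_i)$.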
