Learning Visual Distance Function for Identification from one Example

Eric Nowak and Frederic Jurie
Bertin Technologies / CNRS
LEAR Group – INRIA - France


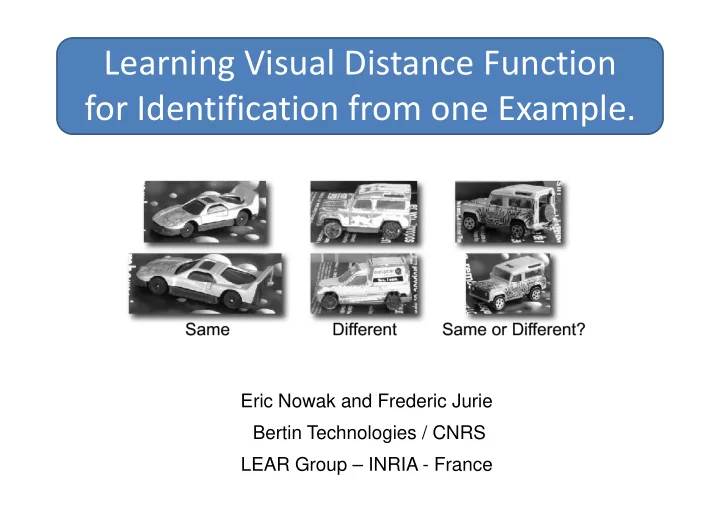

This is an object you've never seen before … can you recognize it in the following images?

– “obviously” different
– same pose and shape, but different object
– different pose and light, but same object

[Figure: candidate images labeled Car A and Car B; one annotation reads “Not Possible!”]

Knowledge about categories

Training pairs labeled “Different” or “Same” (… cars) provide equivalence constraints.

Distance (Euclidean)

Representation space (histograms, etc.)

Negative constraint

Positive constraint

“Robust combination” of local distances: $S = f(d_1, d_2, \dots, d_n)$

– P0: … (quadratic in size, uniform in position)
– P1: … around P0. Search region: extension of P0 in all directions.
– … into the np patch pairs sampled from it.
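The sampling step above can be sketched roughly as follows. This is only an illustration under stated assumptions: images are plain 2-D lists of gray levels, patches are square, the patch size is drawn uniformly (the slide's "quadratic in size" is not reproduced), and the match criterion is a sum of squared differences, which the slide does not specify. The names `sample_patch`, `best_match` and `margin` are hypothetical.

```python
import random

def sample_patch(width, height, rng, min_size=4, max_size=8):
    """Draw a square patch P0 = (x, y, size) at a uniformly random position.
    Assumption: size is drawn uniformly here, for simplicity."""
    size = rng.randint(min_size, min(max_size, width, height))
    x = rng.randint(0, width - size)
    y = rng.randint(0, height - size)
    return x, y, size

def crop(img, x, y, size):
    """Extract the size-by-size patch whose top-left corner is (x, y)."""
    return [row[x:x + size] for row in img[y:y + size]]

def ssd(a, b):
    """Sum of squared differences between two equally sized patches."""
    return sum((pa - pb) ** 2 for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))

def best_match(img1, img2, p0, margin=2):
    """Find P1 in img2 inside the search region obtained by extending
    P0 by `margin` pixels in all directions (clipped to the image)."""
    x0, y0, size = p0
    ref = crop(img1, x0, y0, size)
    h, w = len(img2), len(img2[0])
    best = None
    for y in range(max(0, y0 - margin), min(h - size, y0 + margin) + 1):
        for x in range(max(0, x0 - margin), min(w - size, x0 + margin) + 1):
            score = ssd(ref, crop(img2, x, y, size))
            if best is None or score < best[0]:
                best = (score, (x, y, size))
    return best[1]

def sample_patch_pairs(img1, img2, n_pairs, rng, margin=2):
    """Reduce an image pair to n_pairs (P0, P1) patch pairs."""
    h, w = len(img1), len(img1[0])
    pairs = []
    for _ in range(n_pairs):
        p0 = sample_patch(w, h, rng)
        pairs.append((p0, best_match(img1, img2, p0, margin)))
    return pairs
```

Calling `sample_patch_pairs(img1, img2, n_pairs, random.Random(0))` returns a list of (P0, P1) coordinate pairs; everything downstream works on these pairs rather than on the full images.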

[Figure: for each patch (Patch 1, Patch 2, …, Patch i, …, Patch n), the distributions P(d|same) and P(d|different) plotted against the distance d]

Likelihood -> Similarity [Ferencz et al. ICCV 05]
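One common way to turn the per-patch likelihoods into a single score is a sum of log-likelihood ratios, which is only valid if the patches are treated as independent. The sketch below assumes this and, purely for illustration, models P(d|same) and P(d|different) as Gaussians with hypothetical parameters; the slide does not state which densities are used.

```python
import math

def log_gaussian(d, mean, std):
    """Log-density of a Gaussian; an assumed stand-in for P(d | class)."""
    return -0.5 * ((d - mean) / std) ** 2 - math.log(std) - 0.5 * math.log(2 * math.pi)

def log_likelihood_ratio(distances, same_params, diff_params):
    """Sum over patches of log P(d_i|same) - log P(d_i|different).
    Summing the terms assumes the patches are independent."""
    total = 0.0
    for d, (m_same, s_same), (m_diff, s_diff) in zip(distances, same_params, diff_params):
        total += log_gaussian(d, m_same, s_same) - log_gaussian(d, m_diff, s_diff)
    return total

# Hypothetical per-patch parameters (mean, std) for three patches.
same_params = [(0.2, 0.1)] * 3
diff_params = [(0.8, 0.1)] * 3
score_close = log_likelihood_ratio([0.2, 0.25, 0.1], same_params, diff_params)
score_far = log_likelihood_ratio([0.8, 0.9, 0.7], same_params, diff_params)
```

A positive score favors "same" and a negative score favors "different"; here `score_close` is positive and `score_far` is negative.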

Patch independence: a bad assumption

Space of patch pair differences (D1, D2, …, D8) => Vector quantization

x=[ 1 0 1 1 0 1 0 1 ]
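A minimal sketch of how such a binary vector could be built, assuming each patch pair of an image pair has already been assigned a cluster index, and assuming an entry is set to 1 as soon as at least one patch pair falls into that cluster. The function name `membership_vector` is hypothetical.

```python
def membership_vector(cluster_ids, n_clusters):
    """Binary descriptor x: x[k] = 1 if any patch pair fell into cluster k."""
    x = [0] * n_clusters
    for k in cluster_ids:
        x[k] = 1
    return x

# Six patch pairs quantized into 8 clusters (cluster 2 is hit twice).
x = membership_vector([0, 2, 3, 5, 7, 2], 8)
print(x)  # [1, 0, 1, 1, 0, 1, 0, 1]
```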

Tree creation (EXTRA-Trees [Geurts et al. ML06, Moosmann et al. NIPS06]):

– create a root node with positive and negative patch pairs.
– recursively split the nodes until they contain only pos or neg pairs:
    – simple parametric tests on pixel intensity, gradient, geometry, etc.
    – random <=> parameters drawn randomly
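The tree creation steps can be sketched as below. This is a simplified illustration, not the authors' implementation: each patch pair is represented by a hypothetical numeric feature vector (standing in for the intensity, gradient and geometry tests), each test is "feature f < threshold t" with f and t drawn at random, and the first random test that separates the node is accepted without scoring it.

```python
import random

def build_tree(pairs, rng, leaves, max_tries=20):
    """pairs: list of (features, label) with label +1 (same) or -1 (different).
    Splits recursively until a node is pure; each leaf appends its labels
    to `leaves`, and the leaf id is its index in that list."""
    labels = {label for _, label in pairs}
    if len(labels) > 1:
        n_features = len(pairs[0][0])
        for _ in range(max_tries):
            f = rng.randrange(n_features)          # random test parameter
            values = [feat[f] for feat, _ in pairs]
            lo, hi = min(values), max(values)
            if lo == hi:
                continue                           # this feature cannot split
            t = rng.uniform(lo, hi)                # random threshold
            left = [p for p in pairs if p[0][f] < t]
            right = [p for p in pairs if p[0][f] >= t]
            if left and right:
                return {"feature": f, "threshold": t,
                        "left": build_tree(left, rng, leaves, max_tries),
                        "right": build_tree(right, rng, leaves, max_tries)}
    # Pure node, or no valid split found after max_tries: make a leaf.
    leaves.append([label for _, label in pairs])
    return {"leaf": len(leaves) - 1}

def find_leaf(tree, features):
    """Drop a patch pair down the tree and return the id of its leaf (cluster)."""
    while "leaf" not in tree:
        branch = "left" if features[tree["feature"]] < tree["threshold"] else "right"
        tree = tree[branch]
    return tree["leaf"]

# Toy training set with hypothetical 2-D features.
train = [([0.1, 0.0], 1), ([0.2, 0.1], 1), ([0.8, 0.9], -1), ([0.9, 1.0], -1)]
leaves = []
tree = build_tree(train, random.Random(0), leaves)
```

With an ensemble, one such tree is built per random seed, and the leaf ids returned by `find_leaf` serve as cluster indices for the patch pairs.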

The positive patches of three different nodes during tree construction ("Faces in the News" dataset)

The similarity measure is a linear combination of the cluster membership:

Sim(image 1, image 2) = weighted sum of the entries of x = [ 1 0 1 1 0 1 0 1 ]; the larger, the more similar.

Weights: the normal of the linear SVM hyperplane separating the descriptors of positive and negative learn set image pairs.
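As a rough sketch of this step, the code below learns a weight vector from binary descriptors and uses it as a similarity. The symbol `w`, the toy descriptors and the training routine are assumptions: a plain batch subgradient descent on the regularized hinge loss stands in for a real linear SVM solver, and no bias term is used.

```python
def dot(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))

def train_linear_svm(X, y, lr=0.1, lam=0.01, epochs=200):
    """Batch subgradient descent on the L2-regularized hinge loss.
    Returns w, the normal of the separating hyperplane (no bias term)."""
    n, dim = len(X), len(X[0])
    w = [0.0] * dim
    for _ in range(epochs):
        grad = [lam * wi for wi in w]              # regularization term
        for xi, yi in zip(X, y):
            if yi * dot(w, xi) < 1:                # margin violated
                for j in range(dim):
                    grad[j] -= yi * xi[j] / n
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w

def similarity(w, x):
    """Linear combination of the cluster memberships: larger means more similar."""
    return dot(w, x)

# Toy descriptors: two "same" image pairs (+1) and two "different" ones (-1).
X = [[1, 0, 1, 1, 0, 1, 0, 1],
     [1, 0, 1, 0, 0, 1, 0, 1],
     [0, 1, 0, 0, 1, 0, 1, 0],
     [0, 1, 0, 1, 1, 0, 1, 0]]
y = [1, 1, -1, -1]
w = train_linear_svm(X, y)
sims = [similarity(w, x) for x in X]
```

On this toy set the two positive descriptors receive higher similarity values than the two negative ones; clusters that mostly collect "same" patch pairs end up with positive weights.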

… extremely randomized trees.

… linear combination of the cluster membership. x = [ 1 0 1 1 0 0 1 1 ]

Sim( · , · ) = …

… different training object pairs of the same category.

– (a) finding similar patches
– (b) clustering the set of patch pair differences with an ensemble of extremely randomized trees
– (c) combining the cluster memberships of the pairs of local regions to make a global decision about the two images.

… visual concepts

… feature types

… training set of equivalence constraints.