HACC: Extreme Scaling and Performance Across Diverse Architectures



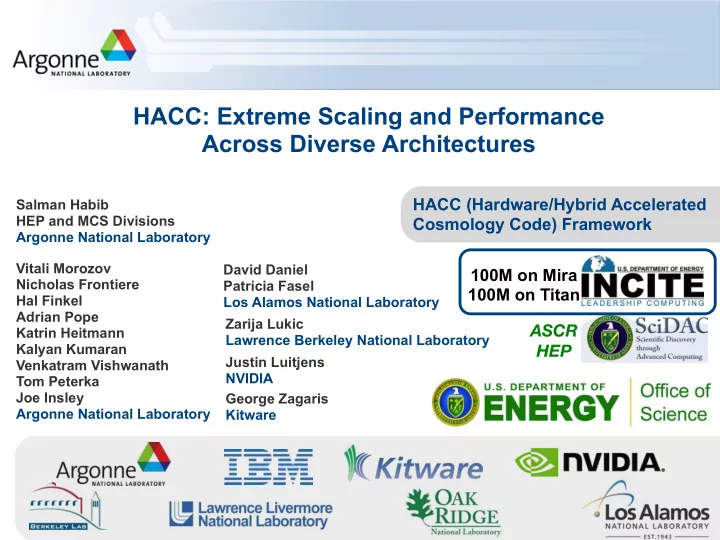

HACC (Hardware/Hybrid Accelerated Cosmology Code) Framework

Salman Habib, HEP and MCS Divisions, Argonne National Laboratory

Vitali Morozov, David Daniel, Nicholas

100M on Mira

[Figure: sky surveys DES, LSST, SPT, SDSS, BOSS. Scale bar: 2 Mpc. Axis: Time.]

Roadrunner, Hopper, Mira/Sequoia, Titan, Edison

[Figure: P(k) ratio with respect to the GPU code, plotted against k [h/Mpc]; the ratio axis spans 0.995 to 1.005. Curves: RCB TreePM on BG/Q / GPU P3M; RCB TreePM on Hopper / GPU P3M; Cell P3M / GPU P3M; Gadget-2 / GPU P3M.]

Mira/Sequoia


[Figure: force comparison. Curves: Newtonian force; noisy CIC PM force; 6th-order sinc-Gaussian spectrally filtered PM force; two-particle force.]

Block 3 Grid units Push to GPU

The Outer Rim Simulation