1

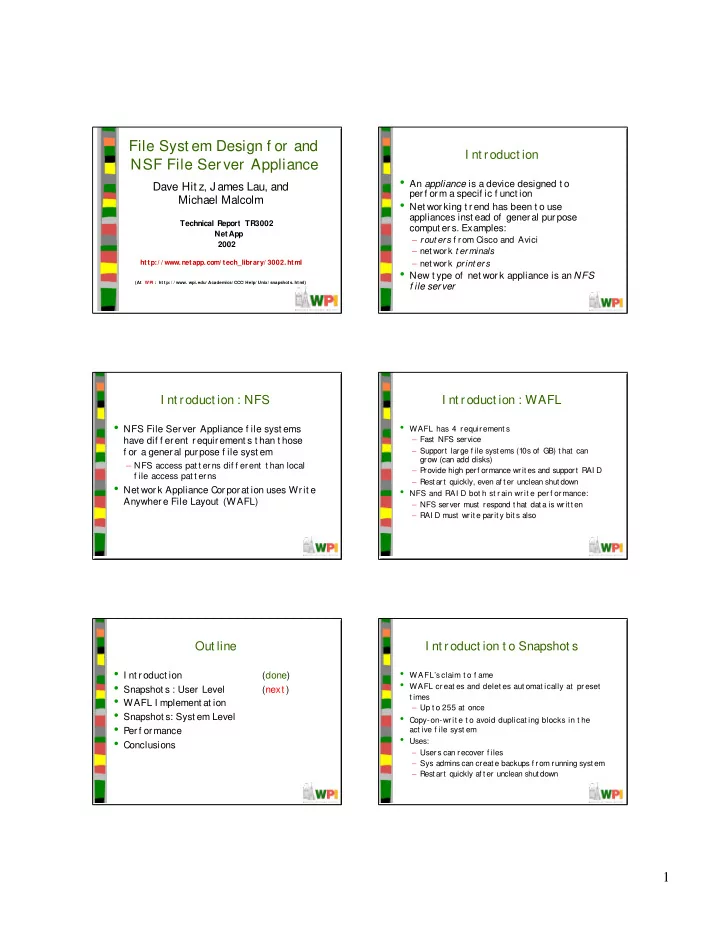

File System Design for an NFS File Server Appliance

Dave Hitz, James Lau, and Michael Malcolm

Technical Report TR3002 NetApp 2002

http://www.netapp.com/tech_library/3002.html

(At WPI: http://www.wpi.edu/Academics/CCC/Help/Unix/snapshots.html)

Introduction

- An appliance is a device designed to perform a specific function

- The networking trend has been to use appliances instead of general-purpose computers. Examples:
– routers from Cisco and Avici
– network terminals
– network printers

- A new type of network appliance is the NFS file server

Introduction: NFS

- File systems for an NFS file server appliance have different requirements than a general-purpose file system
– NFS access patterns differ from local file access patterns

- Network Appliance Corporation uses the Write Anywhere File Layout (WAFL)

Introduction: WAFL

- WAFL has 4 requirements:
– Fast NFS service
– Support large file systems (tens of GB) that can grow (disks can be added)
– Provide high-performance writes and support RAID
– Restart quickly, even after an unclean shutdown

- NFS and RAID both strain write performance:
– The NFS server must not reply that data is written until it is actually on stable storage
– RAID must also write parity information
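The following is a minimal sketch of why parity makes small writes expensive. It assumes a generic RAID-4/5 style stripe in which parity is the XOR of the data blocks. It illustrates the general technique and is not WAFL's own code. The function names and the tiny `BLOCK_SIZE` are hypothetical.

Updating one data block in place is a read-modify-write. The old data and old parity are read, the new parity is computed as `old_parity XOR old_data XOR new_data`, and then both the data and parity blocks are written. That is four disk I/Os for one logical write.

```python
from __future__ import annotations

from functools import reduce

BLOCK_SIZE = 8  # hypothetical tiny block size in bytes, for illustration only


def xor_blocks(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length blocks."""
    return bytes(x ^ y for x, y in zip(a, b))


def full_stripe_parity(data_blocks: list[bytes]) -> bytes:
    """Parity for a whole stripe: the XOR of all its data blocks."""
    return reduce(xor_blocks, data_blocks)


def small_write(stripe: list[bytes], parity: bytes, index: int, new_data: bytes):
    """Overwrite one data block in place and update parity (read-modify-write).

    Returns (new_stripe, new_parity, disk_ios), where disk_ios counts the
    I/Os this approach would issue against real disks.
    """
    ios = 0
    old_data = stripe[index]
    ios += 1  # read the old data block
    old_parity = parity
    ios += 1  # read the old parity block
    # Remove the old data's contribution to parity, then add the new data's.
    new_parity = xor_blocks(xor_blocks(old_parity, old_data), new_data)
    new_stripe = list(stripe)
    new_stripe[index] = new_data
    ios += 1  # write the new data block
    ios += 1  # write the new parity block
    return new_stripe, new_parity, ios


# Usage: a 3-data-disk stripe, then overwrite the middle block.
stripe = [bytes([v] * BLOCK_SIZE) for v in (1, 2, 3)]
parity = full_stripe_parity(stripe)
stripe, parity, ios = small_write(stripe, parity, 1, bytes([9] * BLOCK_SIZE))
print(ios)  # 4
```

By contrast, when a whole stripe is written at once, parity can be computed from data already in memory with `full_stripe_parity`, and no reads are needed.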

Outline

- Introduction (done)

- Snapshots: User Level (next)

- WAFL Implementation

- Snapshots: System Level

- Performance

- Conclusions

Introduction to Snapshots

- WAFL’s claim to fame

- WAFL creates and deletes snapshots automatically at preset times
– Up to 255 snapshots at once

- Uses copy-on-write to avoid duplicating blocks in the active file system

- Uses: