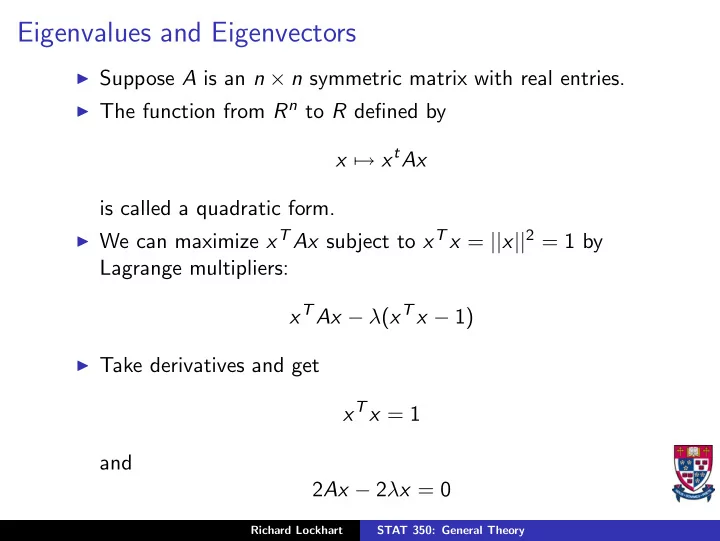

Eigenvalues and Eigenvectors

◮ Suppose $A$ is an $n \times n$ symmetric matrix with real entries.

◮ The function from $\mathbb{R}^n$ to $\mathbb{R}$ defined by $x \mapsto x^T A x$ is called a quadratic form.

◮ We can maximize $x^T A x$ subject to $x^T x = \|x\|^2 = 1$ by Lagrange multipliers: $x^T A x - \lambda (x^T x - 1)$

◮ Take derivatives and get $x^T x = 1$ and $2Ax - 2\lambda x = 0$
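The stationarity condition $2Ax - 2\lambda x = 0$ is the same as $Ax = \lambda x$, so the candidate maximizers are unit eigenvectors of $A$, and at such a point the quadratic form equals $\lambda$. The following is a minimal numerical sketch of that fact, not part of the original slides. The matrix `A`, the helper `quadratic_form`, and the random-sampling check are all assumptions chosen only for illustration.

```python
import numpy as np

# Hypothetical 2 x 2 symmetric matrix, chosen only for illustration.
A = np.array([[2.0, 1.0],
              [1.0, 3.0]])


def quadratic_form(A, x):
    """Return the quadratic form x^T A x for a vector x."""
    return float(x @ A @ x)


# np.linalg.eigh is intended for symmetric matrices. It returns the
# eigenvalues in ascending order and unit-norm eigenvectors as columns.
eigenvalues, eigenvectors = np.linalg.eigh(A)
lam_max = eigenvalues[-1]
x_max = eigenvectors[:, -1]

# Stationarity condition from the Lagrangian: 2Ax - 2*lambda*x = 0.
residual = 2 * A @ x_max - 2 * lam_max * x_max

# Compare against the quadratic form on many random unit vectors.
rng = np.random.default_rng(0)
samples = rng.normal(size=(1000, 2))
samples /= np.linalg.norm(samples, axis=1, keepdims=True)
values = np.einsum("ij,jk,ik->i", samples, A, samples)

print("largest eigenvalue:        ", lam_max)
print("x^T A x at its eigenvector:", quadratic_form(A, x_max))
print("largest sampled x^T A x:   ", values.max())
```

For this particular `A` the eigenvalues are $(5 \pm \sqrt{5})/2$, so the largest is about 3.618. The sampled values should approach that number from below without exceeding it.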

Richard Lockhart STAT 350: General Theory