
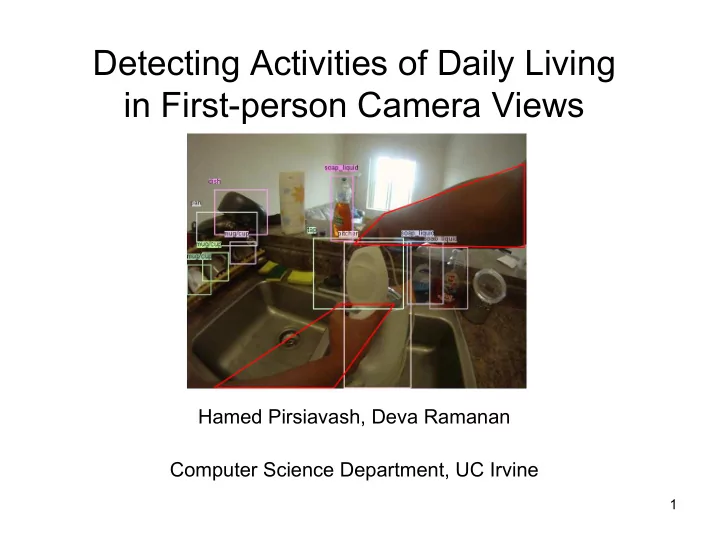

Detecting Activities of Daily Living in First-person Camera Views

Hamed Pirsiavash, Deva Ramanan Computer Science Department, UC Irvine


Motivation: a sample video of Activities of Daily Living

Applications: Tele-rehabilitation


Long-term at-home monitoring

So far, such recordings are mostly “write-only” memory: video is captured but rarely analyzed. This is the right time for the computer vision community to get involved.


There are quite a few video benchmarks for action recognition. Collecting interesting but natural video is surprisingly hard, and it is difficult to define action categories outside the “sports” domain.

Examples: UCF Sports (CVPR’08), UCF YouTube (CVPR’08), KTH (ICPR’04), Hollywood (CVPR’09), Olympic Sports (BMVC’10), VIRAT (CVPR’11).


ADL actions are derived from the medical literature on patient rehabilitation. Natural data is easy to collect with a first-person camera.


– What features to use?
– Appearance model
– Temporal model

– “Active” vs. “passive” objects
– Temporal pyramid



Features range from low-level (weak semantics) to high-level (strong semantics): space-time interest points (Laptev, IJCV’05), human pose, and object-centric features.

Difficulties of pose:


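Example timeline: start boiling water → do other things (while waiting) → pour in cup → drink tea.

The link between boiling the water and pouring it spans an unpredictable waiting period, and such long-term temporal dependencies are difficult for HMMs to capture.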
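To see why, consider a toy first-order Markov chain over action labels, which is the dependency structure an HMM assumes for its hidden states. The labels and probabilities below are made up for illustration, and `TRANSITIONS` and `next_prob` are hypothetical names; this is not an analysis from the paper.

```python
# Toy first-order transition probabilities between action labels (made-up numbers).
TRANSITIONS = {
    "boil water": {"other things": 0.7, "pour in cup": 0.3},
    "other things": {"other things": 0.8, "pour in cup": 0.1, "drink tea": 0.1},
    "pour in cup": {"drink tea": 1.0},
    "drink tea": {"drink tea": 1.0},
}


def next_prob(history, nxt):
    """P(next label | full history) under a first-order Markov chain.

    Only the most recent label is consulted; everything earlier is ignored.
    """
    return TRANSITIONS[history[-1]].get(nxt, 0.0)


with_boil = ["boil water", "other things", "other things"]
without_boil = ["other things", "other things", "other things"]

# Same answer whether or not the water was ever boiled:
print(next_prob(with_boil, "pour in cup"), next_prob(without_boil, "pour in cup"))  # 0.1 0.1
```

Remembering the earlier step would require enlarging the state space (for example, separate "waiting after boiling" and "waiting without boiling" states), and the number of such states grows with every long-range dependency one wants to track.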



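(Figure: examples of passive and active objects.)

Modeling whether an object is active (being interacted with) or passive gives:
– Better object detection (visual phrases, CVPR’11)
– Better features for action classification (active vs. passive)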


Bag of detected objects: run object detectors (e.g., fridge, TV, stove) on a video clip, pool the detections into a histogram, and classify the histogram with an SVM.
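A minimal sketch of the bag-of-detected-objects feature, assuming detector output is given per frame as (category, score) pairs and that a detection counts once its score reaches a fixed threshold. The three-category vocabulary, the 0.5 threshold, and the name `bag_of_objects` are illustrative assumptions, not the authors' code; the resulting vector is what would be passed to the SVM.

```python
from collections import Counter

# Hypothetical object vocabulary (the talk uses 24 categories; three shown here).
CATEGORIES = ["fridge", "TV", "stove"]


def bag_of_objects(frames, threshold=0.5):
    """Count confident detections per category over all frames of a clip.

    frames: list of frames, each a list of (category, score) detections.
    Returns a fixed-length count vector ordered like CATEGORIES.
    """
    counts = Counter(
        cat
        for dets in frames
        for cat, score in dets
        if score >= threshold and cat in CATEGORIES
    )
    return [counts[c] for c in CATEGORIES]


clip = [
    [("fridge", 0.9), ("TV", 0.3)],     # TV below threshold, ignored
    [("stove", 0.8), ("fridge", 0.7)],
]
print(bag_of_objects(clip))  # [2, 0, 1]
```

In practice one might normalize the counts by clip length before training; that choice is also an assumption here.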


Bag of detected objects with separate words for active and passive instances (e.g., active fridge, active stove, passive fridge), computed over a video clip and classified with an SVM.
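Splitting each category into active and passive words only changes the vocabulary: the histogram gets one bin per (state, category) pair. The sketch below assumes each detection already carries an `is_active` flag (for instance, from separate active and passive detectors); the flag, the two-category vocabulary, the threshold, and the name `active_passive_bag` are hypothetical.

```python
from collections import Counter

# Hypothetical vocabulary; each category gets an active and a passive bin.
CATEGORIES = ["fridge", "stove"]


def active_passive_bag(frames, threshold=0.5):
    """Histogram with separate bins for active and passive instances.

    frames: list of frames, each a list of (category, is_active, score).
    Layout: [active_fridge, active_stove, passive_fridge, passive_stove].
    """
    counts = Counter(
        (is_active, cat)
        for dets in frames
        for cat, is_active, score in dets
        if score >= threshold
    )
    active = [counts[(True, c)] for c in CATEGORIES]
    passive = [counts[(False, c)] for c in CATEGORIES]
    return active + passive


clip = [
    [("fridge", True, 0.9), ("stove", False, 0.6)],
    [("fridge", False, 0.8), ("stove", True, 0.4)],  # active stove below threshold
]
print(active_passive_bag(clip))  # [1, 0, 1, 1]
```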


Inspired by “Spatial Pyramid” CVPR’06 and “Pyramid Match Kernels” ICCV’05

Coarse to fine correspondence matching with a multi-layer pyramid
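The coarse-to-fine matching idea from Pyramid Match Kernels can be sketched in one dimension: bin event times at several resolutions, count matches with histogram intersection, and give matches that first appear at coarser levels a smaller weight. This is a minimal illustration on hypothetical per-clip event timestamps normalized to [0, 1); it is not the paper's exact kernel, and the function names and the 1/2**k weights (following the Pyramid Match Kernel convention) are assumptions.

```python
def histogram(times, num_bins):
    """Count timestamps (normalized to [0, 1]) in each of num_bins equal bins."""
    h = [0] * num_bins
    for t in times:
        h[min(int(t * num_bins), num_bins - 1)] += 1
    return h


def intersection(h1, h2):
    """Histogram intersection: number of matches implied by two histograms."""
    return sum(min(a, b) for a, b in zip(h1, h2))


def temporal_pyramid_match(times_a, times_b, levels=3):
    """Pyramid-match-style similarity between two sets of event times.

    Goes from the finest level (k = 0) to the coarsest; matches that first
    appear at step k are weighted by 1 / 2**k.
    """
    score = 0.0
    prev = 0
    for k in range(levels):
        bins = 2 ** (levels - 1 - k)  # finest to coarsest
        matches = intersection(histogram(times_a, bins), histogram(times_b, bins))
        score += (matches - prev) / 2 ** k
        prev = matches
    return score


# Events early and late vs. events early and mid-late, and vs. both late.
print(temporal_pyramid_match([0.1, 0.9], [0.2, 0.6]))  # 1.5
print(temporal_pyramid_match([0.1, 0.9], [0.7, 0.8]))  # 1.25
```

Clips whose events line up at fine temporal resolution score higher than clips that only agree at the coarsest (whole-clip) level.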

(Figure: a temporal pyramid descriptor computed over time and fed to an SVM classifier.)
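A minimal sketch of a temporal pyramid descriptor, assuming per-frame feature vectors (for example, per-frame bag-of-objects counts) and averaging within segments: level l splits the clip into 2**l equal parts, and the segment averages are concatenated. The averaging, the number of levels, and the name `temporal_pyramid` are illustrative assumptions; the paper's exact pooling and level weighting may differ.

```python
def temporal_pyramid(frame_feats, levels=2):
    """Concatenate per-segment averages over a coarse-to-fine temporal pyramid.

    frame_feats: non-empty list of per-frame feature vectors of equal length.
    Level l splits the clip into 2**l equal-length segments.
    Output length: dim * (2**levels - 1).
    """
    n = len(frame_feats)
    dim = len(frame_feats[0])
    descriptor = []
    for level in range(levels):
        num_segs = 2 ** level
        for s in range(num_segs):
            start = s * n // num_segs
            end = (s + 1) * n // num_segs
            seg = frame_feats[start:end]
            if seg:
                mean = [sum(f[d] for f in seg) / len(seg) for d in range(dim)]
            else:
                mean = [0.0] * dim  # clip shorter than the number of segments
            descriptor.extend(mean)
    return descriptor


# Per-frame [fridge, stove] indicators: fridge first, then stove (and reversed).
fridge_then_stove = [[1, 0], [1, 0], [0, 1], [0, 1]]
stove_then_fridge = [[0, 1], [0, 1], [1, 0], [1, 0]]
print(temporal_pyramid(fridge_then_stove))  # [0.5, 0.5, 1.0, 0.0, 0.0, 1.0]
print(temporal_pyramid(stove_then_fridge))  # [0.5, 0.5, 0.0, 1.0, 1.0, 0.0]
```

Both example clips have the same level-0 (whole-clip) average; only the finer level records which object came first, which is the temporal ordering a plain bag of objects discards.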


Annotations:
– Actions
– Object bounding boxes
– Active/passive object labels
– Object IDs
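One way to hold these four annotation layers in memory is sketched below. The class and field names, the (x1, y1, x2, y2) box format, and the per-frame dictionary layout are hypothetical and do not describe the released data format.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class ObjectBox:
    object_id: int                   # same physical object keeps one ID across frames
    category: str                    # e.g. "fridge"
    box: Tuple[int, int, int, int]   # (x1, y1, x2, y2) in pixels
    active: bool                     # True while the camera wearer interacts with it


@dataclass
class ActionSegment:
    label: str                       # hypothetical action name
    start_frame: int
    end_frame: int                   # inclusive


@dataclass
class VideoAnnotation:
    actions: List[ActionSegment] = field(default_factory=list)
    boxes: Dict[int, List[ObjectBox]] = field(default_factory=dict)  # frame -> boxes

    def active_objects(self, frame: int) -> List[ObjectBox]:
        """Objects marked active in the given frame."""
        return [b for b in self.boxes.get(frame, []) if b.active]

    def track(self, object_id: int) -> List[Tuple[int, ObjectBox]]:
        """All (frame, box) pairs for one object ID, in frame order."""
        return [(f, b) for f in sorted(self.boxes) for b in self.boxes[f]
                if b.object_id == object_id]


ann = VideoAnnotation(
    actions=[ActionSegment("making tea", 0, 120)],
    boxes={
        0: [ObjectBox(7, "kettle", (300, 150, 420, 300), True),
            ObjectBox(3, "fridge", (10, 0, 200, 400), False)],
        5: [ObjectBox(7, "kettle", (310, 160, 430, 310), True)],
    },
)
print([b.category for b in ann.active_objects(0)])  # ['kettle']
print([f for f, _ in ann.track(7)])                 # [0, 5]
```

Object IDs are what make `track` possible: they tie boxes in different frames to the same physical object.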


Prior work:

(Figure: example objects labeled active or passive.)


Active objects tend to appear on the right-hand side of the frame and closer to the camera:
– Most people are right-handed
– Therefore, we cannot mirror-flip images during training
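A toy sketch of why mirror-flipping is ruled out: flipping a frame horizontally moves an object from the right half to the left half, which contradicts the location cue that active objects tend to appear on the right. The (x1, y1, x2, y2) box format, the 640-pixel width, and the helper names are assumptions for illustration.

```python
def flip_box(box, image_width):
    """Mirror an (x1, y1, x2, y2) box about the vertical center line of the image."""
    x1, y1, x2, y2 = box
    return (image_width - x2, y1, image_width - x1, y2)


def side(box, image_width):
    """Which half of the frame the box center falls in."""
    center_x = (box[0] + box[2]) / 2
    return "right" if center_x > image_width / 2 else "left"


W = 640                            # assumed frame width in pixels
active_box = (400, 200, 560, 400)  # e.g. an object held in the right hand
print(side(active_box, W))               # right
print(side(flip_box(active_box, W), W))  # left
```

A flipped training example would therefore teach the model that active objects also appear on the left, diluting a cue that holds for right-handed camera wearers.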


Low-level features: space-time interest points (STIP), Laptev et al., BMVC’09

High-level features: object-centric features over 24 object categories


Results on temporally continuous video and with a taxonomy loss are included in the paper.


Data and code will be available soon!
