CS325 Artificial Intelligence

- Ch. 15,20 – Hidden Markov Models and Particle Filtering

Cengiz Günay, Emory Univ.

Spring 2013


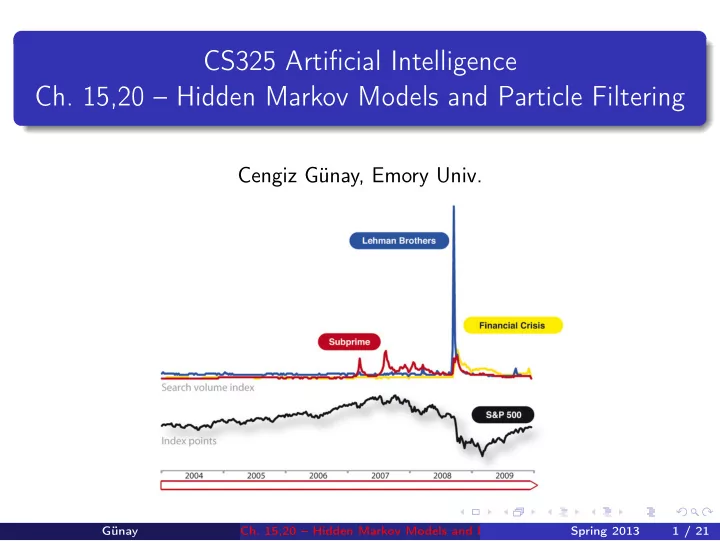

Get Rich Fast!
