Can Charlie distinguish Alice and Bob? Automated verification of equivalence properties
Steve Kremer
joint work with: Myrto Arapinis, David Baelde, Rohit Chadha, Vincent Cheval, Ștefan Ciobâcă, Véronique Cortier, Stéphanie Delaune, Ivan Gazeau, Itsaka Rakotonirina, Mark Ryan
29th IEEE Computer Security Foundations Symposium

Cryptographic protocols everywhere!
◮ Distributed programs that
  ◮ use crypto primitives (encryption, digital signature, ...)
  ◮ to ensure security properties (confidentiality, authentication, anonymity, ...)

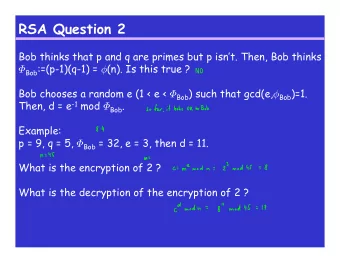

Symbolic models for protocol verification
Main ingredients of symbolic models:
◮ messages = terms, e.g. $\mathsf{enc}(\mathsf{pair}(s_1, s_2), k)$
◮ perfect cryptography (equational theories):
  $\mathsf{dec}(\mathsf{enc}(x, y), y) = x$
  $\mathsf{fst}(\mathsf{pair}(x, y)) = x$
  $\mathsf{snd}(\mathsf{pair}(x, y)) = y$
◮ the network is the attacker
  ◮ messages can be eavesdropped
  ◮ messages can be intercepted
  ◮ messages can be injected
Dolev, Yao: On the Security of Public Key Protocols. FOCS’81

Cryptographic protocols are tricky!
◮ Arapinis et al., CCS’12
◮ Bhargavan et al.: FREAK, Logjam, SLOTH, ...
◮ Cremers et al., S&P’16
◮ Cortier & Smyth, CSF’11
◮ Steel et al., CSF’08, CCS’10

Modelling the protocol
Protocols modelled in a process calculus, e.g. the applied pi calculus:
  $P ::= 0$
  $\mid\ \mathsf{in}(c, x).P$ (input)
  $\mid\ \mathsf{out}(c, t).P$ (output)
  $\mid\ \mathsf{if}\ t_1 = t_2\ \mathsf{then}\ P\ \mathsf{else}\ Q$ (conditional)
  $\mid\ P \parallel Q$ (parallel)
  $\mid\ !P$ (replication)
  $\mid\ \mathsf{new}\ n.P$ (restriction)
Specificities:
◮ messages are terms (not just names as in the pi calculus)
◮ equality in conditionals interpreted modulo an equational theory
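The grammar above can be mirrored as a small abstract syntax tree. The following Python sketch is only one possible encoding: the class names, the field names, the use of plain strings for terms, and the helper `show` are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Any

@dataclass(frozen=True)
class Nil:            # 0
    pass

@dataclass(frozen=True)
class In:             # in(c, x).P
    chan: str
    var: str
    cont: Any

@dataclass(frozen=True)
class Out:            # out(c, t).P
    chan: str
    term: str
    cont: Any

@dataclass(frozen=True)
class If:             # if t1 = t2 then P else Q
    left: str
    right: str
    then: Any
    orelse: Any

@dataclass(frozen=True)
class Par:            # parallel composition of P and Q
    left: Any
    right: Any

@dataclass(frozen=True)
class Repl:           # !P
    body: Any

@dataclass(frozen=True)
class New:            # new n.P
    name: str
    cont: Any

def show(p) -> str:
    """Print a process in a syntax close to the one on the slide."""
    if isinstance(p, Nil):
        return "0"
    if isinstance(p, In):
        return f"in({p.chan},{p.var}).{show(p.cont)}"
    if isinstance(p, Out):
        return f"out({p.chan},{p.term}).{show(p.cont)}"
    if isinstance(p, If):
        return f"if {p.left} = {p.right} then {show(p.then)} else {show(p.orelse)}"
    if isinstance(p, Par):
        return f"({show(p.left)} | {show(p.right)})"
    if isinstance(p, Repl):
        return f"!{show(p.body)}"
    if isinstance(p, New):
        return f"new {p.name}.{show(p.cont)}"
    raise TypeError(f"not a process: {p!r}")

# Hypothetical example: create a fresh key k, then send s encrypted under k.
example = New("k", Out("c", "enc(s,k)", Nil()))
print(show(example))
```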

Reasoning about attacker knowledge
Terms output by a process are organised in a frame:
  $\phi = \mathsf{new}\ \bar{n}.\{t_1/x_1, \ldots, t_n/x_n\}$
Deducibility: $\phi \vdash^{R} t$ if $R$ is a public term and $R\phi =_E t$
Example:
  $\phi = \mathsf{new}\ n_1, n_2, k_1, k_2.\{\mathsf{enc}(n_1, k_1)/x_1,\ \mathsf{enc}(n_2, k_2)/x_2,\ k_1/x_3\}$
  $\phi \vdash^{\mathsf{dec}(x_1, x_3)} n_1$
  $\phi \vdash^{1} 1$
  $\phi \nvdash n_2$

Reasoning about attacker knowledge
Static equivalence: $\phi_1 \sim_s \phi_2$ if for all public terms $R, R'$:
  $R\phi_1 = R'\phi_1 \Leftrightarrow R\phi_2 = R'\phi_2$
Examples:
◮ $\mathsf{new}\ k.\{\mathsf{enc}(0, k)/x_1\} \sim_s \mathsf{new}\ k.\{\mathsf{enc}(1, k)/x_1\}$
◮ $\mathsf{new}\ n_1, n_2.\{n_1/x_1, n_2/x_2\} \not\sim_s \mathsf{new}\ n_1, n_2.\{n_1/x_1, n_1/x_2\}$
  Check $(x_1 \stackrel{?}{=} x_2)$
◮ $\{\mathsf{enc}(n, k)/x_1, k/x_2\} \not\sim_s \{\mathsf{enc}(0, k)/x_1, k/x_2\}$
  Check $(\mathsf{dec}(x_1, x_2) \stackrel{?}{=} 0)$

From authentication to privacy
Many good tools: AVISPA, Casper, Maude-NPA, ProVerif, Scyther, Tamarin, ...
Good at verifying trace properties (predicates on system behavior), e.g.,
◮ (weak) secrecy of a key
◮ authentication (correspondence properties):
  If B ended a session with A (and parameters p) then A must have started a session with B (and parameters p′).
Not all properties can be expressed on a trace.
Hence recent interest in indistinguishability properties.

Indistinguishability as a process equivalence
Naturally modelled using equivalences from process calculi.
Testing equivalence ($P \approx Q$): for all processes $A$, we have that
  $A \mid P \Downarrow c$ if, and only if, $A \mid Q \Downarrow c$,
where $P \Downarrow c$ holds when $P$ can send a message on the channel $c$.

A tour to the (equivalence) zoo
[Diagram of equivalences: diff equiv., testing equiv., obs. equiv., trace equiv., symbolic bisim., labelled bisim.]
◮ testing equiv., obs. equiv.: Abadi, Gordon. A Calculus for Cryptographic Protocols: The Spi Calculus. CCS’97, Inf. & Comp.’99; Abadi, Fournet. Mobile values, new names, and secure communication. POPL’01
◮ labelled bisim.: Abadi, Fournet. Mobile values, new names, and secure communication. POPL’01
◮ diff equiv.: Blanchet et al.: Automated Verification of Selected Equivalences for Security Protocols. LICS’05
  Diff equivalence too fine grained for several properties.
◮ symbolic bisim.: Delaune et al. Symbolic bisimulation for the applied pi calculus. JCS’10; Liu, Lin. A complete symbolic bisimulation for full applied pi calculus. TCS’12
◮ trace equiv.: Cheval et al.: Deciding equivalence-based properties using constraint solving. TCS’13
  For a bounded number of sessions (no replication).
  For a class of determinate processes.

A few security properties
◮ “Strong” secrecy (non-interference):
  $\mathsf{in}(c, x_1).\mathsf{in}(c, x_2).P\{x_1/s\} \approx \mathsf{in}(c, x_1).\mathsf{in}(c, x_2).P\{x_2/s\}$
◮ Real-or-random secrecy:
  $P.\mathsf{out}(c, s) \approx P.\mathsf{new}\ r.\mathsf{out}(c, r)$
◮ Simulation based security ($I$ is an ideal functionality):
  $\exists S.\ P \approx S[I]$
◮ Anonymity:
  $P\{a/id\} \approx P\{b/id\}$
◮ Vote privacy
◮ Unlinkability

How to model vote privacy?
How can we model “the attacker does not learn my vote (0 or 1)”?
◮ The attacker cannot learn the value of my vote
  (but the attacker knows values 0 and 1)
◮ The attacker cannot distinguish A votes and B votes: $V_A(v) \approx V_B(v)$
