Algorithms for Mobile Robot Localization and Mapping, Incorporating Detailed Noise Modeling and Multi-scale Feature Extraction
Samuel T. Pfister
April 14, 2006

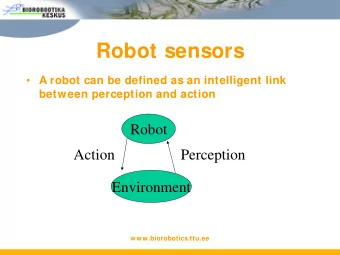

Mobile Robot Navigation
Navigation applications:
• Unmanned exploration
• Convoys for military supplies
• Autonomous highway driving
Robot localization is critical for:
• Effective path planning
• Accurate construction and use of global maps

Sensor-Based Localization and Mapping
Localization methods:
• Dead reckoning [Lu & Milios]
• Beacon-based localization (GPS)
• Localization using known maps [Borenstein]
• Localization with no prior knowledge of the environment
  – Requires sensor-based mapping
Mapping methods:
• Grid-based mapping [Elfes]
• Feature-based mapping [Chatila & Laumond]
(Figure builds: a robot and the environment boundary in the global frame, then beacons, a known map, and map features.)
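
As a rough illustration of grid-based mapping, the sketch below applies the standard log-odds occupancy update to a strip of cells along one range beam. It is not taken from the talk or from [Elfes]; the inverse-sensor-model probabilities (`P_OCC_HIT`, `P_OCC_MISS`, `P_PRIOR`) and all function names are hypothetical values chosen for the example.

```python
import math

# Hypothetical inverse-sensor-model probabilities (assumed, not from the talk).
P_OCC_HIT = 0.7   # P(cell occupied | beam endpoint falls in the cell)
P_OCC_MISS = 0.4  # P(cell occupied | beam passes through the cell)
P_PRIOR = 0.5     # prior occupancy probability


def logit(p: float) -> float:
    """Convert a probability to log-odds."""
    return math.log(p / (1.0 - p))


def update_cell(l_prev: float, hit: bool) -> float:
    """Bayesian log-odds update of one cell for one observation."""
    p = P_OCC_HIT if hit else P_OCC_MISS
    return l_prev + logit(p) - logit(P_PRIOR)


def probability(l: float) -> float:
    """Convert log-odds back to an occupancy probability."""
    return 1.0 - 1.0 / (1.0 + math.exp(l))


# A 1-D strip of five cells; the beam passes through cells 0..2 and ends in cell 3.
cells = [logit(P_PRIOR)] * 5
for _ in range(3):  # three identical scans
    for i in range(4):
        cells[i] = update_cell(cells[i], hit=(i == 3))

print([round(probability(l), 3) for l in cells])
```

Working in log-odds turns the repeated Bayesian update into a sum, and the untouched cell 4 stays at the prior.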

Sensor-Based Localization and Mapping: Critical Goals
• Accurate estimates of
  – robot position
  – map feature position
  – measurement uncertainty
• Robustness for long-term operation
• Computational efficiency

Sensor-Based Localization and Mapping: Critical Challenges
• Data association accuracy and efficiency
  – Feature correspondence
• Sensor noise compensation
• Unmodeled errors and effects
  – Changing environment
  – Bad data
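
Feature correspondence is often handled with gated nearest-neighbour association: a predicted feature is accepted only if the squared Mahalanobis distance of the innovation falls inside a chi-square gate, which ties data association to the measurement uncertainty. The sketch below assumes 2-D point features with known predicted covariances; the function names, the 95% gate, and the example numbers are illustrative assumptions, not the method presented in this talk.

```python
from typing import List, Optional, Tuple

Vec2 = Tuple[float, float]
Mat2 = Tuple[Tuple[float, float], Tuple[float, float]]

CHI2_GATE_2DOF = 5.991  # 95% chi-square quantile for 2 degrees of freedom


def mat_add(A: Mat2, B: Mat2) -> Mat2:
    """Element-wise sum of two 2x2 matrices."""
    return tuple(tuple(A[i][j] + B[i][j] for j in range(2)) for i in range(2))


def mahalanobis_sq(nu: Vec2, S: Mat2) -> float:
    """Squared Mahalanobis distance nu^T S^-1 nu for a 2x2 covariance S."""
    (a, b), (c, d) = S
    det = a * d - b * c
    x, y = nu
    # Uses the explicit 2x2 inverse [[d, -b], [-c, a]] / det.
    return (x * (d * x - b * y) + y * (-c * x + a * y)) / det


def associate(z: Vec2, R: Mat2, predicted: List[Vec2], covs: List[Mat2],
              gate: float = CHI2_GATE_2DOF) -> Optional[int]:
    """Index of the closest predicted feature inside the gate, or None."""
    best_idx, best_d2 = None, gate
    for i, (z_hat, P) in enumerate(zip(predicted, covs)):
        nu = (z[0] - z_hat[0], z[1] - z_hat[1])  # innovation
        S = mat_add(P, R)                        # innovation covariance
        d2 = mahalanobis_sq(nu, S)
        if d2 < best_d2:
            best_idx, best_d2 = i, d2
    return best_idx


R = ((0.01, 0.0), (0.0, 0.01))               # hypothetical sensor noise
features = [(1.0, 0.0), (2.0, 1.0)]          # hypothetical predicted features
covs = [((0.02, 0.0), (0.0, 0.02))] * 2
print(associate((1.05, 0.02), R, features, covs))
print(associate((5.0, 5.0), R, features, covs))
```

Returning `None` for an observation outside every gate is one simple way to keep bad data from corrupting the map; such observations can instead be treated as candidate new features.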

Overview
Three localization and mapping methods are presented.
Assumptions:
• Planar robot motion in SE(2)
• Sensors: dense planar range scanner and simple odometry
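
Under the planar SE(2) assumption, dead reckoning amounts to chaining relative odometry increments with the SE(2) composition operator. The minimal sketch below assumes poses are parametrized as (x, y, θ) and that each increment is expressed in the robot's frame at the previous pose; names such as `compose` and `dead_reckon` are hypothetical. Because every increment's error is carried forward, the estimate drifts without bound, which is one reason to correct odometry with range-scan matching.

```python
import math
from typing import Iterable, Tuple

Pose = Tuple[float, float, float]  # (x, y, theta) in the global frame


def wrap_angle(a: float) -> float:
    """Wrap an angle to [-pi, pi)."""
    return (a + math.pi) % (2.0 * math.pi) - math.pi


def compose(p: Pose, d: Pose) -> Pose:
    """SE(2) composition: apply displacement d, expressed in p's body frame."""
    x, y, th = p
    dx, dy, dth = d
    c, s = math.cos(th), math.sin(th)
    return (x + c * dx - s * dy, y + s * dx + c * dy, wrap_angle(th + dth))


def dead_reckon(start: Pose, increments: Iterable[Pose]) -> Pose:
    """Chain relative odometry increments into a global pose estimate."""
    pose = start
    for d in increments:
        pose = compose(pose, d)
    return pose


# Drive 1 m forward, then turn left 90 degrees; four times traces a square.
step = (1.0, 0.0, math.pi / 2)
final = dead_reckon((0.0, 0.0, 0.0), [step] * 4)
print(final)  # back near the origin, up to floating-point error
```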

The three methods:
1. Range-point-based dead reckoning: scan matching
2. Line-feature-based mapping and global localization
3. Multi-scale-feature-based mapping and global localization

Method 1) Weighted Scan Matching
(Flowchart: Scan 1, Scan 2, and an initial displacement guess feed point correspondence, then scan matching, producing a displacement estimate; correspondence and matching iterate.)
• Correlate range measurements to estimate displacement
• Can improve (or even replace) odometry [Roumeliotis]
• Previous work: the vision community and Lu & Milios '97
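
The loop in the flowchart can be sketched as a weighted point-to-point variant of ICP: under the current displacement estimate, pair each Scan 2 point with its nearest Scan 1 point, solve the weighted least-squares rigid fit in closed form, and repeat until the estimate stops changing. This is a generic sketch, not the weighting scheme developed in this work. Here each point's weight is a single scalar supplied by the caller (a hypothetical range-dependent weight in the example), whereas a method built on a detailed noise model might weight each correspondence by a full 2x2 covariance instead. The function names, the `max_dist` rejection threshold, and the test scans are assumptions.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]
Pose = Tuple[float, float, float]  # (tx, ty, theta): maps Scan 2 points into Scan 1's frame


def transform(pose: Pose, p: Point) -> Point:
    tx, ty, th = pose
    c, s = math.cos(th), math.sin(th)
    return (c * p[0] - s * p[1] + tx, s * p[0] + c * p[1] + ty)


def weighted_align(src: List[Point], dst: List[Point], w: List[float]) -> Pose:
    """Closed-form fit minimising sum_i w_i * |R src_i + t - dst_i|^2."""
    W = sum(w)
    sx = sum(wi * p[0] for wi, p in zip(w, src)) / W
    sy = sum(wi * p[1] for wi, p in zip(w, src)) / W
    dx = sum(wi * q[0] for wi, q in zip(w, dst)) / W
    dy = sum(wi * q[1] for wi, q in zip(w, dst)) / W
    num = den = 0.0
    for wi, p, q in zip(w, src, dst):
        px, py, qx, qy = p[0] - sx, p[1] - sy, q[0] - dx, q[1] - dy
        num += wi * (px * qy - py * qx)  # weighted cross terms -> sin(theta)
        den += wi * (px * qx + py * qy)  # weighted dot terms -> cos(theta)
    th = math.atan2(num, den)
    c, s = math.cos(th), math.sin(th)
    return (dx - (c * sx - s * sy), dy - (s * sx + c * sy), th)


def scan_match(scan1: List[Point], scan2: List[Point], weights: List[float],
               guess: Pose = (0.0, 0.0, 0.0), iters: int = 30,
               max_dist: float = 0.5, tol: float = 1e-9) -> Pose:
    """Alternate point correspondence and weighted fitting until convergence."""
    pose = guess
    for _ in range(iters):
        src, dst, w = [], [], []
        for p, wi in zip(scan2, weights):
            tp = transform(pose, p)
            q = min(scan1, key=lambda r: math.dist(r, tp))  # nearest neighbour
            if math.dist(q, tp) <= max_dist:  # reject distant, likely wrong pairs
                src.append(p)
                dst.append(q)
                w.append(wi)
        if len(src) < 2:
            break
        new = weighted_align(src, dst, w)
        converged = all(abs(a - b) < tol for a, b in zip(new, pose))
        pose = new
        if converged:
            break
    return pose


# Synthetic test: two perpendicular walls, and Scan 2 taken after a small move.
true_pose = (0.05, -0.03, 0.02)
scan1 = [(0.5 * i, 0.0) for i in range(9)] + [(0.0, 0.5 * j) for j in range(1, 7)]


def inverse_transform(pose: Pose, q: Point) -> Point:
    tx, ty, th = pose
    c, s = math.cos(th), math.sin(th)
    x, y = q[0] - tx, q[1] - ty
    return (c * x + s * y, -s * x + c * y)


scan2 = [inverse_transform(true_pose, q) for q in scan1]
weights = [1.0 / (1.0 + p[0] ** 2 + p[1] ** 2) for p in scan2]  # hypothetical weighting
est = scan_match(scan1, scan2, weights)
print([round(v, 6) for v in est])
```

Brute-force nearest-neighbour search keeps the sketch short; a spatial index such as a k-d tree would be the usual choice for dense scans.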
