SLIDE 1 Review

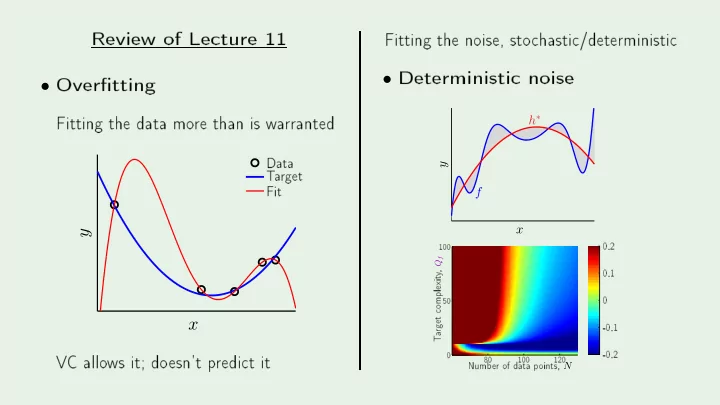

- Overfitting

Figure: fit versus target, plotted as y against x (legend: Data, Target, Fit)

- Deterministic noise

Figure: target f and hypothesis h∗, plotted as y against x

Figure: plot with axes for points, N and complexity