Visual Grounding in Video for Unsupervised Word Translation

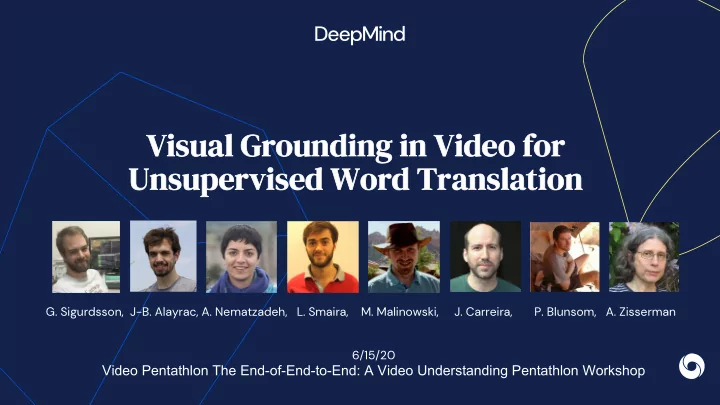

Visual Grounding in Video for Unsupervised Word Translation

G. Sigurdsson, J-B. Alayrac, A. Nematzadeh, L. Smaira, M. Malinowski, J. Carreira, P. Blunsom, A. Zisserman

6/15/20, Video Pentathlon. The End-of-End-to-End: A Video Understanding Pentathlon Workshop

How can we learn a link between different languages from unpaired narrated videos?

Our goal: relate different languages through the visual domain

- "Je casse les oeufs." (I break the eggs.)
- "I need to mix the eggs with the flour."

Our setup: unsupervised word translation

- Different videos in each language (no paired data)
- "Je casse les oeufs."
- "I need to mix the eggs with the flour."

Dataset: HowToWorld

We extend the HowTo100M [a] dataset to other languages. We follow the same collection procedure but obtain different videos, each narrated in its original language.

[a] HowTo100M: Learning a Text-Video Embedding by Watching Hundred Million Narrated Video Clips. Miech, Zhukov, Alayrac, Tapaswi, Laptev and Sivic, ICCV 2019.

Base Model: learn a joint space between languages and video

- Example narration: "mix eggs with flour"
- Training objective: contrastive loss [b]

[b] MIL-NCE: End-to-End Learning of Visual Representations from Uncurated Instructional Videos. Miech, Alayrac, Smaira, Laptev, Sivic and Zisserman, CVPR 2020.

Base Model: learn a joint space between languages and video

- Example narration: "je casse les oeufs"
- Training objective: contrastive loss
- Result: a bilingual-visual joint space
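To make the contrastive objective concrete, here is a minimal NumPy sketch of a symmetric NCE-style loss over a batch of matching (video, text) pairs. It is a simplified single-positive variant, not the MIL-NCE loss cited on the slide, and the names `contrastive_loss`, `video_emb` and `text_emb` are illustrative assumptions. In the bilingual setting one would presumably apply such a loss once per language against a shared video embedding.

```python
import numpy as np

def log_softmax(scores, axis):
    # Numerically stable log-softmax along the given axis.
    shifted = scores - scores.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def contrastive_loss(video_emb, text_emb):
    """Symmetric NCE-style loss (hypothetical helper, not MIL-NCE itself).

    Row i of video_emb and row i of text_emb form the positive pair;
    every other row in the batch acts as a negative.
    """
    scores = video_emb @ text_emb.T              # (batch, batch) dot products
    diag = np.arange(scores.shape[0])
    video_to_text = -log_softmax(scores, axis=1)[diag, diag].mean()
    text_to_video = -log_softmax(scores, axis=0)[diag, diag].mean()
    return 0.5 * (video_to_text + text_to_video)

# Toy data: random vectors stand in for encoder outputs.
rng = np.random.default_rng(0)
video = rng.normal(size=(8, 16))
aligned_text = video + 0.01 * rng.normal(size=(8, 16))  # narration matches its clip
shuffled_text = aligned_text[::-1]                       # narration paired with the wrong clip

loss_aligned = contrastive_loss(video, aligned_text)
loss_shuffled = contrastive_loss(video, shuffled_text)
```

The loss is low when each narration sits close to its own clip and high when the pairing is scrambled, which is the signal that pulls text and video into a common space.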

Base Model: learn a joint space between languages and video

Next, we evaluate the quality of the joint bilingual space with English-to-French word retrieval:

"Given a word in English, we score all French words using dot products in the joint space and report the percentage of times a correct translation is retrieved at rank 1 (R@1)."
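The retrieval metric described above can be sketched as follows. The vocabulary, the embedding values and the helper name `recall_at_k` are made up for illustration; only the scoring rule (dot products, then checking the top-ranked words) comes from the slide.

```python
import numpy as np

def recall_at_k(src_emb, tgt_emb, gold, k=1):
    """Percentage of source words with a correct translation in the top k.

    gold[i] is the set of target indices accepted as translations of
    source word i.
    """
    scores = src_emb @ tgt_emb.T                  # dot products in the joint space
    top_k = np.argsort(-scores, axis=1)[:, :k]    # best-scoring target words
    hits = [bool(gold[i] & set(top_k[i].tolist())) for i in range(len(gold))]
    return 100.0 * np.mean(hits)

# Toy vocabulary; the vectors are invented, not learned embeddings.
english = ["egg", "flour", "knife"]
french = ["oeuf", "farine", "couteau", "sel"]
en_emb = np.array([[1.0, 0.0, 0.0],
                   [0.0, 1.0, 0.0],
                   [0.0, 0.0, 1.0]])
fr_emb = np.array([[0.9, 0.1, 0.0],
                   [0.1, 0.9, 0.0],
                   [0.6, 0.0, 0.2],
                   [0.3, 0.3, 0.3]])
gold = [{0}, {1}, {2}]

r_at_1 = recall_at_k(en_emb, fr_emb, gold, k=1)   # "knife" retrieves "sel" first
r_at_2 = recall_at_k(en_emb, fr_emb, gold, k=2)   # "couteau" appears at rank 2
```

Here two of the three English words retrieve their translation at rank 1, and all three do within the top 2.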

Quantitative results for the Base Model

- Dictionary: 1000 words in English and French from (Conneau et al., 2017)
- Simple Words: top 1000 words from Wikipedia
- Visual: restricted to "visual" words (abstract concepts removed)

English-French, reporting recall@1 (R@1):

| Method | Dictionary (Conneau et al., 2017), All | Dictionary, Visual | Simple Words (top 1000 words Wikipedia), All | Simple Words, Visual |
|---|---|---|---|---|
| Random Chance | 0.1 | 0.2 | 0.1 | 0.2 |
| Base Model | 9.1 | 15.2 | 28.0 | 45.3 |

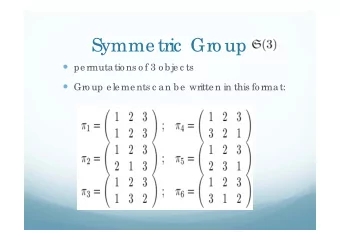

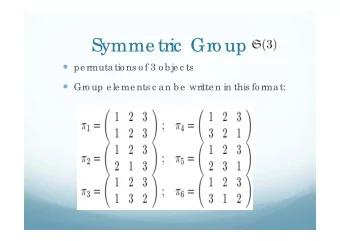

Do we need videos at all?

- It has been shown that one can align word embeddings in different languages via a simple transformation (rotation).
- Only a few correspondences are required to estimate this transformation.
- Unsupervised approaches that require no paired data to learn this alignment are also possible: [MUSE: Conneau et al., ICLR 2018], [VecMap: Artetxe et al., ACL 2018].
- These methods have robustness issues (e.g. with respect to language similarity and training corpora statistics). Can vision help there?

MUSE

1) Find an initial linear mapping via an adversarial approach.
2) From this initialization, find the most aligned word pairs and use them as anchors to refine the mapping with the Procrustes algorithm.
3) Normalize the distances using the local neighborhood.
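The Procrustes refinement in step 2 has a closed-form solution via the SVD. The sketch below shows that standard solution on synthetic anchors; the helper name `procrustes`, the dimensions and the noise level are assumptions for illustration, not values from the slides.

```python
import numpy as np

def procrustes(src_anchors, tgt_anchors):
    """Orthogonal W minimizing ||src_anchors @ W - tgt_anchors||_F."""
    u, _, vt = np.linalg.svd(src_anchors.T @ tgt_anchors)
    return u @ vt

# Synthetic anchors: target embeddings are a rotated, slightly noisy
# copy of the source embeddings.
rng = np.random.default_rng(0)
dim, n_anchors = 5, 50
true_rotation, _ = np.linalg.qr(rng.normal(size=(dim, dim)))
src = rng.normal(size=(n_anchors, dim))
tgt = src @ true_rotation + 0.01 * rng.normal(size=(n_anchors, dim))

w = procrustes(src, tgt)
max_error = np.abs(w - true_rotation).max()   # small when anchors are reliable
```

Because the solution is constrained to be orthogonal, it preserves distances within the source space, which is why a modest number of reliable anchor pairs is enough to estimate it.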

MUVE: Multilingual Unsupervised Visual Embeddings

MUSE:
1) Find an initial linear mapping via an adversarial approach.

MUVE:
1) Use AdaptLayer as an initial linear mapping.

Shared by both methods:
2) From this initialization, find the most aligned word pairs and use them as anchors to refine the mapping with the Procrustes algorithm.
3) Normalize the distances using the local neighborhood.
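Step 3 appears to correspond to the CSLS criterion (cross-domain similarity local scaling) introduced in the MUSE paper, which subtracts from each cosine similarity the average similarity of both words to their nearest neighbors in the other language. The sketch below assumes that reading; the helper name `csls`, the choice `k=2` and the toy 2-D vectors are illustrative.

```python
import numpy as np

def csls(src_emb, tgt_emb, k=2):
    """CSLS scores: 2 * cos(x, y) - r_src(x) - r_tgt(y)."""
    src = src_emb / np.linalg.norm(src_emb, axis=1, keepdims=True)
    tgt = tgt_emb / np.linalg.norm(tgt_emb, axis=1, keepdims=True)
    cos = src @ tgt.T
    # Mean cosine of each source word to its k nearest target words.
    r_src = np.sort(cos, axis=1)[:, -k:].mean(axis=1, keepdims=True)
    # Mean cosine of each target word to its k nearest source words.
    r_tgt = np.sort(cos, axis=0)[-k:, :].mean(axis=0, keepdims=True)
    return 2 * cos - r_src - r_tgt

def unit(angle_deg):
    # Unit vector in 2-D at the given angle.
    a = np.deg2rad(angle_deg)
    return [np.cos(a), np.sin(a)]

# Two source words and three target words. The third target is a "hub"
# that is fairly close to every source word.
src_emb = np.array([unit(0), unit(90)])
tgt_emb = np.array([unit(-50), unit(90), unit(45)])

cos = src_emb @ tgt_emb.T                    # inputs are already unit-norm
plain_nn = cos.argmax(axis=1)                # the hub wins for source word 0
csls_nn = csls(src_emb, tgt_emb, k=2).argmax(axis=1)
```

In this toy case plain cosine retrieval maps the first source word to the hub, while the CSLS scores penalize the hub for being close to everything and recover the intended target.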

MUVE vs Base Model

English-French, reporting R@1:

| Method | Dictionary (Conneau et al., 2017), All | Dictionary, Visual | Simple Words (top 1000 words), All | Simple Words, Visual |
|---|---|---|---|---|
| Random Chance | 0.1 | 0.2 | 0.1 | 0.2 |
| Base Model | 9.1 | 15.2 | 28.0 | 45.3 |
| MUVE | 28.9 | 39.5 | 58.3 | 67.5 |

Performance of models across language pairs

Larger gap in performance for more distant languages (reporting R@1):

| Method | En-Fr | En-Ko | En-Ja |
|---|---|---|---|
| MUSE (Conneau et al., 2017) | 26.3 | 11.8 | 11.6 |
| VecMap (Artetxe et al., 2018) | 28.4 | 13.0 | 13.7 |
| MUVE (ours) | 28.9 | 17.7 | 15.1 |
| Supervised | 57.9 | 41.8 | 41.1 |

Robustness to dissimilarity of text corpora for embedding pretraining

Reporting R@10:

| HowTo-Fr paired with | MUSE (Conneau et al., 2017) | VecMap (Artetxe et al., 2018) | MUVE (ours) |
|---|---|---|---|
| HowTo-En | 45.8 | 45.4 | 47.3 |
| WMT-En | 0.3 | 0.2 | 26.4 |
| Wiki-En | 0.3 | 0.1 | 32.6 |

Conclusion and Future work

Takeaways

Conclusion
- Unsupervised word translation through visual grounding is possible from unpaired and uncurated narrated videos.
- The information contained in vision is complementary to that contained in the structure of the languages, which enables a better and more robust approach (MUVE) for unsupervised word translation.

Future work
- From words to sentences.
- Using multilingual datasets to learn better visual representations (more data sources, less biased, …).

Links: Paper, Blog post

Q&A Time, CVPR 2020: Thursday, June 18, 2020, 9-11 AM and 9-11 PM PT
