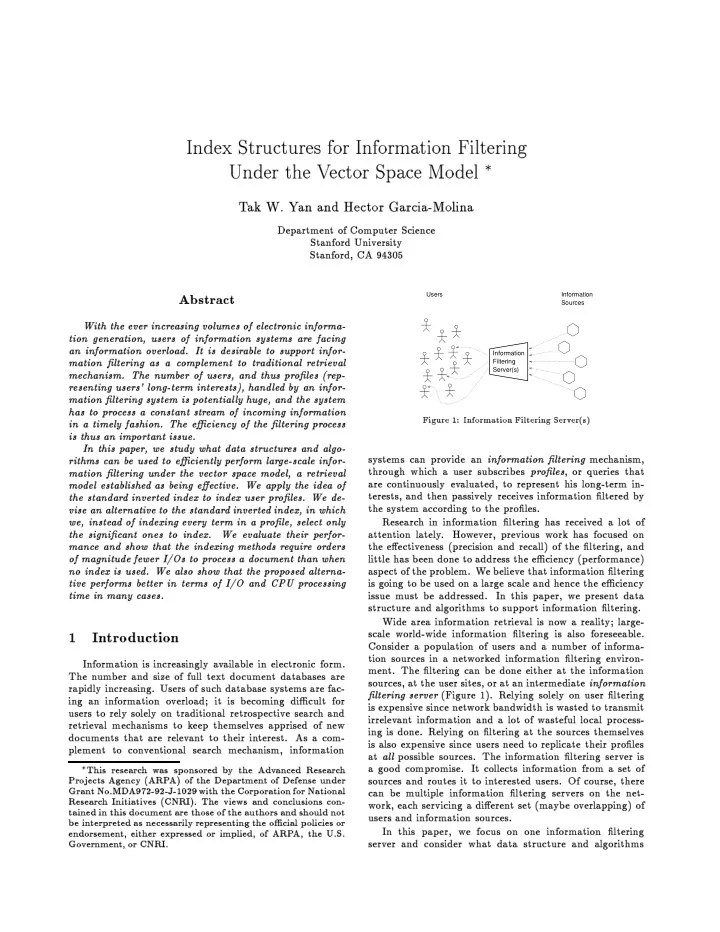

Figure 8: Total Size vs. Profile Length p. (Plot: Blocks (x 1000) against Profile Length, 1 to 10, for BF, PI (Contiguous), PI (Fragmented), SPI (Contiguous), SPI (Fragmented).)

Figure 9: Disk I/Os Per Document vs. Profile Length p. (Plot: Blocks against Profile Length, 1 to 10, for PI and SPI.)

in the other methods better. The sharp drop in the number of I/Os required corresponds to the shrinking of the list length (from 2 blocks to 1 block). Thereafter, the number of I/Os increases, as the number of lists read per document increases (due to the increase in the queried vocabulary size). The rise is more prominent in PI than in SPI. For the number of multiplications per document (Figure 7), SPI is better than the other methods throughout. The trend is downward for all methods, as more infrequent terms appear in profiles.

5.3 Profile Length

The next parameter that we vary is the profile length. Figures 8 and 9 show the results. For contiguous allocation, the total space requirement grows with p for all methods (Figure 8). For fragmented allocation with a small p, each inverted list fits in one block, so the total size remains constant at the queried vocabulary size. With larger p, the lists grow longer, so the total space requirement grows as well. The SPI method grows at a faster rate than the PI method. The number of disk I/Os required by the SPI method initially decreases as p is increased from 1 (Figure 9). This is because it becomes more likely that a profile includes infrequent terms and is thus indexed by those terms. With the longer lists at larger p (greater than 7), its performance deteriorates and then stabilizes. On the other hand, for the number of multiplications, SPI is always better than the two other methods (graphs omitted).

Figure 10: Total Size vs. Number of Profiles n. (Plot: Blocks (x 1000) against Number of Profiles (x 1000), 100 to 800, for BF, PI (Contiguous), PI (Fragmented), SPI (Contiguous), SPI (Fragmented).)

Figure 11: Disk I/Os Per Document vs. Number of Profiles n. (Plot: Blocks against Number of Profiles (x 1000), 100 to 800, for PI and SPI.)
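The space behavior described for Figure 8 (fragmented allocation flat at one block per list, then jumping once lists outgrow a block, while contiguous allocation grows steadily) can be illustrated with a small block-count model. The Python sketch below is a simplified illustration under stated assumptions, not the authors' simulator: it assumes every posting has the same size, that a block holds a fixed, hypothetical number of postings (`BLOCK_CAPACITY`), that contiguous allocation packs all lists back to back, and that fragmented allocation gives each inverted list whole blocks of its own. The I/O function additionally assumes that the full inverted list of every document term in the queried vocabulary is read. All function names and parameter values are hypothetical.

```python
# Hypothetical block-count model for inverted lists; not the paper's simulator.

BLOCK_CAPACITY = 100  # assumed number of postings per disk block


def ceil_div(a: int, b: int) -> int:
    """Integer ceiling of a / b for non-negative a and positive b."""
    return (a + b - 1) // b


def contiguous_blocks(list_lengths: list[int], capacity: int = BLOCK_CAPACITY) -> int:
    """Contiguous allocation: lists are packed back to back, so space
    tracks the total number of postings."""
    return ceil_div(sum(list_lengths), capacity)


def fragmented_blocks(list_lengths: list[int], capacity: int = BLOCK_CAPACITY) -> int:
    """Fragmented allocation: each list occupies whole blocks of its own,
    so even a short list costs one block."""
    return sum(max(1, ceil_div(n, capacity)) for n in list_lengths)


def ios_per_document(
    doc_terms: list[str],
    list_lengths_by_term: dict[str, int],
    capacity: int = BLOCK_CAPACITY,
) -> int:
    """Blocks read for one document under fragmented allocation, assuming the
    full list of each distinct document term in the queried vocabulary is read."""
    return sum(
        max(1, ceil_div(list_lengths_by_term[t], capacity))
        for t in set(doc_terms)
        if t in list_lengths_by_term  # terms outside the queried vocabulary cost nothing
    )


if __name__ == "__main__":
    vocab_size = 1000  # assumed queried vocabulary size
    for avg_len in (40, 80, 120, 160):
        lengths = [avg_len] * vocab_size
        print(avg_len, contiguous_blocks(lengths), fragmented_blocks(lengths))
    # 40 400 1000
    # 80 800 1000
    # 120 1200 2000
    # 160 1600 2000
    print(ios_per_document(["a", "c", "z"], {"a": 150, "b": 90, "c": 30}))  # 3
```

With uniform list lengths, the fragmented total stays at one block per list (1000, the assumed vocabulary size) until lists exceed the block capacity and then doubles, while the contiguous total rises linearly. This is qualitatively the shape described for the fragmented and contiguous curves in Figures 8 and 10.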

5.4 Number of Profiles

We vary the number of profiles from 100,000 to 800,000. For the total space requirement (results shown in Figure 10), we obtain a graph similar to that for p. For contiguous allocation, the space requirement grows linearly with n. For fragmented allocation, the space required is at first constant and then increases: each inverted list fits in 1 block at the beginning, but as n increases, 2 blocks are needed to hold a list. The lists grow at a faster rate in the SPI method initially, but PI soon catches up with it. Figure 11 shows the results for the number of disk I/Os required per document; those for the BF method are omitted. We see there is a range of n values where SPI requires more I/Os per document; this happens when an SPI in-