CIS 501: Comp. Arch. | Prof. Milo Martin | Instruction Sets 1

CIS 501: Computer Architecture

Unit 2: Instruction Set Architectures

Slides developed by Milo Martin & Amir Roth at the University of Pennsylvania with sources that included University of Wisconsin slides by Mark Hill, Guri Sohi, Jim Smith, and David Wood

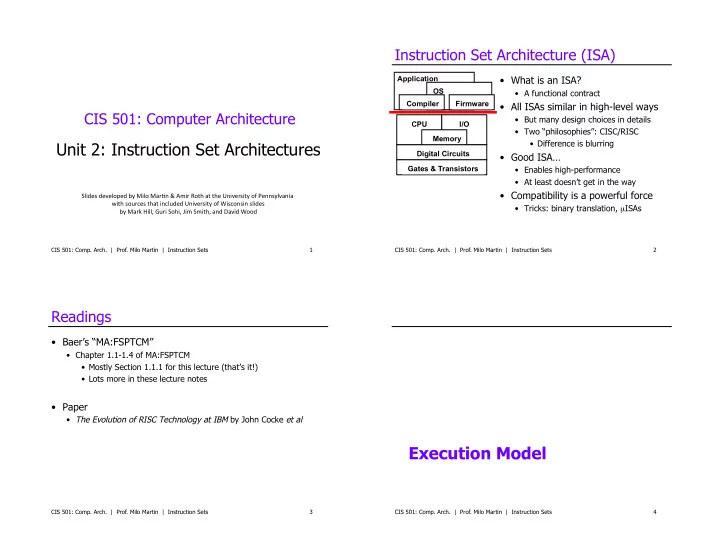

Instruction Set Architecture (ISA)

- What is an ISA?

  - A functional contract

- All ISAs similar in high-level ways

  - But many design choices in details

  - Two “philosophies”: CISC/RISC

    - Difference is blurring

- Good ISA…

  - Enables high performance

  - At least doesn’t get in the way

- Compatibility is a powerful force

  - Tricks: binary translation, µISAs

[Figure: system abstraction layers: Application, OS, Firmware, Compiler, CPU, I/O, Memory, Digital Circuits, Gates & Transistors]

Readings

- Baer’s “MA:FSPTCM”

  - Chapter 1.1-1.4 of MA:FSPTCM

  - Mostly Section 1.1.1 for this lecture (that’s it!)

  - Lots more in these lecture notes

- Paper

  - “The Evolution of RISC Technology at IBM” by John Cocke et al.

Execution Model
