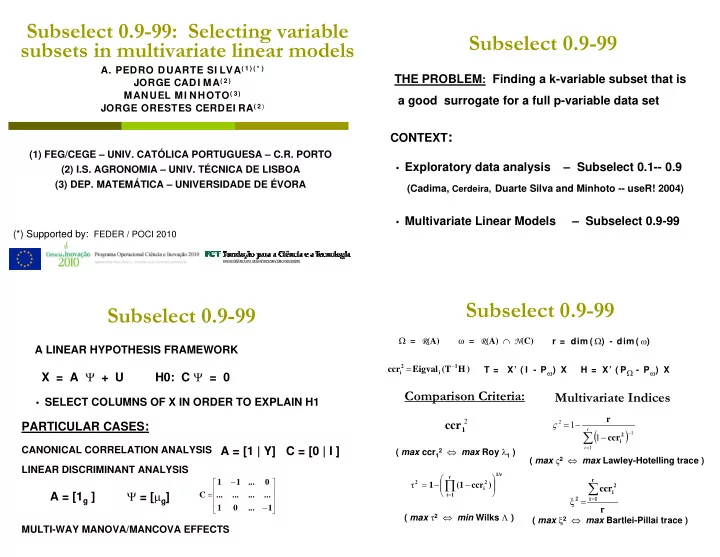

Subselect 0.9-99: Selecting variable subsets in multivariate linear models

A. PEDRO DUARTE SILVA (1) (*), JORGE CADIMA (2), MANUEL MINHOTO (3), JORGE ORESTES CERDEIRA (2)

(1) FEG/CEGE – UNIV. CATÓLICA PORTUGUESA – C.R. PORTO

(2) I.S. AGRONOMIA – UNIV. TÉCNICA DE LISBOA

(3) DEP. MATEMÁTICA – UNIVERSIDADE DE ÉVORA

(*) Supported by: FEDER / POCI 2010


THE PROBLEM: Finding a k-variable subset that is a good surrogate for a full p-variable data set

CONTEXT:

- Exploratory data analysis (Cadima, Cerdeira, Duarte Silva and Minhoto, useR! 2004) – Subselect 0.1 to 0.9

- Multivariate Linear Models – Subselect 0.9-99

A LINEAR HYPOTHESIS FRAMEWORK

$$X = A\Psi + U, \qquad H_0: C\Psi = 0$$

- SELECT COLUMNS OF X IN ORDER TO EXPLAIN H1

PARTICULAR CASES:

- CANONICAL CORRELATION ANALYSIS: $A = [\,1 \mid Y\,]$, $C = [\,0 \mid I\,]$

- LINEAR DISCRIMINANT ANALYSIS: $A = [\,1_g\,]$, $\Psi = [\,\mu_g\,]$,

$$C = \begin{bmatrix} 1 & \cdots & -1 \\ \vdots & \ddots & \vdots \\ \cdots & 1 & -1 \end{bmatrix}$$

- MULTI-WAY MANOVA/MANCOVA EFFECTS
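As a small illustration of the linear discriminant analysis case, the sketch below builds the pieces of the hypothesis for toy data. It relies on a standard fact: when A is the group-indicator matrix, the least-squares estimate of Ψ is the matrix of group means, so CΨ holds the differences between each group mean and the last one, and H0 states that all of them vanish. The helper names (`group_means`, `contrast_matrix`, `matmul`) and the data are hypothetical and are not part of Subselect.

```python
# Illustrative sketch only: toy data and helper names are assumptions,
# not part of the Subselect package.

def group_means(X, groups, g):
    """Least-squares estimate of Psi when A is the n x g group-indicator
    matrix: row j of the result is the mean vector of group j."""
    p = len(X[0])
    sums = [[0.0] * p for _ in range(g)]
    counts = [0] * g
    for row, j in zip(X, groups):
        counts[j] += 1
        for v in range(p):
            sums[j][v] += row[v]
    return [[s / counts[j] for s in sums[j]] for j in range(g)]


def contrast_matrix(g):
    """(g-1) x g matrix C = [I | -1]: row i compares group i with the last group."""
    return [
        [1.0 if c == i else (-1.0 if c == g - 1 else 0.0) for c in range(g)]
        for i in range(g - 1)
    ]


def matmul(M, N):
    """Plain-Python matrix product of two lists of rows."""
    return [
        [sum(M[i][k] * N[k][j] for k in range(len(N))) for j in range(len(N[0]))]
        for i in range(len(M))
    ]


# Six observations on p = 2 variables, split into g = 3 groups.
X = [[1.0, 2.0], [3.0, 4.0], [5.0, 1.0], [7.0, 3.0], [2.0, 2.0], [4.0, 6.0]]
groups = [0, 0, 1, 1, 2, 2]

psi_hat = group_means(X, groups, 3)          # estimated group means
c_psi = matmul(contrast_matrix(3), psi_hat)  # mean differences tested by H0
```

Each row of `c_psi` is the difference between one group's mean vector and that of the last group; under H0 these rows would all be zero in the population.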


Comparison Criteria: Multivariate Indices

$$ccr_1^2$$

$$\tau^2 = 1 - \left( \prod_{i=1}^{r} \left(1 - ccr_i^2\right) \right)^{1/r}$$

$$\varsigma^2 = 1 - \frac{r}{\sum_{i=1}^{r} \left(1 - ccr_i^2\right)^{-1}}$$

$$\xi^2 = \frac{\sum_{i=1}^{r} ccr_i^2}{r}$$

where $ccr_i^2 = \mathrm{Eigval}_i\,(T^{-1}H)$ and

- $T = X'(I - P_\omega)X$

- $H = X'(P_\Omega - P_\omega)X$

- $\Omega = R(A)$

- $\omega = R(A) \cap N(C)$

- $r = \dim(\Omega) - \dim(\omega)$

Equivalences:

- max $ccr_1^2$ ⇔ max Roy $\lambda_1$

- max $\varsigma^2$ ⇔ max Lawley-Hotelling trace

- max $\tau^2$ ⇔ min Wilks $\Lambda$

- max $\xi^2$ ⇔ max Bartlett-Pillai trace
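The four indices are simple functions of the squared canonical correlations, which is why each one is monotonically related to a classical test statistic. The sketch below assumes the values ccr_i² (the eigenvalues of T⁻¹H) have already been computed and are strictly below 1. The function name `indices_from_ccr2` and the dictionary keys are hypothetical and are not Subselect identifiers.

```python
import math


def indices_from_ccr2(ccr2):
    """Compute the four comparison indices from squared canonical correlations.

    ccr2: list of the r values ccr_i^2, each assumed to lie in [0, 1).
    Returns the indices together with the classical statistics they track.
    """
    r = len(ccr2)
    wilks = math.prod(1.0 - c for c in ccr2)             # Wilks Lambda
    pillai = sum(ccr2)                                    # Bartlett-Pillai trace
    lawley_hotelling = sum(c / (1.0 - c) for c in ccr2)   # Lawley-Hotelling trace
    return {
        "ccr1_2": max(ccr2),                              # tracks Roy's largest root
        "tau_2": 1.0 - wilks ** (1.0 / r),                # decreasing in Wilks Lambda
        "varsigma_2": 1.0 - r / sum(1.0 / (1.0 - c) for c in ccr2),
        "xi_2": pillai / r,                               # Pillai trace divided by r
        "wilks": wilks,
        "pillai": pillai,
        "lawley_hotelling": lawley_hotelling,
    }


# Toy input: r = 2 squared canonical correlations (made-up values).
idx = indices_from_ccr2([0.5, 0.2])

# With V the Lawley-Hotelling trace, varsigma^2 equals V / (V + r),
# so maximising one maximises the other.
v = idx["lawley_hotelling"]
same_as_ratio = math.isclose(idx["varsigma_2"], v / (v + 2))
```

Writing ς² as V / (V + r), with V the Lawley-Hotelling trace, makes the stated equivalence explicit: the index is an increasing function of V. Likewise τ² is a decreasing function of Wilks Λ and ξ² is the Bartlett-Pillai trace divided by r.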