ST 516 Experimental Statistics for Engineers II

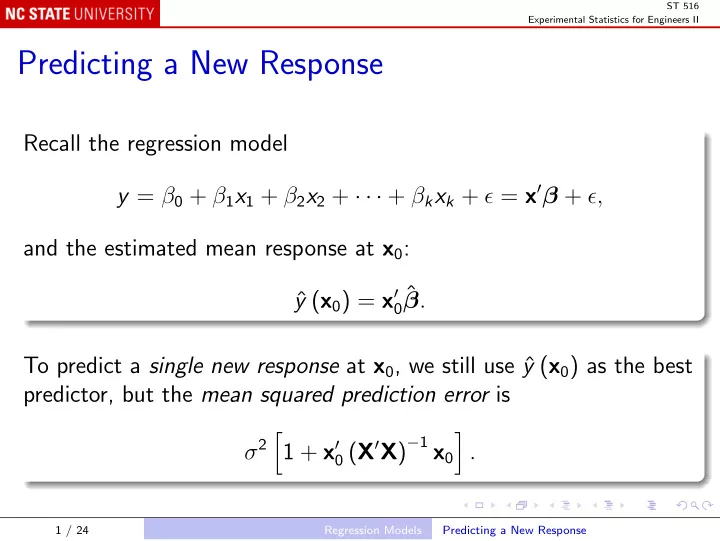

Predicting a New Response

Recall the regression model $y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \cdots + \beta_k x_k + \epsilon = x'\beta + \epsilon$, and the estimated mean response at $x_0$: $\hat{y}(x_0) = x_0'\hat{\beta}$. To predict a single new response at $x_0$, we still use $\hat{y}(x_0)$ as the best predictor, but the mean squared prediction error is

$$\sigma^2\left[1 + x_0'(X'X)^{-1}x_0\right].$$
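The formula can be checked numerically with a minimal NumPy sketch. It assumes that the model matrix $X$ already contains a leading column of ones for $\beta_0$, that $x_0$ is laid out like a row of $X$, and that $\sigma^2$ is replaced by its usual estimate, the residual mean square $SSE/(n - p)$. The function name `predict_new_response` and the toy data are hypothetical. The function returns $\hat{y}(x_0)$, the estimated variance of $\hat{y}(x_0)$ as an estimate of the mean response, and the estimated mean squared prediction error. The last two differ by exactly $\hat{\sigma}^2$, which accounts for the error $\epsilon$ of the new observation itself; that error is independent of the data used to compute $\hat{\beta}$.

```python
import numpy as np


def predict_new_response(X, y, x0):
    """Hypothetical helper: point prediction and estimated MSPE at x0.

    X  : (n, p) model matrix, first column of ones for beta_0
    y  : (n,) response vector
    x0 : (p,) new point, laid out like a row of X (leading 1 included)
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    x0 = np.asarray(x0, dtype=float)
    n, p = X.shape

    # (X'X)^{-1}; an explicit inverse is used only to mirror the formula
    XtX_inv = np.linalg.inv(X.T @ X)
    beta_hat = XtX_inv @ X.T @ y           # least squares estimate of beta
    resid = y - X @ beta_hat
    sigma2_hat = resid @ resid / (n - p)   # SSE / (n - p), estimates sigma^2

    y0_hat = x0 @ beta_hat                 # yhat(x0) = x0' beta_hat
    h00 = x0 @ XtX_inv @ x0                # x0' (X'X)^{-1} x0
    var_mean = sigma2_hat * h00            # estimated Var of yhat(x0) for the mean
    mspe = sigma2_hat * (1.0 + h00)        # estimated mean squared prediction error
    return y0_hat, var_mean, mspe


# Illustrative (made-up) data: straight-line fit y = beta_0 + beta_1 x
x = np.array([0.0, 1.0, 2.0, 3.0])
X = np.column_stack([np.ones_like(x), x])
y = np.array([1.0, 3.0, 2.0, 5.0])
y0_hat, var_mean, mspe = predict_new_response(X, y, [1.0, 4.0])
# approximately: y0_hat = 5.5, var_mean = 2.025, mspe = 3.375
```

For these toy data $\hat{\sigma}^2 = 1.35$ and $x_0'(X'X)^{-1}x_0 = 1.5$, so the prediction error estimate $1.35 \times 2.5$ is noticeably larger than the $1.35 \times 1.5$ for the mean response. A prediction interval would multiply the square root of `mspe` by a $t$ quantile with $n - p$ degrees of freedom; that step is left out here to keep the sketch NumPy-only.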

1 / 24 Regression Models Predicting a New Response