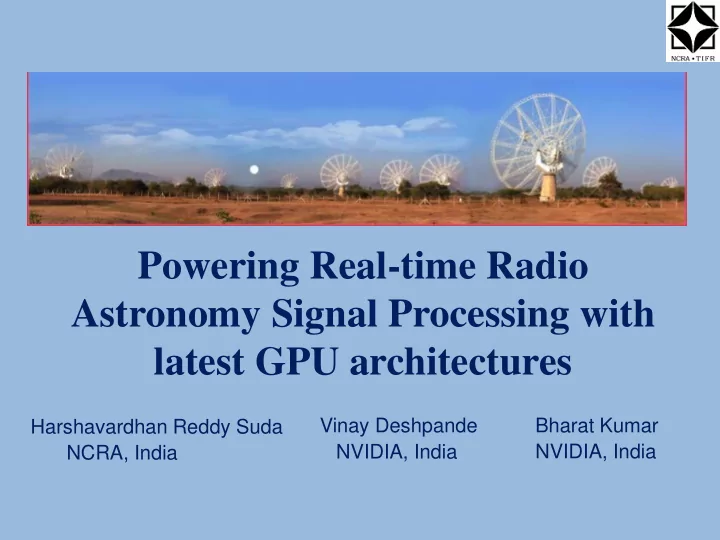

Powering Real-time Radio Astronomy Signal Processing with latest GPU architectures

Harshavardhan Reddy Suda (NCRA, India), Vinay Deshpande (NVIDIA, India), Bharat Kumar (NVIDIA, India)


What signals are we processing? Digitized baseband signals


▪ The Giant Metrewave Radio Telescope (GMRT) is a world-class instrument for studying astrophysical phenomena at low radio frequencies
▪ Located 80 km north of Pune, 160 km east of Mumbai
▪ Array telescope with 30 antennas of 45 m diameter, operating at meter wavelengths

▪ Supports two modes of operation :

▪ Frequency bands :

▪ Maximum instantaneous bandwidth: 400 MHz (Legacy GMRT: 32 MHz)
▪ Effective collecting area (2-3% of SKA)

Image courtesy : Ajith Kumar, NCRA

Sampler → Fourier Transform, O(N log N) → Phase Correction → MAC over M(M+1)/2 antenna pairs

Antenna signals (M = 64)
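The chain above (Fourier transform, phase correction, multiply-accumulate over antenna pairs) can be illustrated with a small pure-Python sketch. This is not the GMRT code: the function names `fft` and `fx_correlate`, the `phases` table, and the use of a real-input transform of length 2 × channels are all assumptions made for illustration, and the sizes are kept tiny so it runs without a GPU.

```python
import cmath
from itertools import combinations_with_replacement


def fft(x):
    """Recursive radix-2 FFT, O(N log N); len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return [complex(x[0])]
    even, odd = fft(x[0::2]), fft(x[1::2])
    half = n // 2
    tw = [cmath.exp(-2j * cmath.pi * k / n) * odd[k] for k in range(half)]
    return ([even[k] + tw[k] for k in range(half)]
            + [even[k] - tw[k] for k in range(half)])


def fx_correlate(voltages, n_chan, phases=None):
    """Hypothetical FX correlator sketch.

    voltages: list of M lists of real samples (one list per antenna signal).
    phases:   optional M x n_chan table of phase corrections in radians.
    Returns {(i, j): [n_chan accumulated products]} for all pairs i <= j.
    """
    n_fft = 2 * n_chan                      # assumed: real input, 2*n_chan samples per spectrum
    n_ant = len(voltages)
    n_spec = len(voltages[0]) // n_fft      # number of spectra to accumulate
    pairs = list(combinations_with_replacement(range(n_ant), 2))  # M(M+1)/2 pairs
    vis = {p: [0j] * n_chan for p in pairs}
    for s in range(n_spec):
        spectra = []
        for a in range(n_ant):
            block = voltages[a][s * n_fft:(s + 1) * n_fft]
            spec = fft(block)[:n_chan]      # keep the positive-frequency channels
            if phases is not None:          # per-antenna, per-channel phase correction
                spec = [z * cmath.exp(-1j * phases[a][c]) for c, z in enumerate(spec)]
            spectra.append(spec)
        for (i, j) in pairs:                # MAC: accumulate X_i * conj(X_j) per channel
            acc = vis[(i, j)]
            for c in range(n_chan):
                acc[c] += spectra[i][c] * spectra[j][c].conjugate()
    return vis
```

With M = 64 the pair list has 64 × 65 / 2 = 2080 entries, which is why the MAC stage dominates the compute budget.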

Maximum Bandwidth 400 MHz

Compute requirement for 16k-point spectral channels:
Fourier Transform: 3 TFlops
Phase Correction: 0.1 TFlops
MAC: 6.6 TFlops
Total: ~10 TFlops
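As a rough cross-check, the 6.6 TFlops figure is consistent with a multiply-accumulate over M(M+1)/2 pairs at the full 400 MHz bandwidth. The sketch below assumes 8 floating-point operations per complex multiply-accumulate and one complex spectral sample per hertz of bandwidth per second for each antenna signal; both are assumptions of this example, not statements from the slides.

```python
M = 64                       # antenna signals (from the slide)
bandwidth_hz = 400e6         # maximum instantaneous bandwidth (from the slide)

pairs = M * (M + 1) // 2     # number of antenna pairs, self-pairs included
flops_per_cmac = 8           # assumption: complex multiply (6 flops) + complex add (2 flops)
samples_per_s = bandwidth_hz # assumption: B complex spectral samples per second per signal

mac_tflops = pairs * flops_per_cmac * samples_per_s / 1e12

# Sum of the three stage figures quoted on the slide
total_tflops = 3.0 + 0.1 + 6.6

print(f"pairs = {pairs}")
print(f"MAC estimate = {mac_tflops:.2f} TFlops")
print(f"sum of quoted stages = {total_tflops:.1f} TFlops")
```

Under these assumptions the MAC estimate comes to about 6.66 TFlops, and the three quoted stages sum to 9.7 TFlops, matching the "~10 TFlops" total.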

A 4-node example

Ant 1, Ant 2, ..., Ant 16: digitized baseband signals of the antennas

▪ 16 Dell T630 machines as Compute Nodes
▪ 16 ROACH (FPGA) boards with Atmel/e2v-based ADCs, developed by the CASPER group, Berkeley, for digitization and packetization
▪ 32 Tesla K40c GPU cards for processing
▪ 36-port Mellanox InfiniBand switch for data sharing between Compute Nodes and Host Nodes
▪ Software: C/C++ and CUDA C programming with OpenMPI and OpenMP directives
▪ Developed in collaboration with Swinburne University, Australia

Image courtesy : Irappa Halagalli, NCRA

Legacy GMRT, 325 MHz: 350 μJy
Upgraded GMRT, 300–500 MHz: 28 μJy
Significantly lower noise RMS and better image quality with upgraded GMRT

Dharam Vir Lal and Ishwar Chandra, NCRA

Image of Coma cluster

| Channels | FFT (Gflops) | MAC (Gflops) |
|----------|--------------|--------------|
| 2048     | 620          | 626          |
| 4096     | 626          | 620          |
| 8192     | 512          | 574          |
| 16384    | 498          | 537          |

CUDA 7.5

▪ Adding more compute-intensive applications

▪ The working GMRT system and code provide an excellent testing ground for the features of next-generation GPUs
▪ Performance measured and compared on GP100 and V100

CUDA 7.5, ECC off

Performance follows cuFFT benchmarks for K40 and P100
Reference for K40 benchmark: CUDA 6.5 performance report, September 2014
Reference for P100 benchmark: CUDA 8 Performance Overview, November 2016


Peak global memory bandwidth: K40 – 288 GB/s, GP100 – 732 GB/s
Peak performance: K40 – 4.3 TFlops, GP100 – 9.3 TFlops

Bandwidth : 200 MHz

Spectral Channels : 16384

GP100 on CUDA 7.5, V100 on CUDA 9.1 (using PSG cluster)


Peak global memory bandwidth: GP100 – 732 GB/s, V100 – 900 GB/s
Peak performance: GP100 – 9.3 TFlops, V100 – 14 TFlops

▪ Non-contiguous Global Memory access at block level

MAC input data format

▪ Low Arithmetic Intensity
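To see why low arithmetic intensity matters, the peak figures quoted in this deck can be turned into a simple roofline estimate. The peak numbers below are the ones on the slides; the kernel intensity of 0.5 flops/byte is a hypothetical value for a MAC with no data reuse (8 flops per pair of 8-byte complex values loaded) and is an assumption of this sketch, not a measurement.

```python
# Peak figures as quoted on the slides: (TFlops, GB/s)
gpus = {
    "K40":   (4.3, 288.0),
    "GP100": (9.3, 732.0),
    "V100":  (14.0, 900.0),
}


def machine_balance(peak_tflops, bw_gbs):
    """Flops per byte a kernel needs to become compute-bound."""
    return peak_tflops * 1e12 / (bw_gbs * 1e9)


def attainable_gflops(peak_tflops, bw_gbs, intensity):
    """Roofline bound: min(peak compute, intensity * memory bandwidth)."""
    return min(peak_tflops * 1000.0, intensity * bw_gbs)


# Hypothetical: 8 flops per 16 bytes loaded, i.e. no reuse of loaded samples
naive_intensity = 0.5

for name, (peak, bw) in gpus.items():
    print(f"{name}: balance {machine_balance(peak, bw):.1f} flops/byte, "
          f"bandwidth-bound ceiling {attainable_gflops(peak, bw, naive_intensity):.0f} Gflops")
```

All three GPUs need roughly 13 to 16 flops per byte to reach peak compute, so a kernel far below that is limited by memory traffic, which is why cache reuse and load efficiency dominate the tuning.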

▪ MAC:
  ▪ Simplified index arithmetic
  ▪ Improved the L2 hit ratio: from less than 5% to nearly 86%
  ▪ Vectorized loads (float4): increased ILP
  ▪ Exposed more parallelism by increasing the occupancy
  ▪ Single precision to half precision floating point: no performance gain
▪ FFT: single precision to half precision floating point

V100 on CUDA 9.1 (using PSG cluster)


Spectral channels: 2048; batch size: 128


▪ Improving MAC using Tensor Cores: potential 2x improvement
▪ Implementing the MAC optimizations and half-precision floating point FFT in the GMRT code
▪ Optimized FIR filtering routines in CUDA for narrow-band mode implementation
▪ Implementing multi-beamforming, beam steering and gated correlator


▪ Ajith Kumar B., Back-end group co-ordinator, GMRT, NCRA
▪ Sanjay Kudale, GMRT, NCRA
▪ Shelton Gnanaraj, GMRT, NCRA
▪ Andrew Jameson, Swinburne University, Australia
▪ Benjamin Barsdell, Swinburne University, Australia (now at NVIDIA)
▪ CASPER Group, Berkeley
▪ Digital Back-end Group, GMRT, NCRA
▪ Computer Group, GMRT, NCRA
▪ Control Room, GMRT