P age 1

CS252/ Culler Lec 4. 1 1/ 31/ 02

CS252 Graduate Computer Architecture Lecture 7 Cache Design (continued)

Feb 12, 2002 Prof . David Culler

CS252/ Culler Lec 4. 2 1/ 31/ 02

How to I mprove Cache Perf ormance?

- 1. Reduce the miss rate,

- 2. Reduce t he miss penalt y, or

- 3. Reduce the time to hit in the cache.

y MissPenalt MissRate HitTime AMAT × + =

CS252/ Culler Lec 4. 3 1/ 31/ 02

Where to misses come f rom?

- Classif ying Misses: 3 Cs

– Compulsory—The f irst access to a block is not in the cache,

so the block must be brought into the cache. Also called cold start misses or f irst ref erence misses. (Misses in even an I nf inite Cache)

– Capacit y—I f the cache cannot contain all the blocks needed

during execution of a program, capacity misses will occur due t o blocks being discarded and later retrieved. (Misses in Fully Associative Size X Cache)

– Conf lict—I f block- placement strategy is set associative or

direct mapped, conf lict misses (in addition to compulsory & capacity misses) will occur because a block can be discarded and later retrieved if too many blocks map to its set. Also called collision misses or interf erence misses. (Misses in N- way Associative, Size X Cache)

- 4t h “C”:

– Coherence - Misses caused by cache coherence.

CS252/ Culler Lec 4. 4 1/ 31/ 02

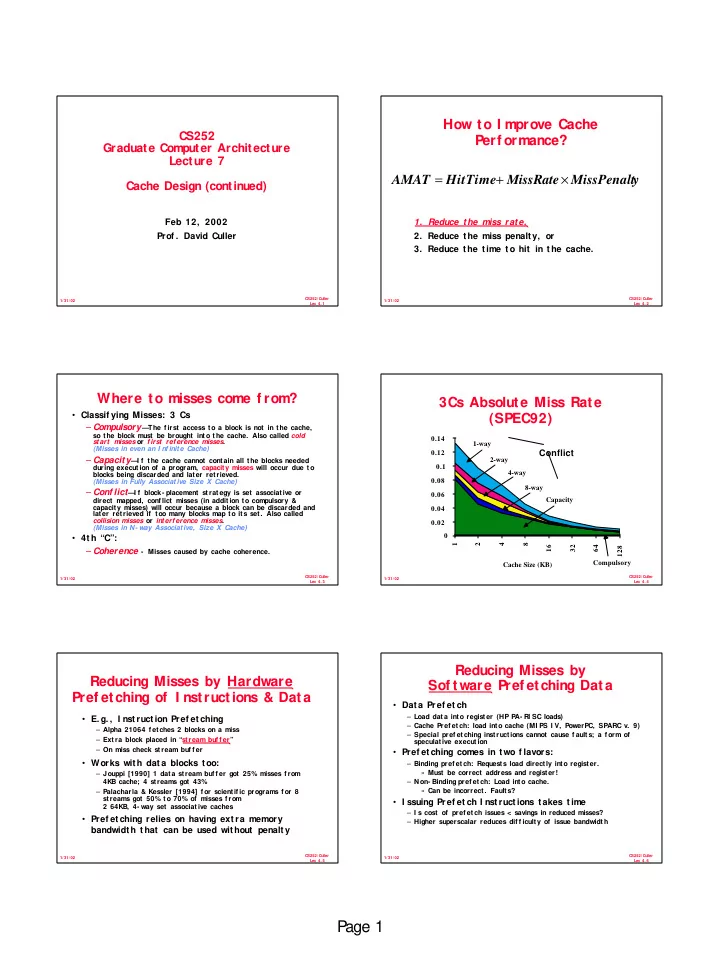

Cache Size (KB) 0.02 0.04 0.06 0.08 0.1 0.12 0.14 1 2 4 8 16 32 64 128 1-way 2-way 4-way 8-way Capacity Compulsory

3Cs Absolute Miss Rate (SPEC92)

Conflict

CS252/ Culler Lec 4. 5 1/ 31/ 02

Reducing Misses by Hardware Pref etching of I nstructions & Data

- E. g. , I nst ruct ion Pref et ching

– Alpha 21064 f etches 2 blocks on a miss – Extra block placed in “stream buf f er” – On miss check stream buf f er

- Works wit h dat a blocks t oo:

– Jouppi [1990] 1 data stream buf f er got 25% misses f rom 4KB cache; 4 streams got 43% – Palacharla & Kessler [1994] f or scientif ic programs f or 8 streams got 50% to 70% of misses f rom 2 64KB, 4- way set associative caches

- Pref et ching relies on having ext ra memory

bandwidt h t hat can be used wit hout penalt y

CS252/ Culler Lec 4. 6 1/ 31/ 02

Reducing Misses by Sof t ware Pref etching Dat a

- Data Pref et ch

– Load data into register (HP PA- RI SC loads) – Cache Pref etch: load into cache (MI PS I V, PowerPC, SPARC v. 9) – Special pref etching instructions cannot cause f aults; a f orm of speculat ive execut ion

- Pref et ching comes in t wo f lavors:

– Binding pref etch: Requests load directly into register. » Must be correct address and register! – Non- Binding pref etch: Load into cache. » Can be incorrect. Faults?

- I ssuing Pref et ch I nst ruct ions t akes t ime

– I s cost of pref etch issues < savings in reduced misses? – Higher superscalar reduces dif f iculty of issue bandwidth