P age 1

CS252/ Culler Lec 4. 1 1/ 31/ 02

CS252 Graduate Computer Architecture Lecture 4 Cache Design

January 31, 2002 Prof . David Culler

CS252/ Culler Lec 4. 2 1/ 31/ 02

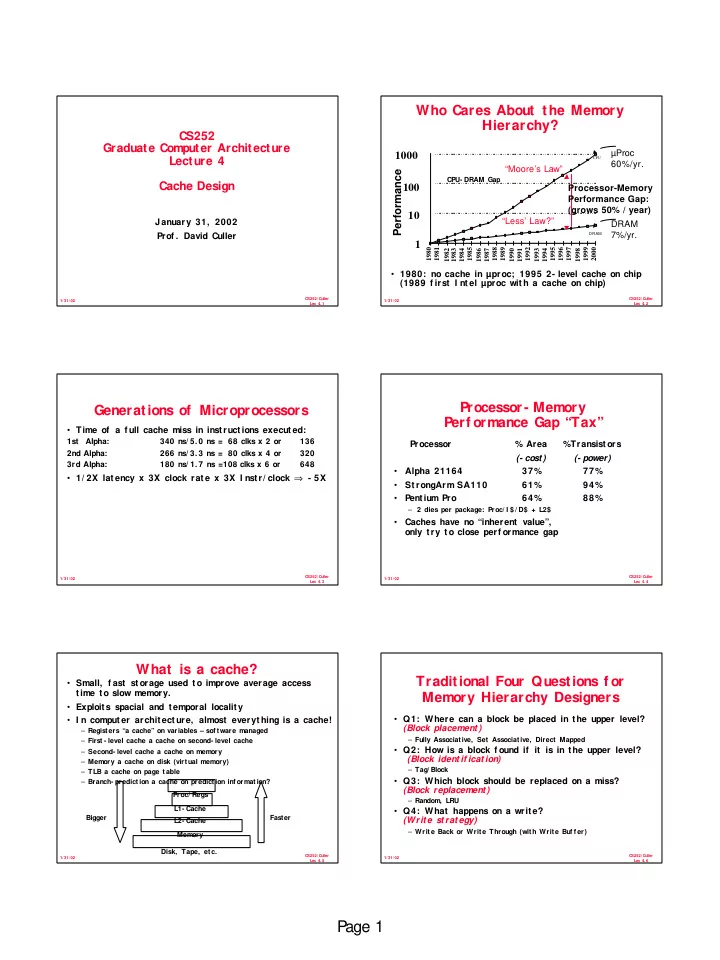

CPU- DRAM Gap

- 1980: no cache in µproc; 1995 2- level cache on chip

(1989 f irst I nt el µproc wit h a cache on chip)

Who Cares About the Memory Hierarchy?

µProc 60%/yr. DRAM 7%/yr.

1 10 100 1000

1980 1981 1983 1984 1985 1986 1987 1988 1989 1990 1991 1992 1993 1994 1995 1996 1997 1998 1999 2000

DRAM CPU

1982

Processor-Memory Performance Gap: (grows 50% / year)

Performance

“Moore’s Law” “Less’ Law?”

CS252/ Culler Lec 4. 3 1/ 31/ 02

Generations of Microprocessors

- Time of a f ull cache miss in inst ruct ions execut ed:

1st Alpha: 340 ns/ 5. 0 ns = 68 clks x 2 or 136 2nd Alpha: 266 ns/ 3. 3 ns = 80 clks x 4 or 320 3rd Alpha: 180 ns/ 1. 7 ns =108 clks x 6 or 648

- 1/ 2X lat ency x 3X clock rat e x 3X I nstr/ clock ⇒ - 5X

CS252/ Culler Lec 4. 4 1/ 31/ 02

Processor - Memory Perf ormance Gap “Tax”

Processor % Area %Transist ors (- cost) (- power)

- Alpha 21164

37% 77%

- St rongArm SA110

61% 94%

- Pentium Pro

64% 88%

– 2 dies per package: Proc/ I $/ D$ + L2$

- Caches have no “inherent value”,

- nly t ry t o close perf ormance gap

CS252/ Culler Lec 4. 5 1/ 31/ 02

What is a cache?

- Small, f ast st orage used t o improve average access

time to slow memory.

- Exploits spacial and temporal locality

- I n comput er archit ect ure, almost everyt hing is a cache!

– Registers “a cache” on variables – sof tware managed – First - level cache a cache on second- level cache – Second- level cache a cache on memory – Memory a cache on disk (virtual memory) – TLB a cache on page table – Branch- prediction a cache on prediction inf ormation? Proc/ Regs L1- Cache L2- Cache Memory Disk, Tape, etc. Bigger Faster

CS252/ Culler Lec 4. 6 1/ 31/ 02

Traditional Four Questions f or Memory Hierarchy Designers

- Q1: Where can a block be placed in t he upper level?

(Block placement )

– Fully Associative, Set Associative, Direct Mapped

- Q2: How is a block f ound if it is in t he upper level?

(Block ident if icat ion)

– Tag/ Block

- Q3: Which block should be replaced on a miss?

(Block replacement )

– Random, LRU

- Q4: What happens on a writ e?

(Write strategy)

– Write Back or Write Through (with Write Buf f er)