1

Lect ure 13

J une 14, 2005 CS 486/ 686

CS486/686 Lecture Slides (c) 2005 P. Poupart

2

Out line

- Markov Decision P

rocesses

- Dynamic Decision Net works

- Russell and Nor vig: Sect 17.1, 17.2 (up

t o p. 620), 17.4, 17.5

CS486/686 Lecture Slides (c) 2005 P. Poupart

3

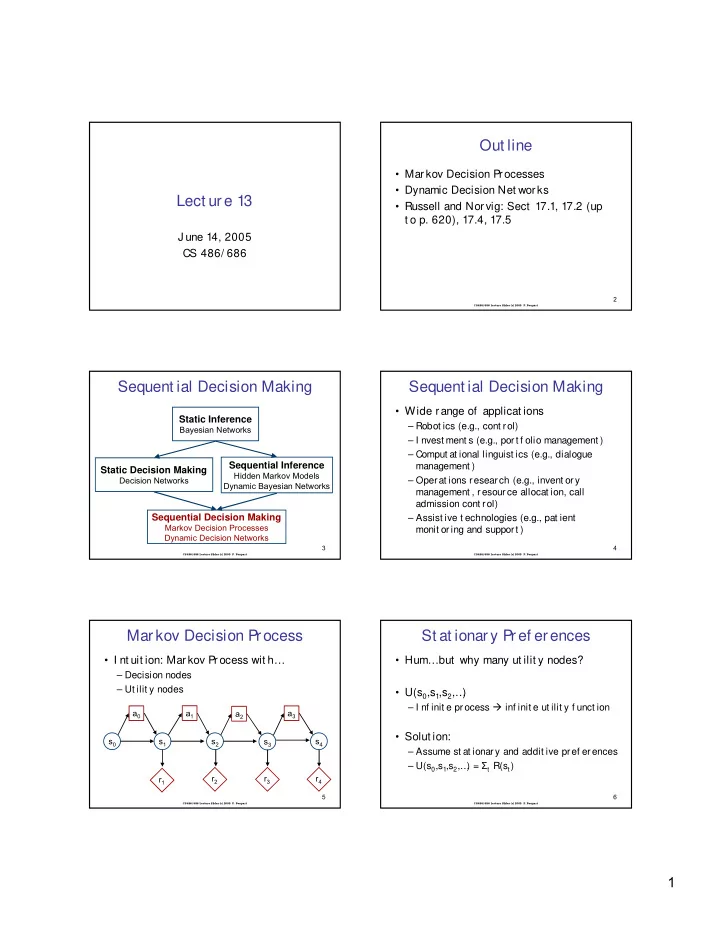

Sequent ial Decision Making

Static Inference

Bayesian Networks

Sequential Inference

Hidden Markov Models Dynamic Bayesian Networks

Static Decision Making

Decision Networks

Sequential Decision Making

Markov Decision Processes Dynamic Decision Networks

CS486/686 Lecture Slides (c) 2005 P. Poupart

4

Sequent ial Decision Making

- Wide range of applicat ions

– Robot ics (e.g., cont rol) – I nvest ment s (e.g., port f olio management ) – Comput at ional linguist ics (e.g., dialogue management ) – Operat ions research (e.g., invent ory management , resour ce allocat ion, call admission cont rol) – Assist ive t echnologies (e.g., pat ient monit or ing and support )

CS486/686 Lecture Slides (c) 2005 P. Poupart

5

- I nt uit ion: Mar kov Process wit h…

– Decision nodes – Ut ilit y nodes

Markov Decision Process

s0 s1 s2 s3 s4 a0 a1 a2 a3 r1 r2 r3 r4

CS486/686 Lecture Slides (c) 2005 P. Poupart

6

St at ionary Pref erences

- Hum…but why many ut ilit y nodes?

- U(s0,s1,s2,…

)

– I nf init e pr ocess inf init e ut ilit y f unct ion

- Solut ion: