
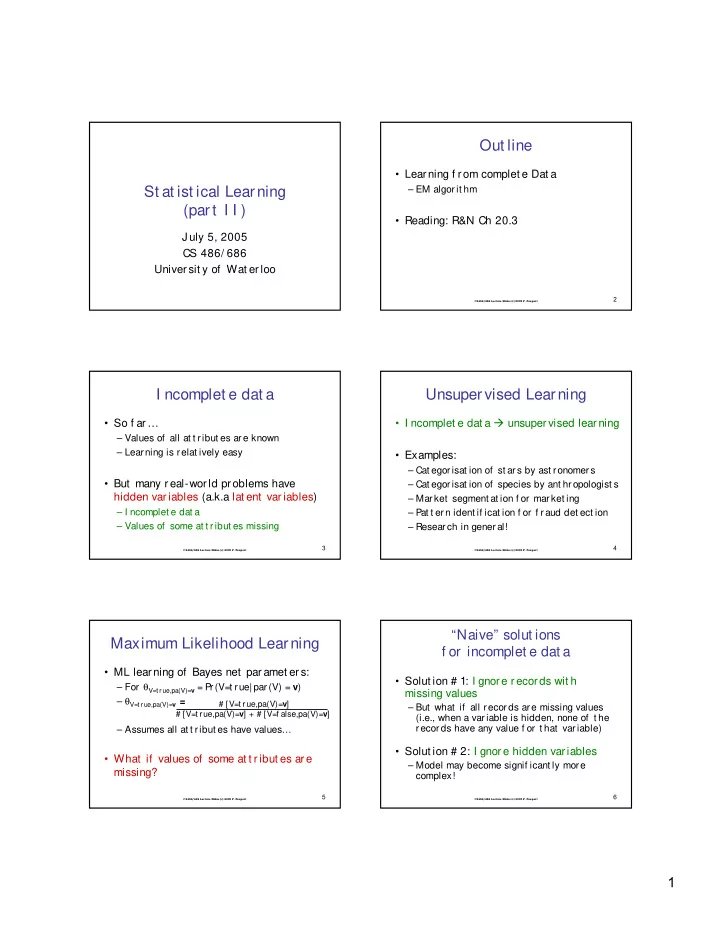

Statistical Learning (part II)

July 5, 2005 CS 486/686 University of Waterloo

CS486/686 Lecture Slides (c) 2005 P. Poupart


Outline

- Learning from incomplete data

– EM algorithm

- Reading: R&N Ch 20.3


Incomplete data

- So far…

– Values of all attributes are known

– Learning is relatively easy

- But many real-world problems have hidden variables (a.k.a. latent variables)

– Incomplete data

– Values of some attributes missing


Unsupervised Learning

- Incomplete data → unsupervised learning

- Examples:

– Categorisation of stars by astronomers

– Categorisation of species by anthropologists

– Market segmentation for marketing

– Pattern identification for fraud detection

– Research in general!


Maximum Likelihood Learning

- ML learning of Bayes net parameters:

– For $\theta_{V=true,\,pa(V)=v} = \Pr(V=true \mid pa(V)=v)$

– $\theta_{V=true,\,pa(V)=v} = \dfrac{\#[V=true,\,pa(V)=v]}{\#[V=true,\,pa(V)=v] + \#[V=false,\,pa(V)=v]}$

– Assumes all attributes have values…

- What if values of some attributes are missing?
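
The counting estimate on this slide can be sketched in a few lines of Python. This is a minimal illustration, assuming Boolean variables and records stored as dictionaries; the function name `ml_estimate`, the variable names `A` and `V`, and the toy records are hypothetical and not part of the lecture.

```python
from collections import Counter

def ml_estimate(records, child, parents):
    """Estimate Pr(child=True | parents=v) by counting, for every observed v.

    Assumes every record has a True/False value for the child and all parents
    (i.e. the data is complete).
    """
    true_counts = Counter()   # #[V=true, pa(V)=v]
    total_counts = Counter()  # #[V=true, pa(V)=v] + #[V=false, pa(V)=v]
    for record in records:
        v = tuple(record[p] for p in parents)
        total_counts[v] += 1
        if record[child]:
            true_counts[v] += 1
    return {v: true_counts[v] / total_counts[v] for v in total_counts}

# Hypothetical toy data: V has a single parent A.
records = [
    {"A": True, "V": True},
    {"A": True, "V": True},
    {"A": True, "V": False},
    {"A": False, "V": False},
]
theta = ml_estimate(records, child="V", parents=["A"])
print(theta)
```

Here `theta[(True,)]` is 2/3, since two of the three records with `A=True` have `V=True`. If a record had no value for `V` or `A`, it could not be assigned to any of the counts, which is exactly the difficulty the slide raises.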


“Naive” solutions for incomplete data

- Solution #1: Ignore records with missing values

– But what if all records are missing values (i.e., when a variable is hidden, none of the records have any value for that variable)?

- Solution #2: Ignore hidden variables